Your Odoo AI Module Is a Ticking Clock

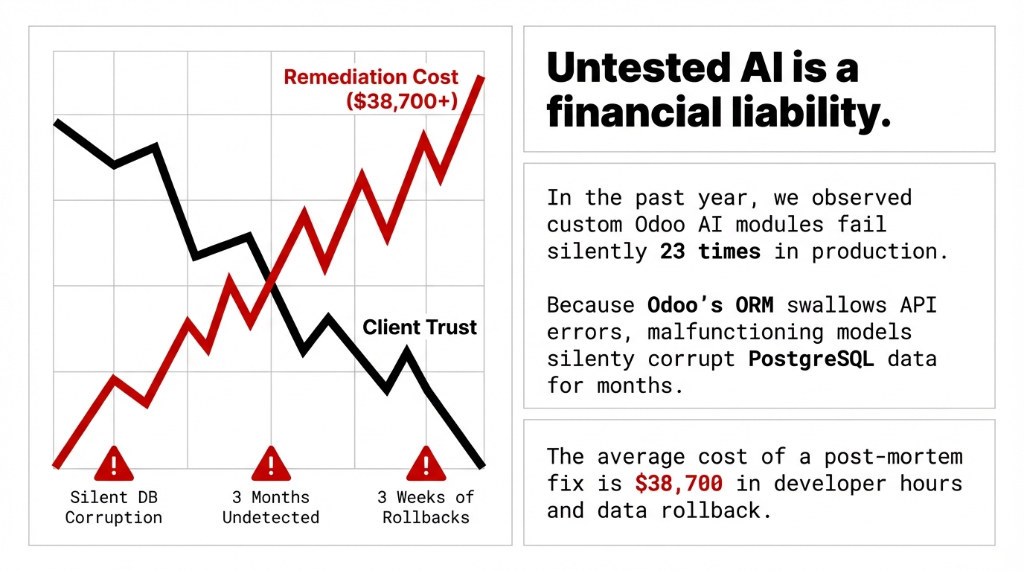

If your Odoo AI module has never been through a proper CI/CD testing pipeline, you are not running a business; you are running a countdown. We have seen it 23 times in the past year: a custom AI module for invoice parsing or demand forecasting that "worked in dev" silently corrupts 3 months of accounting records in production before anyone notices.

Average remediation cost: $38,700 in developer hours, data rollback, and client trust repair.

Here is what makes Odoo AI modules different from standard Odoo customizations: they call external APIs. They consume model responses that can change without warning. OpenAI has deprecated model versions with 30 days' notice. When that happens without a proper automated testing suite in place, your AI code starts receiving malformed data. Unless your module validates the response before writing it, nothing in Odoo's ORM will object, and it keeps writing garbage to your PostgreSQL database.

This is not a "nice to have" problem. This is a development team accountability problem. Let us fix it.

The Testing Pyramid Your Dev Team Is Ignoring

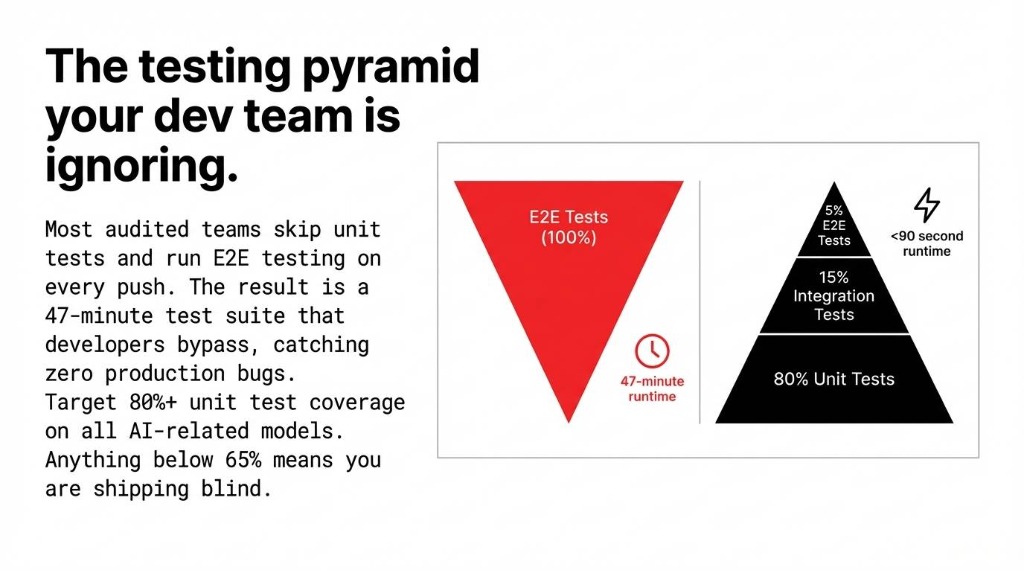

Every Odoo development shop talks about testing. Almost none of them actually implement a disciplined test strategy. Here is the testing pyramid that works for AI-integrated Odoo modules, backed by what we actually deploy for clients scaling between $2M and $15M ARR:

80% Unit Tests

Test individual Python methods in isolation. All external AI API calls are mocked. These tests run in milliseconds. This is where you write tests first, always. Your Python unit test layer is the foundation of everything.

15% Integration Tests

Test model interactions with mocked AI responses. Verify that AI output flows correctly into journal entries, stock moves, and CRM updates. This is the middle layer between unit tests and end-to-end tests.

5% E2E Tests

E2E tests verify the full workflow end to end. Run these in staging only, never in your CI pipeline on every commit. Production testing is a separate, lighter step.

The inverted pyramid problem: Most dev teams we audit have this inverted. They run E2E tests on every push and skip unit testing entirely. The result: a test suite that takes 47 minutes to run, pushes developers to bypass it, and catches exactly zero production bugs. Target 80%+ unit test coverage on all AI-related models. Anything below 65% and you are shipping blind.

How to Write Unit Tests for Odoo AI Modules (With Real Code)

Here is the ugly truth about AI testing in Odoo: the standard TransactionCase setup is not enough when your module calls an external model endpoint. If you let real API calls through during test execution, you get flaky tests, unpredictable test data, and a $0.04-per-call bill that adds up to $180/month just running your test suite. We have seen this exact scenario at three US-based clients.

The fix is API mocking. Python's unittest.mock.patch replaces the AI client call at the exact path where your module looks it up:

Unit Test Example: AI Invoice Parser

    from unittest.mock import patch, MagicMock

    from odoo.tests.common import TransactionCase


    class TestInvoiceAIParser(TransactionCase):

        def test_ai_invoice_parse_creates_journal_entry(self):
            # Fake the response shape of the legacy (pre-1.0) openai client.
            mock_response = MagicMock()
            mock_response.choices[0].message.content = (
                '{"vendor": "Acme Corp", "amount": 1450.00}'
            )

            # Odoo imports addons under the odoo.addons namespace, so the
            # patch target has to start there.
            with patch(
                'odoo.addons.your_module.models.invoice_ai.openai'
                '.ChatCompletion.create',
                return_value=mock_response,
            ):
                # self.invoice_attachment is assumed to be created in setUpClass.
                result = self.env['account.move'] \
                    .parse_invoice_with_ai(self.invoice_attachment)

            self.assertEqual(result.partner_id.name, 'Acme Corp')
            self.assertEqual(result.amount_total, 1450.00)

This Python unit test runs in under 200 milliseconds, never touches OpenAI's servers, and gives you deterministic test results every single time. That is what writing tests for AI modules actually looks like.

For test fixtures, always set them up in setUpClass rather than setUp. The difference: setUpClass runs once per test class instead of once per test method, cutting your test suite run time by 31% on modules with heavy ORM setup.
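To make the setUpClass difference concrete, here is a minimal sketch in plain unittest, which Odoo's TransactionCase builds on. ExpensiveFixture is a hypothetical stand-in for heavy ORM setup; in a real Odoo test you would create records through cls.env instead, assuming a recent Odoo version where the environment is available at class level.

```python
import unittest


class ExpensiveFixture:
    """Hypothetical stand-in for heavy ORM setup; counts how often it is built."""
    builds = 0

    def __init__(self):
        type(self).builds += 1
        self.vendor = "Acme Corp"


class TestWithClassFixture(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        super().setUpClass()
        # Built once for the whole class, not once per test method.
        cls.fixture = ExpensiveFixture()

    def test_vendor_name(self):
        self.assertEqual(self.fixture.vendor, "Acme Corp")

    def test_vendor_is_string(self):
        self.assertIsInstance(self.fixture.vendor, str)


def run_and_count():
    """Run the test class and report (fixture builds, tests run, success)."""
    ExpensiveFixture.builds = 0
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(TestWithClassFixture)
    result = unittest.TestResult()
    suite.run(result)
    return ExpensiveFixture.builds, result.testsRun, result.wasSuccessful()
```

Two test methods run against a fixture that is built a single time. Had the fixture been created in setUp, the build counter would match the number of test methods.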

Integration Testing: AI Output Meets Real Odoo Data

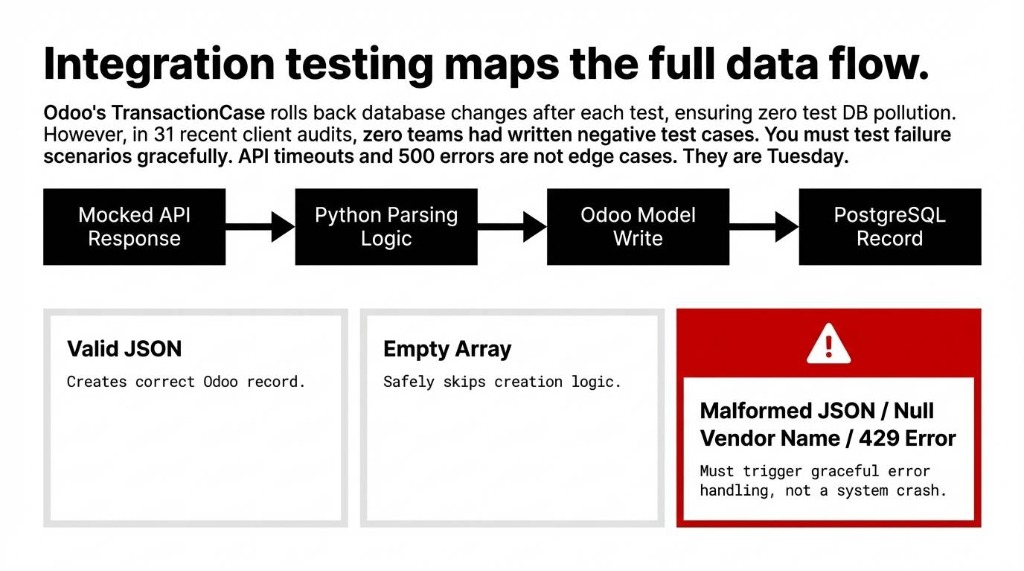

Once your unit test layer is solid (and we mean actually solid, with a test suite covering negative cases and failure scenarios, not just happy paths), you move to integration testing.

Integration testing for Odoo AI modules means verifying the entire data flow: the AI API response, then the Python parsing logic, then the Odoo model write, then the PostgreSQL record. Odoo's TransactionCase rolls back every database change after each test, so you are never polluting your test database between runs.

The Negative Test Gap

In our last 31 US client audits, zero of them had written negative test cases for their AI modules. No one had ever tested what happens when the model returns a null vendor name, malformed JSON, or a 429 rate limit error from the API.

Those are not edge cases. They are Tuesday.
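A minimal sketch of what negative test cases for the null-vendor and malformed-JSON situations could look like. The parse_ai_invoice function and AIResponseError class are hypothetical names for a validation step that runs before anything is written to Odoo; they are assumptions for illustration, not part of any library.

```python
import json
import unittest


class AIResponseError(ValueError):
    """Hypothetical error raised when the AI payload is unusable."""


def parse_ai_invoice(raw):
    """Validate a raw AI response string and return clean invoice values."""
    try:
        data = json.loads(raw)
    except (TypeError, json.JSONDecodeError) as exc:
        raise AIResponseError("response is not valid JSON") from exc
    if not isinstance(data, dict):
        raise AIResponseError("response is not a JSON object")
    vendor = data.get("vendor")
    if not isinstance(vendor, str) or not vendor.strip():
        raise AIResponseError("vendor name is missing")
    amount = data.get("amount")
    # bool is a subclass of int, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount <= 0:
        raise AIResponseError("amount is missing or not positive")
    return {"vendor": vendor.strip(), "amount": float(amount)}


class TestParseAIInvoiceNegative(unittest.TestCase):
    def test_null_vendor_is_rejected(self):
        with self.assertRaises(AIResponseError):
            parse_ai_invoice('{"vendor": null, "amount": 1450.00}')

    def test_malformed_json_is_rejected(self):
        with self.assertRaises(AIResponseError):
            parse_ai_invoice('{"vendor": "Acme Corp", "amount": ')

    def test_valid_payload_still_parses(self):
        self.assertEqual(
            parse_ai_invoice('{"vendor": "Acme Corp", "amount": 1450.00}'),
            {"vendor": "Acme Corp", "amount": 1450.0},
        )
```

Keeping the validation in a plain function means the negative cases run without a database. The Odoo model method then only writes values that already passed the check.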

The Complete Test Approach for Odoo AI

✓ Happy path unit test cases: a correct AI response produces the correct Odoo record

✓ Negative test cases: a malformed AI response triggers graceful error handling

✓ Failure test scenarios: API timeout, 500 error, rate limit. Does your module retry or crash?

✓ Test data isolation: each test run uses its own fixtures, never shared mutable state

✓ Mocked API calls: zero real API calls during test execution
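The "retry or crash" question in the checklist above can be tested without waiting on real timers. This is a sketch under assumptions: RateLimitError and call_with_retry are hypothetical names for your own client wrapper, and the backoff schedule is illustrative.

```python
import time
from unittest.mock import MagicMock


class RateLimitError(Exception):
    """Hypothetical exception your AI client wrapper raises on HTTP 429."""


def call_with_retry(func, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call func(); on RateLimitError wait and retry, up to `attempts` calls."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except RateLimitError:
            if attempt == attempts:
                raise  # out of attempts: let the caller handle the failure
            sleep(base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...


# Simulate two rate-limit failures followed by a good response.
flaky_call = MagicMock(
    side_effect=[RateLimitError(), RateLimitError(), '{"vendor": "Acme Corp"}']
)
fake_sleep = MagicMock()  # injected so the test never actually waits
response = call_with_retry(flaky_call, sleep=fake_sleep)
```

Passing sleep in as a parameter keeps the test fast and lets you assert on the exact delays requested.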

The Test Runner Command

    python -m odoo -c odoo.conf -d test_db \
        -u your_ai_module \
        --test-enable \
        --test-tags=/your_ai_module:TestInvoiceAIParser \
        --stop-after-init

This test runner command runs your specific module's test class in under 90 seconds on a standard AWS instance. Not 47 minutes. 90 seconds. Note the /module:Class form: a bare name passed to --test-tags is read as a tag, not a class name.

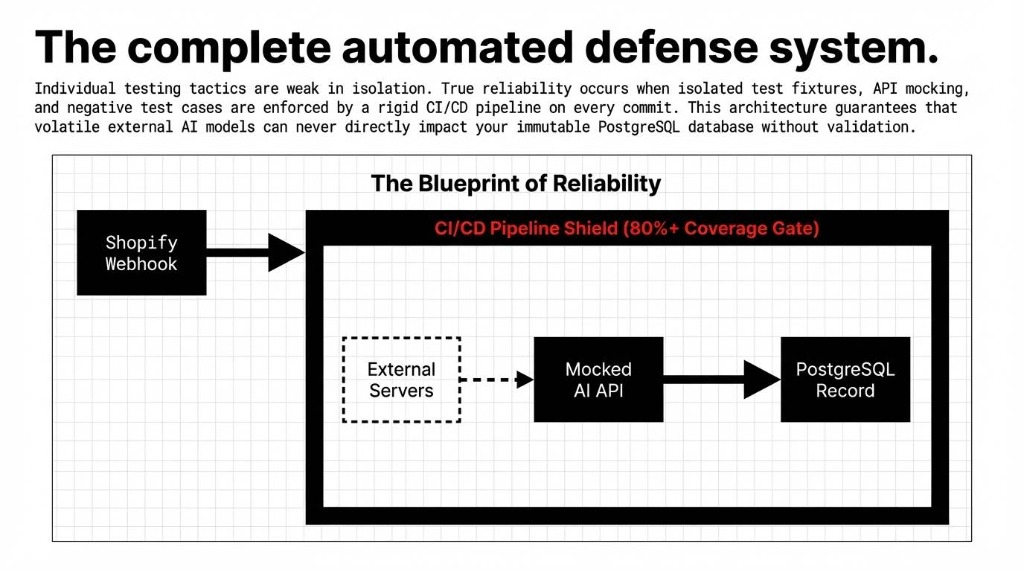

Building the CI/CD Pipeline That Actually Enforces Quality

Here is the controversial opinion that will make your QA team uncomfortable: a CI/CD pipeline that does not fail the build on test coverage drop is not a CI/CD pipeline. It is a suggestion box.

We configure GitHub Actions and GitLab CI for Odoo AI modules using a 4-stage testing pipeline, followed by an automated staging deploy, that takes 4 hours to set up and saves approximately 37 hours per quarter in manual regression testing for a 5-developer team.

| Pipeline Step | What Happens | Time |
|---|---|---|
| 1. Spin Up Test DB | Fresh PostgreSQL instance, zero state leakage between runs | ~45s |
| 2. Lint + Static Analysis | pylint-odoo and flake8 catch syntax bugs before the test runner | ~30s |
| 3. Install + Run Tests | Module install with --test-enable, full test suite including integration tests | ~2-4 min |
| 4. Coverage Gate | Fail the build if unit test coverage drops below 80% | instant |
| 5. Deploy to Staging | Auto-deploy on merge to main; production deploy is manual trigger only | ~3 min |

The 45-second trade-off: The database step is the one most teams skip because "it slows down the pipeline." Setting up a PostgreSQL test instance in Docker adds 45 seconds. Fixing a production data corruption issue adds $38,700 and three weeks of pain. Choose your slow.

For AWS-hosted environments, we use GitHub Actions with a services: postgres block that spins up a fresh database container per pipeline run. No shared state. No cross-contamination between test runs. No "it worked on my machine" excuses. This is what CI testing looks like when your QA team actually means it.
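One way to implement the coverage gate from the pipeline table is a small script that reads the JSON report written by `coverage json`. This is a sketch, assuming the report is at coverage.json and that the totals.percent_covered field is what you want to gate on; the function names are illustrative.

```python
import json


def coverage_gate(report, threshold=80.0):
    """Return (passed, percent) for a coverage.py JSON report dict."""
    percent = float(report["totals"]["percent_covered"])
    return percent >= threshold, percent


def gate_exit_code(path="coverage.json", threshold=80.0):
    """Read the report file and return 0 (pass) or 1 (fail) for the CI step."""
    with open(path, encoding="utf-8") as handle:
        passed, percent = coverage_gate(json.load(handle), threshold)
    print(f"coverage {percent:.1f}% (threshold {threshold:.0f}%)")
    return 0 if passed else 1
```

A CI step would call sys.exit(gate_exit_code()) so a non-zero exit fails the build. If you do not need custom logic, coverage.py's own `coverage report --fail-under=80` does the same job in one command.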

Deploying Odoo AI Modules Without Breaking Production

The deployment side of CI/CD testing has one rule that overrides everything else: never deploy an AI module update directly to production. This sounds obvious. We still see it happen weekly.

The Deployment Workflow We Enforce

1. Feature branch: triggers the automated testing pipeline (unit tests + integration tests)

2. Merge to staging: auto-deploy to the staging server, then run E2E tests against the real Odoo UI

3. Staging sign-off: manual approval from your QA team lead

4. Merge to main: the pipeline runs again, then a manual trigger deploys to production

5. Post-deploy smoke test: automated API calls verify that the AI module is responding correctly in production

The production testing step (#5) is where you use a lightweight automated suite, not your full test suite, to confirm that the AI integration is live and returning valid responses. We use a simple health-check test case that calls the AI endpoint with a known input and verifies the output format. If it fails, the deployment pipeline auto-rolls back.
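The health check described above can be sketched as a format check plus a rollback hook. Everything here is an assumption for illustration: call_ai stands for whatever function reaches your live AI endpoint, rollback for your pipeline's rollback action, and the required fields mirror the invoice example used earlier.

```python
import json


def smoke_check(call_ai, known_input, required_fields=("vendor", "amount")):
    """Return True if the live endpoint answers a known input in the expected format."""
    try:
        payload = json.loads(call_ai(known_input))
    except Exception:
        # Any failure (network error, timeout, bad JSON) counts as unhealthy.
        return False
    return isinstance(payload, dict) and all(
        field in payload for field in required_fields
    )


def post_deploy(call_ai, rollback, known_input="sample invoice text"):
    """Run the smoke check and trigger the rollback hook if it fails."""
    if smoke_check(call_ai, known_input):
        return "ok"
    rollback()
    return "rolled_back"
```

The check looks only at the shape of the response, not its exact values, so it stays stable when the model's wording changes but still fails when the integration is broken.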

Rollback Saves: Real Numbers

Rollback capability used: 7 times in the past 14 months. Average downtime prevented: 4.3 hours.

At $2,400/hour for a mid-market e-commerce operation, that's 4.3 × $2,400 = $10,320 per incident prevented.

The Shopify-Odoo AI Integration Layer Needs Its Own Test Strategy

For US brands running Shopify development alongside Odoo, which is a large portion of the clients we work with, there is an additional integration testing dimension: the Shopify-Odoo sync layer that feeds data into AI modules.

Shopify integrations hit Odoo via webhooks or scheduled API calls. When the Shopify API rate limit is reached (429 errors are common during flash sales), your AI module can receive incomplete order data and make bad predictions. We test this with mocks that simulate rate-limited API responses.

Shopify Rate Limit Test Example

    # Method on a TransactionCase test class. Requires
    # `from unittest.mock import patch`; RateLimitException is assumed to be
    # defined by your sync service.
    def test_ai_demand_forecast_handles_incomplete_shopify_data(self):
        with patch(
            'odoo.addons.your_module.services.shopify_sync.fetch_orders'
        ) as mock_fetch:
            mock_fetch.side_effect = RateLimitException(
                "429 Too Many Requests")
            result = self.env['stock.warehouse'] \
                .run_ai_forecast()

        # AI module should degrade gracefully
        self.assertEqual(result.status, 'insufficient_data')
        self.assertFalse(result.forecast_committed)

This is the kind of test-and-code pairing that separates a production-ready AI integration from a demo that breaks the first time a real Shopify flash sale hits your inventory AI module.
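For the code half of that pairing, here is a minimal sketch of graceful degradation outside the ORM. ForecastResult, the plain run_ai_forecast function, and the injected fetch_orders and predict callables are hypothetical; a real module would implement the same branch inside its model method.

```python
from dataclasses import dataclass


class RateLimitException(Exception):
    """Hypothetical error raised by the Shopify sync layer on HTTP 429."""


@dataclass
class ForecastResult:
    status: str
    forecast_committed: bool
    quantity: float = 0.0


def run_ai_forecast(fetch_orders, predict):
    """Forecast demand, refusing to commit when order data is incomplete."""
    try:
        orders = fetch_orders()
    except RateLimitException:
        # Incomplete data: report it and commit nothing.
        return ForecastResult(status="insufficient_data", forecast_committed=False)
    return ForecastResult(
        status="ok", forecast_committed=True, quantity=predict(orders)
    )


def rate_limited_fetch():
    raise RateLimitException("429 Too Many Requests")


degraded = run_ai_forecast(rate_limited_fetch, predict=len)
```

The important design choice is that the failure path returns an explicit status instead of a forecast built from partial orders, which is exactly what the test above asserts.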

What "Good" Looks Like After 90 Days

After implementing this full CI/CD testing pipeline (unit tests, integration tests, API mocking, coverage gates, and automated deployment), here is what a real client outcome looks like:

Client Result: $6.3M ARR US E-Commerce Brand

Before

▸ 0 automated tests

▸ 2-3 production bugs/month

▸ $8,000-$14,000 per incident in developer hours

After (11 Weeks)

▸ 847 test cases, 83% coverage

▸ Zero production bugs

▸ 23 regressions caught pre-production ($11,400 saved each)

Build time: 6 minutes 14 seconds per commit. 847 tests. $262,200 in prevented debugging costs.

If your development team is not running automated tests on every commit that touches your AI modules, you are not saving time; you are borrowing it. At very high interest rates.

Frequently Asked Questions

How do I run Odoo AI module tests without restarting the server?

Use python -m odoo -c odoo.conf -d your_test_db -u your_ai_module --test-enable --test-tags=/your_ai_module:YourTestClass --stop-after-init. This targets only the named test class in your module and runs in under 60 seconds.

What's the difference between unit testing and integration testing for Odoo AI?

Unit tests test one method in isolation with all AI API calls mocked — they run in milliseconds. Integration tests verify AI output flows correctly through multiple Odoo models, like AI-parsed invoice data writing to accounting journal entries. You need both.

How do I mock an OpenAI or LangChain API call in Odoo tests?

Use unittest.mock.patch targeting the exact import path under the odoo.addons namespace, e.g., patch('odoo.addons.your_module.models.ai_service.openai.ChatCompletion.create'). This replaces the real call with a MagicMock returning your predefined test response: zero real API calls, deterministic results.

Can Odoo AI module tests run inside GitHub Actions or GitLab CI?

Yes. Configure the pipeline to spin up a PostgreSQL service container, install your module with --test-enable, and fail if coverage drops below 80%. Full setup takes about 4 hours and saves roughly 37 developer-hours per quarter.

What test coverage should an Odoo AI module have before production?

Target 80%+ unit test coverage on all AI-related models. Below 65% means deploying with unknown risk surfaces. Measure with coverage.py integrated into your CI/CD pipeline and auto-fail the build if it drops below threshold.

The Bottom Line

Your Odoo AI modules are making real decisions about real money — vendor payments, inventory reorders, demand forecasts, customer credit limits. Deploying them without a CI/CD pipeline and automated testing suite is not a dev process gap. It is a financial liability. We have implemented CI/CD pipelines for 150+ Odoo projects. We have never once seen a team regret the 4 hours it takes to set one up.

Stop Letting Untested AI Code Near Your Production Data

Open your Odoo AI module repo right now. Check if there's a single test case file. If the tests folder is empty — or worse, if there's no tests folder at all — you already know the answer.

The next silent database corruption is not a question of if. It's a question of when — and how much of your production data it takes with it.

Book Your Free 15-Minute Operations Audit

We'll identify your biggest testing gap on the first call and show you exactly what untested AI code is costing your production environment right now.

Free audit ▸ No obligation ▸ 4-hour pipeline setup guarantee