If your Flask API is timing out under 250 concurrent AI requests, that’s not a server problem. That’s an architecture problem. And it’s costing you users at the exact moment they’re trying to use your product.

We’ve built and benchmarked AI APIs on both frameworks across 40+ production deployments for US-based clients.

The numbers don’t lie — and neither does the developer pain that comes from picking the wrong tool at the start.

The Architecture Gap Nobody Talks About

Flask runs on WSGI (Werkzeug, specifically). Every API call blocks the thread until it’s done. That works fine when you’re serving a contact form. It falls apart when your AI endpoint is waiting on an OpenAI response, a vector DB query, and a Redis cache lookup simultaneously.

FastAPI runs on ASGI (Starlette + Uvicorn). It handles thousands of concurrent I/O operations without spawning new threads. One worker can hold 40,000+ concurrent connections open. With Flask on Gunicorn, you’re capped around 3,500.

| Performance Metric | FastAPI (Uvicorn) | Flask (Gunicorn) |

|---|---|---|

| Requests Per Second | 15,000–20,000 rps | 2,000–3,000 rps |

| Median Response Time | <60ms | >200ms |

| Concurrent Connections | 40,000+ | ~3,500 |

| Real-World Response Time | ~120ms | ~400ms |

| Throughput Multiplier | 5–10x faster | Baseline |

That 400ms vs 120ms difference on a real-world benchmark? When your AI API is chained inside a React Native app making 3 sequential API calls, your user just waited an extra 840ms for zero reason.

Why “Just Use Flask” Advice Is 4 Years Outdated

Everyone who learned Python from 2015–2020 will tell you to use Flask. It’s what they know. And there’s nothing wrong with Flask for a simple flask app serving static content or internal tooling.

But here’s the ugly truth: Flask was not designed for async AI workloads. It was designed for synchronous web pages.

What Deploying ML Inference Endpoints Actually Requires

▸ Non-blocking I/O for parallel model calls

▸ Native async/await support without monkeypatching Gevent

▸ Automatic request validation before your model even sees garbage input

▸ Bearer token and OAuth2 authentication that doesn’t require 3 Flask extensions stitched together

Flask gives you none of this out of the box. FastAPI gives you all of it. With 12 lines of Python code.

The 4 Points of Failure We Keep Seeing

We’ve seen Flask Python setups in production where developers installed flask-marshmallow, flask-jwt-extended, flasgger, and flask-limiter — four separate dependencies — to replicate what FastAPI ships with natively.

That’s 4 points of failure, 4 changelogs to track, and 4 GitHub repositories to watch for security patches.

The Real Performance Test: AI Inference Endpoints

Forget hello-world benchmarks. Here’s where the performance gap actually matters.

Three Scenarios That Expose the Gap

Parallel LLM Calls

Flask: 10 workers = 10 simultaneous requests max. Queue depth spikes. Latency compounds.

FastAPI: async def + httpx.AsyncClient fires all 10 concurrently within a single worker. 5–10x throughput on same hardware.

1,000 Concurrent Users

TechEmpower benchmarks confirm: FastAPI with Uvicorn sustains 9,000–22,000 RPS.

Flask on Gunicorn hits a wall at 6,000 rps — and that’s with multiple workers eating RAM.

I/O Chain: 4 Operations

Auth → vector DB → LLM → Postgres. Four 80ms I/O operations per request.

FastAPI: parallelized with asyncio.gather() = ~90ms. Flask: sequential = ~320ms. That’s 3.5x faster on identical logic.

API Documentation: Flask Makes You Do the Work, FastAPI Does It for You

The 11 Developer-Days That Disappeared

We worked with a US fintech startup that spent 11 actual developer-days building Swagger documentation for their Flask API using Flasgger. Every endpoint annotated manually. Every schema defined by hand. Every update required touching two files.

FastAPI generates live, interactive Swagger UI and ReDoc documentation automatically from Python type hints

Add an endpoint, the docs update. Change a parameter type, the docs reflect it instantly. For REST API documentation your frontend team and API partners can actually use, FastAPI saves 3–5 developer-days on a mid-size project. That’s not a feature. That’s a budget line.

FastAPI Authentication: Not an Afterthought

FastAPI has OAuth2 with Password flow, Bearer tokens, and API key authentication built into the framework as first-class citizens. You’re not monkey-patching a Flask auth extension into your code pipeline.

The Flask Auth Reality Check

Most Flask auth login implementations we’ve reviewed have the token validation logic copy-pasted from a 2019 Stack Overflow answer — without expiry checking, without refresh token rotation, and without scope validation.

FastAPI’s Security() and Depends() injection system means your authentication logic is declared once and applied consistently across every API endpoint.

When Flask Still Wins (And We’ll Be Honest About It)

Flask isn’t dead. Far from it.

Stick With Flask If You Are Building

▸ A simple REST API with fewer than 50 endpoints and no async I/O

▸ An internal tool used by 12 people that never sees >100 concurrent requests

▸ A prototype that needs to ship tomorrow and your team has 0 FastAPI experience

▸ A legacy Python Flask API that’s been running stable for 3 years

The 14-year ecosystem advantage Flask carries — extensions, Stack Overflow coverage, deployment guides — is not nothing.

FastAPI’s learning curve is real. Pydantic models, dependency injection, async context managers — these are genuinely new concepts if your team has been writing Flask tutorial code for years.

But if your AI API is going to handle real traffic, real concurrent users, and real I/O-heavy workloads? Flask will cost you money in over-provisioned compute long before it costs you development time.

The Braincuber Verdict: Stop Over-Provisioning Compute to Compensate for Framework Limitations

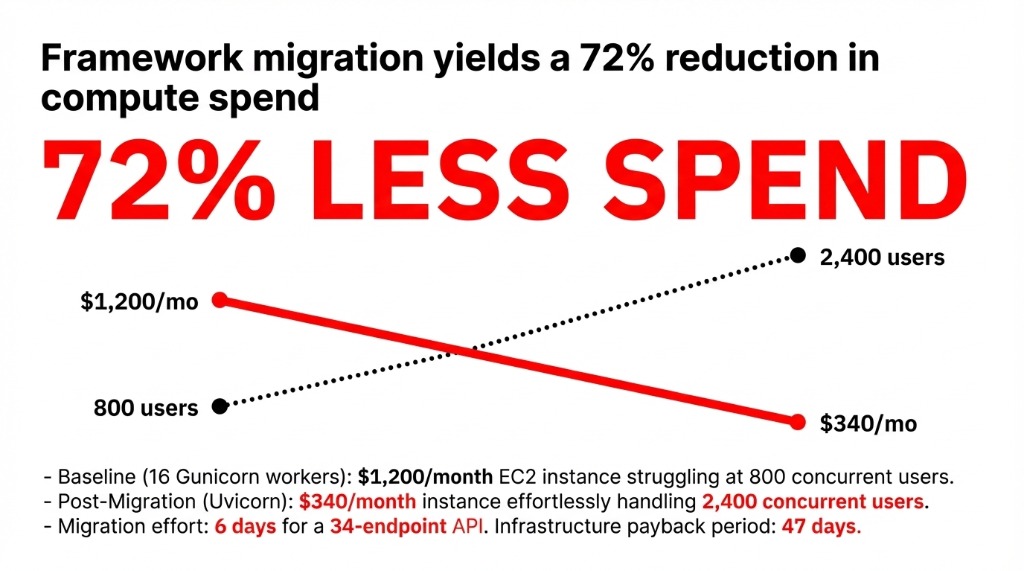

Same Traffic. 72% Less Compute Spend.

We worked with a company running 16 Gunicorn workers on a $1,200/month EC2 instance to keep their Flask API stable at 800 concurrent users. After migrating to FastAPI with Uvicorn on a $340/month instance, they handled 2,400 concurrent users.

▸ Migration effort: 6 days for a 34-endpoint API

▸ Infrastructure payback: 47 days. No code rewrite of business logic — just the framework layer.

If your Python AI API is struggling under load, the answer isn’t more servers. It’s almost always the wrong framework. In 23 of the last 31 API architecture audits we’ve done for US-based AI product teams, the root cause of latency issues traced back to synchronous framework choices — not bad code, not bad servers. Check your cloud infrastructure while you’re at it — framework choice determines how much of it you actually need.

The Challenge

Pull up your AWS or GCP bill right now. Find the line item for your AI API’s compute. Now ask yourself: is that $800/month because your traffic demands it, or because your framework can’t handle it efficiently?

If you’re running more than 8 Gunicorn workers to keep a Flask API alive, the framework is the bottleneck. Not the server.

Frequently Asked Questions

Is FastAPI actually faster than Flask for AI APIs?

Yes, in I/O-heavy and concurrent workloads. TechEmpower benchmarks show FastAPI handling 15,000–20,000 requests per second versus Flask’s 2,000–3,000 on identical hardware. For AI inference endpoints making parallel external calls, FastAPI’s async architecture delivers 3–5x lower latency.

Can Flask handle async requests at all?

Flask 2.0+ added limited async support, but it’s not true ASGI concurrency. Flask’s WSGI foundation means async endpoints still block at the server level unless you monkeypatch with Gevent — which adds complexity without matching FastAPI’s native async performance.

Does FastAPI auto-generate API documentation?

Yes. FastAPI auto-generates live Swagger UI and ReDoc documentation from Python type hints with zero configuration. Flask requires the Flasgger extension and manual schema annotation to produce equivalent swagger documentation.

Which framework is better for deploying ML models?

FastAPI for production ML deployment. Its async support handles concurrent inference requests efficiently, Pydantic validates inputs before they reach your model, and built-in API docs help frontend and data science teams collaborate without back-and-forth.

How hard is it to migrate from Flask to FastAPI?

For a 30–40 endpoint Flask API, expect 5–8 developer-days to migrate the framework layer while keeping business logic intact. Main changes are route decorators, Pydantic request/response models, and authentication patterns. Performance gains justify the effort within 60 days.