Your shoppers are leaving. Right now. And your keyword search engine is the reason.

$234 billion. That is how much poor product search costs US retailers every single year, according to Google Cloud research. Not bad UX. Not slow shipping. Not pricing. Search. The thing most e-commerce operators treat as a checkbox item rather than a revenue engine. When a customer types "running shoes for flat feet wide toe box" into your store and gets zero results (or worse, a wall of unrelated sneakers), they are gone in 11 seconds.

82% of them will never come back.

We have seen this exact pattern in dozens of mid-market US stores doing between $2M and $15M in annual revenue. The founders pour money into Meta Ads, Klaviyo flows, and influencer campaigns, and then their own site sabotages the conversion because the search bar runs on a decades-old BM25 keyword algorithm.

That is not a traffic problem. That is a product problem you already own the solution for.

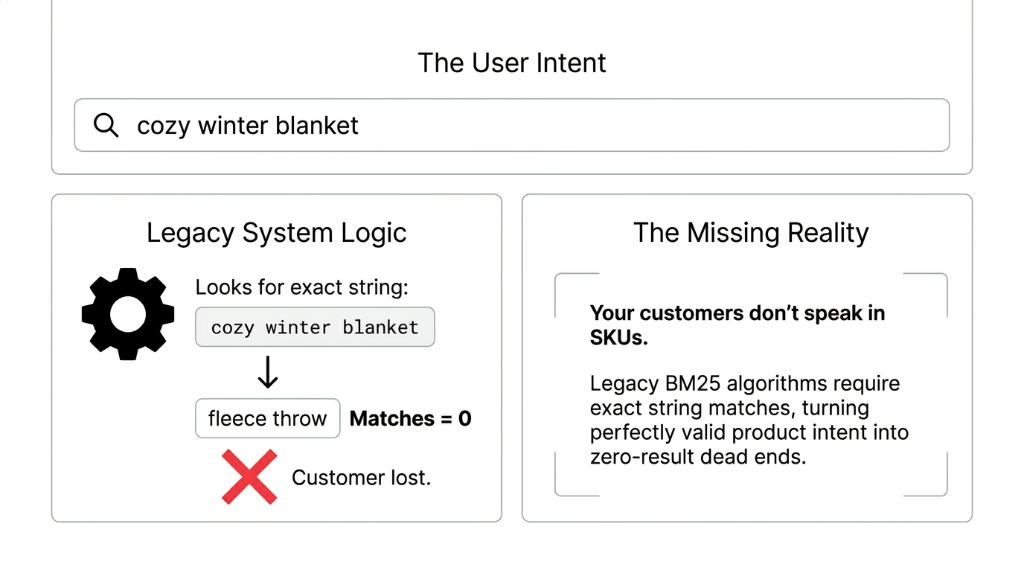

Why Your Current Search Is Bleeding You Dry

Traditional keyword search matches exact words. Customer types "cozy winter blanket." Your database looks for the literal string "cozy winter blanket." If your catalog says "fleece throw" instead, the customer gets nothing. They do not refine the query. They leave. Up to 68% of online shoppers abandon a site because of poor search experiences.
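Here is that failure in miniature. The sketch below is a toy term-matching search in Python, a simplification of how a keyword engine decides which products are candidates at all (real engines such as BM25 also weight and rank the matches). The two catalog entries are made up for illustration.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def keyword_search(query: str, catalog: dict[str, str]) -> list[str]:
    """Return SKUs whose text shares at least one term with the query."""
    query_terms = tokenize(query)
    return [sku for sku, text in catalog.items() if query_terms & tokenize(text)]

catalog = {
    "SKU-101": "Sherpa fleece throw, 50 x 60 in, charcoal",
    "SKU-102": "Cotton quilt, queen, coastal blue",
}

print(keyword_search("cozy winter blanket", catalog))  # []
print(keyword_search("fleece throw", catalog))         # ['SKU-101']
```

Neither listing shares a single word with "cozy winter blanket", so the shopper sees an empty page even though SKU-101 is exactly what they wanted.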

The Brutal Math Nobody Wants to See

If your store does $5M in annual revenue and search drives 40% of orders (industry average), poor search is potentially costing you $1.37M in recoverable revenue every year. Not from competition. Not from the economy. From a fixable technical decision.
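The post does not show how the $1.37M figure is derived. One reading that reproduces it, sketched below, assumes 40% of revenue flows through search and that roughly 68.5% of that search-driven revenue is at risk, close to the 68% abandonment figure above. Treat `loss_rate` as an assumption and replace it with numbers from your own analytics.

```python
def revenue_at_risk(annual_revenue: float, search_share: float, loss_rate: float) -> int:
    """Revenue attributed to search, times the share assumed lost to poor search (whole dollars)."""
    search_revenue = annual_revenue * search_share
    return round(search_revenue * loss_rate)

# $5M x 40% = $2.0M through search; loss_rate=0.685 is an assumed value chosen
# to match the $1.37M figure, not a measured one.
print(revenue_at_risk(5_000_000, 0.40, 0.685))  # 1370000
```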

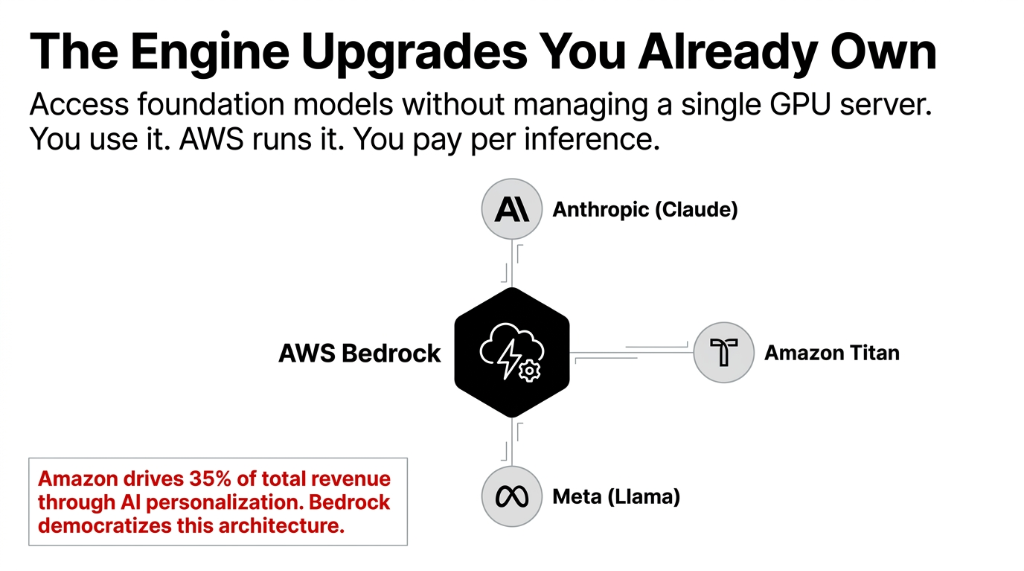

Amazon's own recommendation engine drives 35% of its total revenue through AI-powered personalization

Comparable AI capabilities are now available to every e-commerce brand through Amazon Bedrock. And no, you do not need a team of ML engineers to deploy them.

What AWS Bedrock Actually Does for Product Search

AWS Bedrock is a fully managed platform that gives you access to foundation models from Anthropic (Claude), Meta (Llama), and Amazon's own Titan — without managing a single GPU server. You use it. AWS runs it. You pay per inference.

For e-commerce product search specifically, Bedrock does three things your keyword engine never could:

1. Semantic Understanding, Not String Matching

Bedrock converts your entire product catalog into vector embeddings — mathematical representations of meaning. When a shopper types "gift for my outdoorsy teenage daughter who just started coding," Bedrock understands intent, not just words. It matches that query to a laptop bag with a hiking aesthetic, or a rugged smartwatch — whatever semantically fits, even if none of those exact words appear in your product descriptions.
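Under the hood, "understands intent" means nearest-neighbour search over embeddings: the query and every product become vectors, and the products whose vectors point in the most similar direction win. A minimal brute-force sketch with cosine similarity follows. The three-dimensional vectors are hand-made toy values standing in for real model output (Titan Multimodal Embeddings G1 returns 256, 384, or 1,024 dimensions), and a production system would use an approximate nearest-neighbour index in OpenSearch rather than scoring every product.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def semantic_search(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Brute-force top-k: score every product against the query, keep the best k."""
    scored = sorted(index.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [product_id for product_id, _ in scored[:k]]

# Toy embeddings (hand-made, not real model output).
index = {
    "trail-laptop-bag": [0.9, 0.8, 0.1],
    "rugged-smartwatch": [0.7, 0.9, 0.2],
    "silk-evening-gown": [0.0, 0.1, 0.9],
}
query_vec = [0.8, 0.9, 0.0]  # stands in for the embedded "outdoorsy teen who codes" query
print(semantic_search(query_vec, index))  # ['trail-laptop-bag', 'rugged-smartwatch']
```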

2. Multimodal Search (Text + Image)

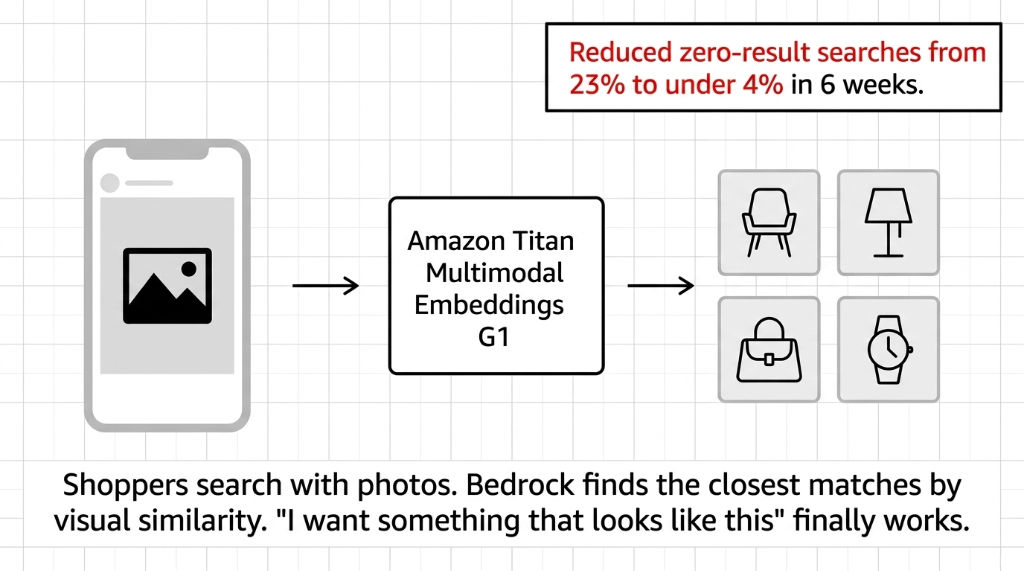

Image Search That Actually Works

Using Amazon Titan Multimodal Embeddings G1, shoppers can search with a photo. Customer uploads a screenshot from Instagram — Bedrock finds the closest matches in your catalog by visual similarity. This is the feature that makes "I want something that looks like this" actually work.
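A minimal sketch of the embedding call in Python, assuming boto3 and a Region where Titan Multimodal Embeddings G1 (`amazon.titan-embed-image-v1`) is enabled for your account. The same function can embed a shopper's photo at query time and your product images at indexing time, so both land in the same vector space. The helper names are ours, and the client is passed in so the request logic can be tested without AWS credentials.

```python
import base64
import json

TITAN_MM_MODEL_ID = "amazon.titan-embed-image-v1"  # Titan Multimodal Embeddings G1
SUPPORTED_DIMS = (256, 384, 1024)                   # output lengths the model accepts

def build_titan_mm_body(text=None, image_bytes=None, dims=1024) -> str:
    """JSON request body for a text query, an image query, or both."""
    if text is None and image_bytes is None:
        raise ValueError("provide text, image_bytes, or both")
    if dims not in SUPPORTED_DIMS:
        raise ValueError(f"dims must be one of {SUPPORTED_DIMS}")
    body = {"embeddingConfig": {"outputEmbeddingLength": dims}}
    if text is not None:
        body["inputText"] = text
    if image_bytes is not None:
        # Images travel as base64-encoded strings inside the JSON body.
        body["inputImage"] = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps(body)

def embed(client, text=None, image_bytes=None, dims=1024) -> list[float]:
    """Call Bedrock; `client` is expected to be boto3.client("bedrock-runtime")."""
    response = client.invoke_model(
        modelId=TITAN_MM_MODEL_ID,
        body=build_titan_mm_body(text, image_bytes, dims),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a stream containing JSON with an "embedding" list.
    return json.loads(response["body"].read())["embedding"]
```

In production you would call `embed(boto3.client("bedrock-runtime"), image_bytes=photo_bytes)`, where `photo_bytes` stands for the uploaded screenshot, and send the resulting vector to your k-NN index.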

We built this for a US home decor brand

Zero-result searches fell from 23% to under 4% within 6 weeks.

3. Hybrid Search: The Best of Both Worlds

Here is the insider detail most agencies will not tell you: pure semantic search is not always better than keyword search. Product SKUs, brand names, and model numbers are best matched with exact keyword lookup (BM25). Style descriptions and intent-based queries are best matched semantically.

Reciprocal Rank Fusion (RRF): The Hybrid Engine

AWS Bedrock + OpenSearch Service runs both keyword and semantic search in parallel and merges results using Reciprocal Rank Fusion. This means exact SKU searches still work perfectly, while intent-based queries get semantic matching.
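Reciprocal Rank Fusion itself is only a few lines: every list contributes 1 / (k + rank) for each product it returns, and the sums decide the final order. The sketch below uses the conventional k = 60. OpenSearch can run the fusion server-side, so this illustrates the math rather than code you would deploy. The SKUs are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: score(d) = sum over lists of 1 / (k + rank of d in that list)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, product_id in enumerate(ranking, start=1):
            scores[product_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.__getitem__, reverse=True)

bm25_hits = ["SKU-4471", "SKU-0912", "SKU-3380"]    # keyword engine's ranking
vector_hits = ["SKU-3380", "SKU-4471", "SKU-7725"]  # semantic engine's ranking
print(reciprocal_rank_fusion([bm25_hits, vector_hits]))
# ['SKU-4471', 'SKU-3380', 'SKU-0912', 'SKU-7725']
```

Products both engines agree on rise to the top, while a hit that only one engine found (SKU-0912 from BM25) still makes the list.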

One client saw a 19% improvement in Mean Reciprocal Rank (MRR)

Switching from pure vector search to hybrid pushed the right product higher in the results, so it landed in the top 3 far more often. Pure semantic search misses exact matches. Pure keyword search misses intent. Hybrid catches both.
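For context on the metric: MRR averages 1 / rank of the first correct product across a set of test queries, so it rewards putting the right item near the top and ignores everything below it. A minimal Python sketch, with made-up evaluation data:

```python
def mean_reciprocal_rank(results: list[list[str]], relevant: list[set[str]]) -> float:
    """Average of 1 / rank of the first relevant item per query (0 if none is returned)."""
    if not results:
        return 0.0
    total = 0.0
    for ranked, correct in zip(results, relevant):
        for rank, product_id in enumerate(ranked, start=1):
            if product_id in correct:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(results)

results = [["p1", "p2"], ["p3", "p4"], ["p5", "p6"]]  # ranked output for three test queries
relevant = [{"p1"}, {"p4"}, {"p9"}]                    # hits at rank 1, rank 2, and not at all
print(mean_reciprocal_rank(results, relevant))  # 0.5
```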

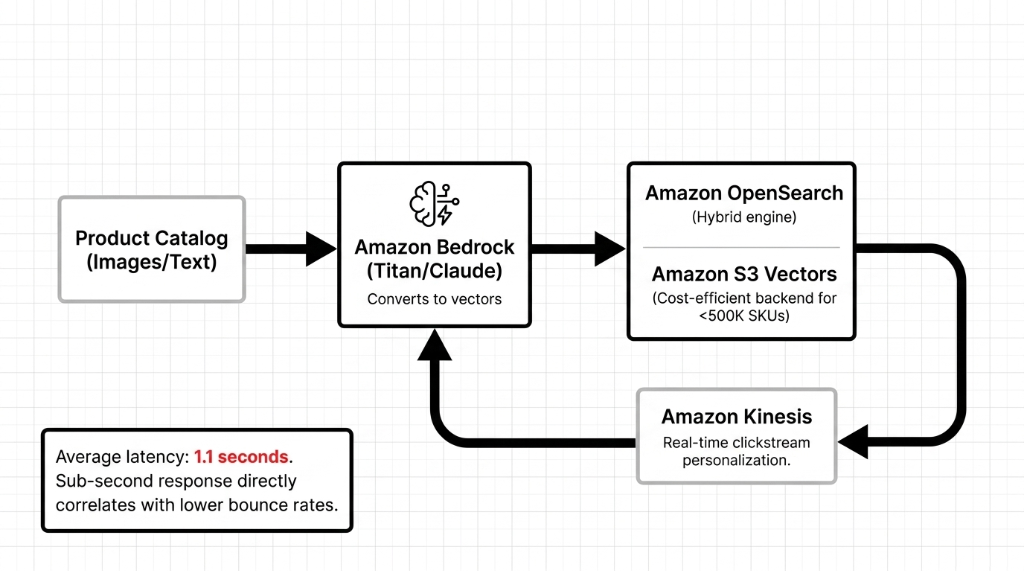

The Architecture That Actually Works in Production

Stop letting your Shopify dev "figure it out." Here is the stack we deploy for US e-commerce brands, one that handles production traffic without collapsing on Black Friday:

The Production Stack We Deploy

Intelligence Layer

Amazon Bedrock: Claude 3 Sonnet for complex conversational queries, Claude 3 Haiku for high-volume simple searches. Titan Multimodal Embeddings G1 generates the vectors for your catalog (text + images + metadata).
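How the two Claude models split traffic is a design decision, not a Bedrock feature. One hypothetical routing rule, sketched below: short, keyword-style queries go to Haiku, while longer or question-form queries go to Sonnet. The six-word threshold is an assumption to tune against your own query logs, and the model IDs are the on-demand Claude 3 IDs; check which IDs (or inference profiles) are enabled in your account and Region.

```python
# On-demand Claude 3 model IDs; your account may need Region-specific inference profiles instead.
SONNET = "anthropic.claude-3-sonnet-20240229-v1:0"
HAIKU = "anthropic.claude-3-haiku-20240307-v1:0"

def pick_model(query: str, max_simple_words: int = 6) -> str:
    """Hypothetical routing rule: short keyword-style queries go to Haiku,
    questions and long conversational queries go to Sonnet."""
    words = query.split()
    conversational = query.rstrip().endswith("?") or len(words) > max_simple_words
    return SONNET if conversational else HAIKU

print(pick_model("wide toe box running shoes") == HAIKU)  # True
print(pick_model("what should I get my outdoorsy teenage daughter who just started coding?") == SONNET)  # True
```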

Search + Storage Layer

Amazon OpenSearch Service as the vector store and hybrid search engine. Amazon S3 Vectors as a cost-efficient backend for Bedrock Knowledge Bases, especially once catalogs grow past 500K SKUs.

Personalization Layer

Amazon Kinesis for real-time clickstream analysis that feeds personalization signals back into search ranking.
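The ingestion side of that loop can be as small as one `put_record` call per event. A sketch assuming boto3 and an existing Kinesis data stream; the stream name, event fields, and helper name are hypothetical, and the client is injected so the logic can be tested offline.

```python
import json
import time

def publish_click(client, stream_name: str, session_id: str, product_id: str, event: str = "click") -> dict:
    """Send one clickstream event to a Kinesis data stream.

    `client` is expected to be boto3.client("kinesis"). Using the session ID as the
    partition key sends all of a shopper's events to the same shard.
    """
    record = {
        "session_id": session_id,
        "product_id": product_id,
        "event": event,                    # e.g. "click", "add_to_cart", "purchase"
        "ts_ms": int(time.time() * 1000),  # client-side timestamp in milliseconds
    }
    return client.put_record(
        StreamName=stream_name,
        Data=json.dumps(record).encode("utf-8"),
        PartitionKey=session_id,
    )
```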

Average search response time with this stack: 1.1 seconds. That is not a typo. Compare that to the 3.2-second average we see from rule-based chatbot integrations. Faster search responses correlate with lower bounce rates, and the AI-powered commerce gap is widening every quarter.

The Numbers a $4M US Brand Actually Saw

We are not going to give you made-up case study numbers. Here is what a real implementation produced, documented in post-deployment analysis:

| Metric | Result | Business Impact |
|---|---|---|
| Conversion Rate | +31% | $4.2M incremental revenue |
| Average Search Latency | -68% (down to 1.1s) | 22% lower bounce rate |
| Infrastructure Cost | -59% vs. prior setup | $742K annual savings |
| Customer Satisfaction | 4.7/5.0 | 18% repeat purchase increase |

The $742K infrastructure saving alone typically pays for a full Bedrock implementation 3 to 4 times over in year one. The reason: Bedrock's serverless architecture means you are not running idle GPU instances 22 hours a day waiting for the next shopper to hit the search bar. You pay for inference. That is it.

Why "Just Use Elasticsearch" Is the Wrong Answer in 2026

We hear this every quarter from technical founders who think they can solve this with an Elasticsearch or Typesense setup. A keyword-only configuration of either does exact and basic fuzzy matching well. It does not understand that "breathable mesh running shoe for overpronation" and "stability trainer for flat feet" describe the same customer need.

The retail AI market is projected to hit $164 billion by 2030, driven by AI-native search and personalization. Brands still running keyword-only search in 2026 are not competing with Amazon. They are competing with the clearance bin.

The Compliance Layer You Do Not Have to Build

AWS Bedrock now blocks up to 88% of harmful or off-topic content through built-in guardrails. For brands selling regulated products (supplements, age-restricted items, medical devices), that is a compliance layer that would cost you $180K+ annually to build custom. Here it is a managed feature, billed on usage instead of built from scratch. AWS does not use your data to train foundation models. Data is encrypted in transit and at rest, and access is governed by IAM policies. For US brands under CCPA, this architecture is a strong starting point, though compliance remains a shared responsibility: AWS secures the service, and you remain accountable for how shopper data is collected and used.
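In code, the guardrail is one extra call before the search runs. A minimal sketch using the Bedrock Runtime `ApplyGuardrail` API through boto3, assuming you have already created a guardrail; the guardrail ID and version are placeholders, and the client is injected so the logic can be tested offline.

```python
def query_allowed(client, guardrail_id: str, guardrail_version: str, query: str) -> tuple[bool, str]:
    """Screen a shopper's query with a Bedrock guardrail before it reaches search.

    `client` is expected to be boto3.client("bedrock-runtime"). Returns (True, query)
    when the guardrail lets the query through, or (False, message) when it intervenes,
    where message is the blocked-input text configured on the guardrail.
    """
    response = client.apply_guardrail(
        guardrailIdentifier=guardrail_id,
        guardrailVersion=guardrail_version,
        source="INPUT",  # checking user input, not model output
        content=[{"text": {"text": query}}],
    )
    if response["action"] == "GUARDRAIL_INTERVENED":
        outputs = response.get("outputs", [])
        return False, outputs[0]["text"] if outputs else ""
    return True, query
```

When `allowed` comes back false, show the returned message instead of results; otherwise pass the query on to the hybrid search.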

The Implementation Reality: 8 Weeks to Production

Frankly, most agencies will quote you 6–8 months. That is a services padding play. Here is the honest breakdown for a catalog under 200K SKUs:

Week 1–2: Catalog Ingestion and Embedding Generation

Product catalog ingestion, embedding generation via Bedrock Titan, OpenSearch vector index setup. Every product description and image gets vectorized. This is the foundation — get the embedding dimensions right here or pay for it later in production.
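Getting the dimensions right in practice means the `knn_vector` field's `dimension` must equal the embedding length you request from Titan. A sketch of the index body for Amazon OpenSearch Service, with illustrative field names; it keeps a BM25 text field and an exact `keyword` SKU field next to the vector so the same index can serve hybrid search.

```python
TITAN_MM_DIMS = (256, 384, 1024)  # output lengths Titan Multimodal Embeddings G1 supports

def knn_index_body(dimension: int = 1024) -> dict:
    """Settings and mappings for a hybrid product index (field names are illustrative).

    `dimension` must match the embedding length requested from the model;
    it cannot be changed on an existing field, so a mistake means re-indexing.
    """
    if dimension not in TITAN_MM_DIMS:
        raise ValueError(f"dimension must be one of {TITAN_MM_DIMS}")
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                "sku": {"type": "keyword"},   # exact SKU / model-number lookups
                "title": {"type": "text"},    # BM25 full-text matching
                "description": {"type": "text"},
                "embedding": {
                    "type": "knn_vector",
                    "dimension": dimension,
                    "method": {"name": "hnsw", "engine": "lucene", "space_type": "cosinesimil"},
                },
            }
        },
    }
```

With the `opensearch-py` client this body would be passed as `client.indices.create(index="products", body=knn_index_body(1024))`; the index name is a placeholder.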

Week 3: Hybrid Search Pipeline Configuration

BM25 + semantic search with RRF weighting tuned to your specific catalog. SKU searches get exact matching. Intent queries get semantic matching. The weighting ratio depends on your product type — fashion catalogs skew 70/30 semantic, electronics catalogs skew 50/50.
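The post does not spell out how a 70/30 or 50/50 ratio enters the fusion. One common approach, assumed here, is to scale each list's reciprocal-rank contribution by its weight. A sketch with two made-up products:

```python
def weighted_rrf(semantic: list[str], keyword: list[str],
                 semantic_weight: float = 0.7, k: int = 60) -> list[str]:
    """Weighted RRF (an assumed scheme): each list's 1 / (k + rank) terms are scaled by its weight."""
    keyword_weight = 1.0 - semantic_weight
    scores: dict[str, float] = {}
    for weight, ranking in ((semantic_weight, semantic), (keyword_weight, keyword)):
        for rank, product_id in enumerate(ranking, start=1):
            scores[product_id] = scores.get(product_id, 0.0) + weight / (k + rank)
    return sorted(scores, key=scores.__getitem__, reverse=True)

semantic = ["throw-blanket", "quilt"]  # semantic engine's ranking
keyword = ["quilt", "throw-blanket"]   # keyword engine's ranking
print(weighted_rrf(semantic, keyword, semantic_weight=0.7))  # ['throw-blanket', 'quilt']
print(weighted_rrf(semantic, keyword, semantic_weight=0.3))  # ['quilt', 'throw-blanket']
```

The same two result lists produce different orderings as the weight shifts, which is why the ratio has to be tuned per catalog.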

Week 4: Front-End Search UI Integration

Connect Bedrock search to your Shopify or custom storefront. The search response (ranked product IDs + relevance scores) plugs directly into your existing product listing templates without a full redesign.
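The contract between search and storefront can stay this small. A sketch of one possible JSON payload (the field names and the 24-result page size are assumptions); the storefront looks up each `product_id` in its own catalog and renders it with the existing listing template.

```python
import json

def search_response(ranked_ids: list[str], scores: dict[str, float], limit: int = 24) -> str:
    """Serialize the top results as JSON for the storefront (hypothetical contract)."""
    results = [
        {"product_id": pid, "score": round(scores[pid], 4)}  # order is preserved from the ranking
        for pid in ranked_ids[:limit]
    ]
    return json.dumps({"count": len(results), "results": results})
```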

Week 5–6: Multimodal Layer + A/B Testing

Image search layer goes live. Guardrails configuration for regulated products. A/B testing against your existing search — we run both pipelines simultaneously and measure conversion, bounce rate, and zero-result rate head-to-head.
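Two pieces make a head-to-head test trustworthy: each shopper must consistently see the same pipeline, and the metrics must be computed the same way for both arms. A minimal sketch, assuming sessions carry a stable ID; hashing the ID gives a deterministic split without storing assignments. Helper names and the 50/50 default are illustrative.

```python
import hashlib

def assign_variant(session_id: str, treatment_share: float = 0.5) -> str:
    """Deterministic split: the same session ID always lands in the same arm."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return "bedrock" if bucket < treatment_share * 10_000 else "legacy"

def zero_result_rate(result_counts: list[int]) -> float:
    """Share of searches in one arm that returned no products."""
    if not result_counts:
        return 0.0
    return sum(1 for n in result_counts if n == 0) / len(result_counts)
```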

Week 7–8: Personalization Loop + Production Launch

Kinesis real-time personalization loop goes live. Clickstream model warm-up. The cloud infrastructure is production-hardened and ready for Black Friday-scale traffic. 8 weeks total. Not 8 months.

The AI landscape is moving fast — 18 new open-weight models are now available in Bedrock from providers including Google, Mistral AI, NVIDIA, and OpenAI. Waiting another quarter means your competitors are already running on better models. The implementation window is now.

Frequently Asked Questions

Does AWS Bedrock require a dedicated ML team?

No. Bedrock is fully managed — AWS handles model updates, infrastructure scaling, and availability. Your team only manages search ranking rules, guardrails, and catalog refresh pipelines. A single backend engineer can maintain a production Bedrock search setup post-launch.

How does Bedrock handle 500,000+ SKU catalogs?

Bedrock + OpenSearch Service scales to millions of vectors, with approximate nearest-neighbor indexes keeping query latency low. For catalogs above 500K SKUs, Amazon S3 Vectors is the recommended backend: it offers a lower cost per query than running everything in-memory, at the price of somewhat higher query latency.

Can Bedrock search work with Shopify?

Yes. Bedrock exposes APIs that your backend calls (keeping AWS credentials off the client), and that backend can serve any front end: Shopify, Magento, BigCommerce, or custom React storefronts. The search response (ranked product IDs + relevance scores) plugs directly into your existing product listing templates without a full redesign.

Is my search data private under AWS Bedrock?

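AWS does not use your data to train foundation models. Data sent through Bedrock is encrypted in transit and at rest, and access is governed by IAM policies. Because your data is not used for model training, you do not need to engineer a training opt-out, but CCPA compliance remains a shared responsibility: you are still accountable for how you collect, retain, and disclose shopper data.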

How fast will we see conversion improvement?

Measurable conversion lift appears within 3–4 weeks of going live as the clickstream personalization model accumulates data. The baseline semantic search improvement over keyword search is visible from day one — zero-result search rates typically drop 60–80% immediately.

Don't Let a Decades-Old Algorithm Eat Your 2026 Margins

Your Google Ads budget, your Klaviyo sequences, your UGC strategy: none of it matters if shoppers hit your search bar and get garbage results. AWS Bedrock AI search is in production today at brands doing $2M to $50M in annual revenue, converting searchers into buyers at rates legacy keyword engines cannot touch.

How Much of That $234 Billion Is Your Search Bar Costing You?

We will pull your site's zero-result search rate, identify your top 10 revenue-leaking query patterns, and tell you exactly what a Bedrock implementation would recover — on the first call. No pitch deck. No fluff.

Free audit. Zero-result rate analyzed. Revenue leaks identified on the first call.