When AWS launched Bedrock as a managed platform for AI, the pitch was simple: call an API, skip the infrastructure. That is true, but dangerously incomplete.

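Here is what nobody tells you about AWS cost: Bedrock's on-demand pricing is linear. There is no volume discount, so the billionth token is billed at the same rate as the first. Claude 3 Sonnet costs roughly $3.00 per million input tokens, and output tokens are billed at a higher rate (roughly $15.00 per million). At 40 million tokens per day, with output tokens in the mix, you can be looking at $12,000/month just for inference.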
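The linear model can be sketched in a few lines. This is a minimal sketch, not a billing tool: the function name, the 30-day month, and the $15.00 output rate are assumptions added for illustration, and only the $3.00 input rate comes from the text.

```python
def bedrock_monthly_cost(input_tokens_per_day, output_tokens_per_day,
                         input_rate_per_million, output_rate_per_million,
                         days=30):
    """On-demand token cost: strictly linear in volume, no volume discount."""
    daily = (
        (input_tokens_per_day / 1_000_000) * input_rate_per_million
        + (output_tokens_per_day / 1_000_000) * output_rate_per_million
    )
    return daily * days


INPUT_RATE = 3.00    # USD per million input tokens (figure quoted in the text)
OUTPUT_RATE = 15.00  # USD per million output tokens (assumption, not from the text)

# Input side only: 40 million tokens per day over an assumed 30-day month.
input_only = bedrock_monthly_cost(40_000_000, 0, INPUT_RATE, OUTPUT_RATE)
print(f"Input-only monthly cost: ${input_only:,.2f}")

# Linearity check: doubling the volume doubles the bill.
doubled = bedrock_monthly_cost(80_000_000, 0, INPUT_RATE, OUTPUT_RATE)
print(f"Doubled volume: ${doubled:,.2f}")
```

Counting the input side alone, 40 million tokens per day comes to $3,600 over 30 days. Output tokens are billed on top of that at their own rate, so the input/output mix matters as much as the raw volume.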

Meanwhile, a team managing its own AI infrastructure on Amazon SageMaker with two ml.g5.2xlarge instances pays approximately $2,218/month for an equivalent workload.

That is a $9,782/month gap. We fixed this exact scenario for a US-based enterprise AI team in Austin, saving them $11,400/month by switching from Bedrock to SageMaker.

The Real AWS Architecture Behind Both

Think of it this way: Bedrock is a restaurant. SageMaker is a professional kitchen.

In Bedrock, you walk in and order from the menu. You never touch a server or deal with Amazon EC2 provisioning. The service is serverless by design.

In SageMaker, you are the chef. You choose your instance type, your hosting environment, and your plating. You own the architecture end to end.
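The difference shows up in what each service asks you to specify. The sketch below only builds the request payloads and does not call AWS. The helper names, the endpoint and model names, and the default values are hypothetical. The dictionary keys follow the parameters of boto3's `invoke_model` (Bedrock runtime) and `create_endpoint_config` (SageMaker), and the model ID is assumed to be the Claude 3 Sonnet identifier on Bedrock.

```python
import json


def bedrock_request(prompt,
                    model_id="anthropic.claude-3-sonnet-20240229-v1:0",
                    max_tokens=512):
    """Ordering from the menu: a model ID and a prompt, no hardware choices."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    }
    return {"modelId": model_id, "body": json.dumps(body)}


def sagemaker_endpoint_config(config_name, model_name,
                              instance_type="ml.g5.2xlarge",
                              instance_count=2):
    """Running the kitchen: you pick the instance type and how many to run."""
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,
                "InstanceType": instance_type,
                "InitialInstanceCount": instance_count,
            }
        ],
    }


req = bedrock_request("Summarize this support ticket.")
cfg = sagemaker_endpoint_config("demo-config", "demo-model")  # hypothetical names
print(sorted(req))                                   # no instance settings at all
print(cfg["ProductionVariants"][0]["InstanceType"])  # hardware is your decision
```

Nothing in the Bedrock payload mentions hardware, while the SageMaker configuration cannot be written without it. That is the restaurant-versus-kitchen split in concrete terms.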

AWS Calculator Reality

Bedrock: usage-based pricing, billed per token.

SageMaker: hourly instance rates plus storage costs.

The Decision Matrix

Stop guessing. Here is the exact logic we use before recommending any AWS AI service.

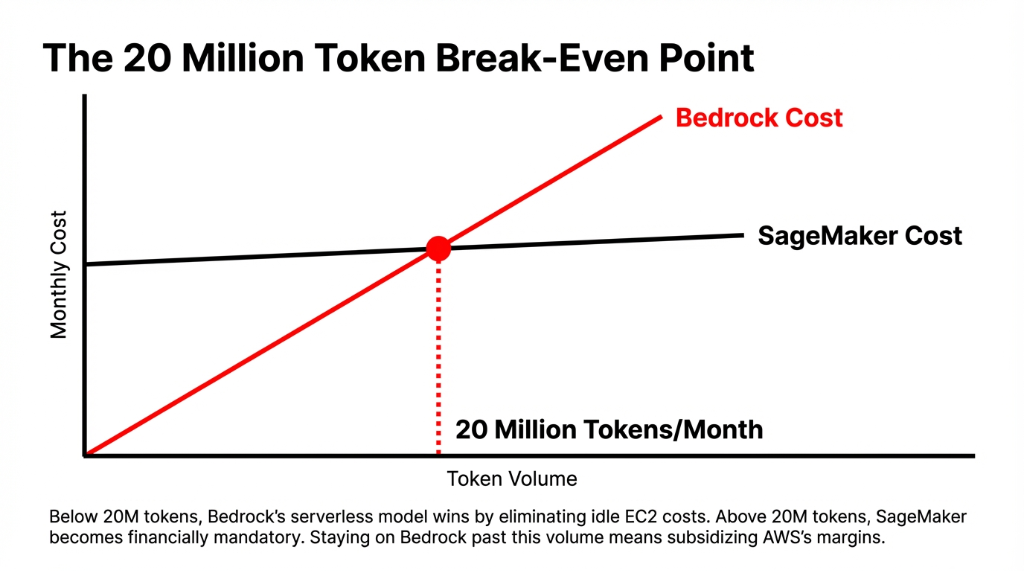

- Use Bedrock: When you need to ship in under 2 weeks, your monthly volume is under 10 million tokens, and you want zero server pricing surprises.

- Use SageMaker: When you exceed 20 million tokens/month, need full fine-tuning on proprietary data, and have engineers to manage AI infrastructure.
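The two rules above can be written as a small function. This is a sketch under assumptions: the function and parameter names are hypothetical, the "no pricing surprises" preference is left out because it is not a measurable input, and the fallback for workloads that match neither rule (model the workload on both platforms) is an added assumption.

```python
def recommend(tokens_per_month, weeks_to_ship,
              needs_full_fine_tuning, has_ml_engineers):
    """Encode the decision matrix; thresholds are the ones stated in the list."""
    # SageMaker: high volume, full fine-tuning, and a team to run it.
    if (tokens_per_month > 20_000_000
            and needs_full_fine_tuning
            and has_ml_engineers):
        return "SageMaker"
    # Bedrock: tight deadline and low volume.
    if tokens_per_month < 10_000_000 and weeks_to_ship < 2:
        return "Bedrock"
    # Neither rule applies cleanly (assumed fallback).
    return "model both"


print(recommend(5_000_000, 1, False, False))
print(recommend(50_000_000, 8, True, True))
print(recommend(15_000_000, 4, False, True))
```

The third call lands in the gap between 10 and 20 million tokens, where neither rule fires and a side-by-side cost model is the safer next step.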

A Dual-Service AI Architecture

The best setups use both. AWS made Bedrock intentionally easy to start and deliberately expensive to scale.

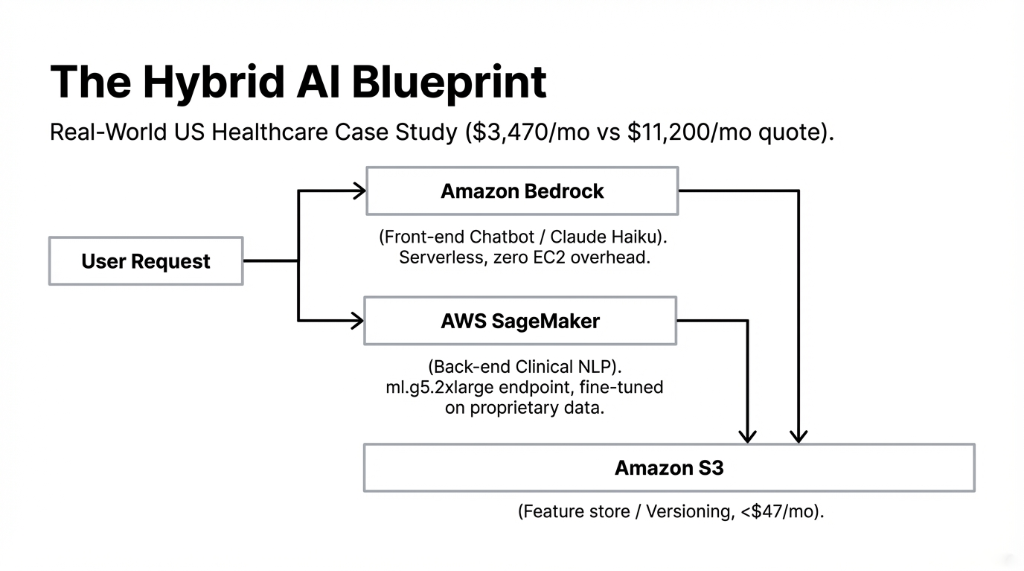

We deployed a hybrid architecture for a US healthcare company. Bedrock handles the front-end patient chatbot. SageMaker runs the back-end clinical NLP model on a dedicated instance with auto scaling.

Total AWS cost: $3,470/month. The competitor quoted $11,200/month using Bedrock for everything.
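A split like this usually comes down to a thin routing layer in front of the two services. The sketch below is illustrative only and is not the deployed system: the class name, the workload labels, and the stand-in backend functions are hypothetical. In a real service the stand-ins would wrap boto3's `bedrock-runtime` `invoke_model` and `sagemaker-runtime` `invoke_endpoint` calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class HybridRouter:
    """Send each workload type to the backend registered for it."""
    backends: Dict[str, Callable[[str], str]]

    def handle(self, workload: str, payload: str) -> str:
        try:
            backend = self.backends[workload]
        except KeyError:
            raise ValueError(f"no backend registered for {workload!r}") from None
        return backend(payload)


def call_bedrock_chat(text: str) -> str:
    # Stand-in for a Bedrock call (hypothetical helper).
    return f"[bedrock] {text}"


def call_sagemaker_nlp(text: str) -> str:
    # Stand-in for a SageMaker endpoint call (hypothetical helper).
    return f"[sagemaker] {text}"


router = HybridRouter({
    "patient_chat": call_bedrock_chat,   # bursty, conversational traffic
    "clinical_nlp": call_sagemaker_nlp,  # steady, custom-model traffic
})

print(router.handle("patient_chat", "When is my appointment?"))
print(router.handle("clinical_nlp", "Discharge summary text"))
```

Keeping the backends behind plain callables means a workload can be moved from one service to the other by changing one registry entry.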

AWS SageMaker Pricing (EC2-Based) Breakdown

| Component | Resource (Rate) | Monthly Cost |
|---|---|---|
| Real-Time Inference | ml.m5.large ($0.115/hr) | $82.80 |
| GPU Inference (Prod) | ml.g5.2xlarge ($1.52/hr) | ~$1,109.00 |
| Storage | 100 GB S3 | $2.30 |
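The monthly figures in the table follow from the hourly rates. In the sketch below, the hours per month are inferred rather than stated: 720 hours (30 days) reproduces the ml.m5.large figure, and about 730 hours (a year divided by 12) reproduces the ml.g5.2xlarge figure. The $0.023 per GB-month S3 rate is likewise an assumption implied by the storage row.

```python
def monthly_instance_cost(hourly_rate, hours_per_month, count=1):
    """Always-on endpoint cost: rate x hours x number of instances."""
    return hourly_rate * hours_per_month * count


def monthly_storage_cost(gigabytes, rate_per_gb_month=0.023):
    """S3 storage cost at an assumed per-GB monthly rate."""
    return gigabytes * rate_per_gb_month


m5_large = monthly_instance_cost(0.115, 720)            # assumed 30-day month
g5_2xlarge = monthly_instance_cost(1.52, 730)           # assumed 730-hour month
two_g5 = monthly_instance_cost(1.52, 730, count=2)      # two GPU instances
storage = monthly_storage_cost(100)

print(f"ml.m5.large:        ${m5_large:,.2f}")
print(f"ml.g5.2xlarge:      ${g5_2xlarge:,.2f}")
print(f"2 x ml.g5.2xlarge:  ${two_g5:,.2f}")
print(f"100 GB S3:          ${storage:,.2f}")
```

Two ml.g5.2xlarge instances come out within a couple of dollars of the roughly $2,218/month quoted earlier. The cost is fixed regardless of traffic, which is why utilization decides whether it beats per-token pricing.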

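You can cut SageMaker costs by 47-72% using Spot Instances. SageMaker's managed Spot support applies to training jobs rather than real-time endpoints, so the discount lands on training and fine-tuning. That is how you keep compute cost low without adding latency to the serving path.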

We model your workload against both platforms before we build. We do not recommend SageMaker when Bedrock is cheaper.

Stop Paying $19,400/Month

Every week we talk to US teams paying $12,000-$22,000/month in AWS AI costs that should be $3,000-$5,000. Book a free AWS AI Audit.

FAQs

Can I use both SageMaker and Bedrock from the same AWS account?

Yes. Most serious enterprise AI setups do exactly this. Bedrock handles fast generative AI services via API, while SageMaker manages custom model training and fine-tuning.

Is Bedrock always cheaper than SageMaker?

Not above 20 million tokens/month. At lower volumes, Bedrock's usage-based pricing wins. But at production scale, a properly sized SageMaker endpoint, with Spot Instances used for training, runs 40-70% cheaper.

Do I need ML engineers to use SageMaker effectively?

Yes. SageMaker handles the managed infrastructure layer, but you still need engineers who understand pipeline sizing and cost optimization. Without that expertise, you'll over-provision.

What is Provisioned Throughput in Bedrock, and when does it make sense?

It reserves dedicated model capacity for guaranteed speed, requiring a 1- or 6-month commitment. It makes sense for predictable, high-volume production traffic with strict SLA requirements.

Can I fine-tune models on my own data in Bedrock?

Bedrock supports lightweight fine-tuning, but it cannot match SageMaker's full fine-tuning capability. For deep domain adaptation on custom hardware, AWS SageMaker is the right platform.