The AI Adoption Numbers Look Good. The Execution Numbers Do Not.

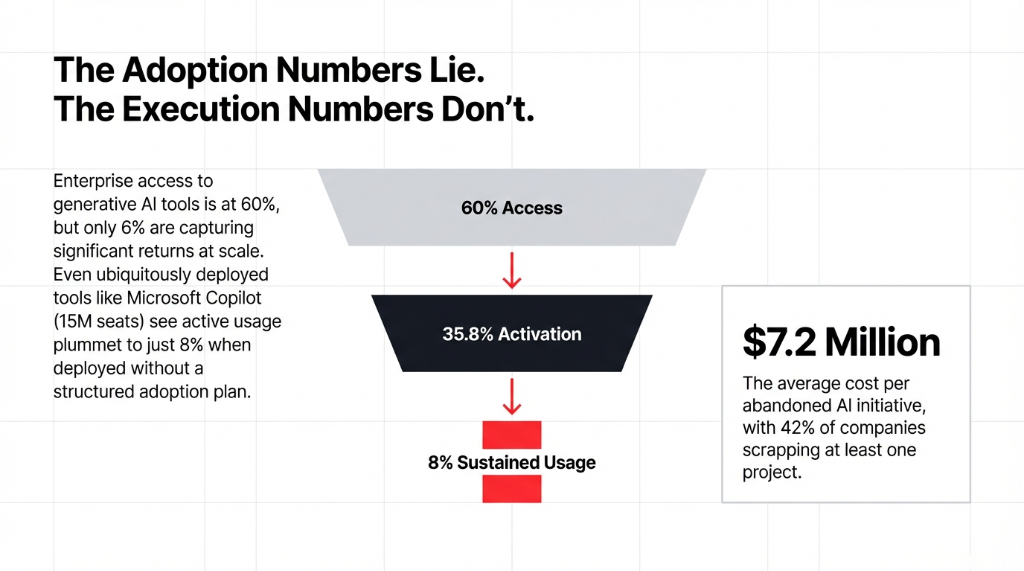

According to Deloitte's 2026 AI report, 60% of workers now have access to AI tools, and 75% report that AI improves the speed or quality of their work. On the surface, that sounds like the AI revolution is well underway.

Then you look at what McKinsey's AI research actually shows: only 6% of companies are capturing significant returns from generative AI at scale. The other 94% are paying for the promise, not the performance.

The Microsoft Copilot Reality Check

As of Q2 FY2026, Microsoft Copilot has reached 15 million paid M365 seats. User activation rate? 35.8%. When employees have simultaneous access to Copilot, ChatGPT, and Gemini, Copilot's active usage drops to just 8%.

That is not an indictment of Microsoft AI as a platform. That is an indictment of how most companies deploy AI tools without a structured adoption plan.

We audited a $16M US professional services firm in February. 53 Copilot licenses. No prompt library. No AI training. No defined use cases per role. Monthly spend: $4,770. Measurable productivity gains after 4 months: none that anyone could quantify.
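The audit figures reduce to simple arithmetic. Here is a minimal sketch using only the numbers quoted above; the function and variable names are illustrative, and a flat monthly cost is assumed.

```python
# Figures quoted in the audit above; names are illustrative.
LICENSES = 53
MONTHLY_SPEND_USD = 4_770
MONTHS_ELAPSED = 4


def cost_per_license(monthly_spend: float, licenses: int) -> float:
    """Monthly spend divided evenly across licenses."""
    return monthly_spend / licenses


def cumulative_spend(monthly_spend: float, months: int) -> float:
    """Total spend over the period, assuming a flat monthly cost."""
    return monthly_spend * months


per_license = cost_per_license(MONTHLY_SPEND_USD, LICENSES)
total = cumulative_spend(MONTHLY_SPEND_USD, MONTHS_ELAPSED)
print(f"Per license per month: ${per_license:,.2f}")
print(f"Spend over {MONTHS_ELAPSED} months: ${total:,.2f}")
```

With no quantified gain to set against it, the four-month total is pure cost.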

This is the dominant outcome of AI in companies right now. And it is entirely preventable.

What the 19.7% Did Differently: The 3 Patterns That Actually Worked

Pattern 1: They Embedded AI Into Business Systems — Not Just Employee Desktops

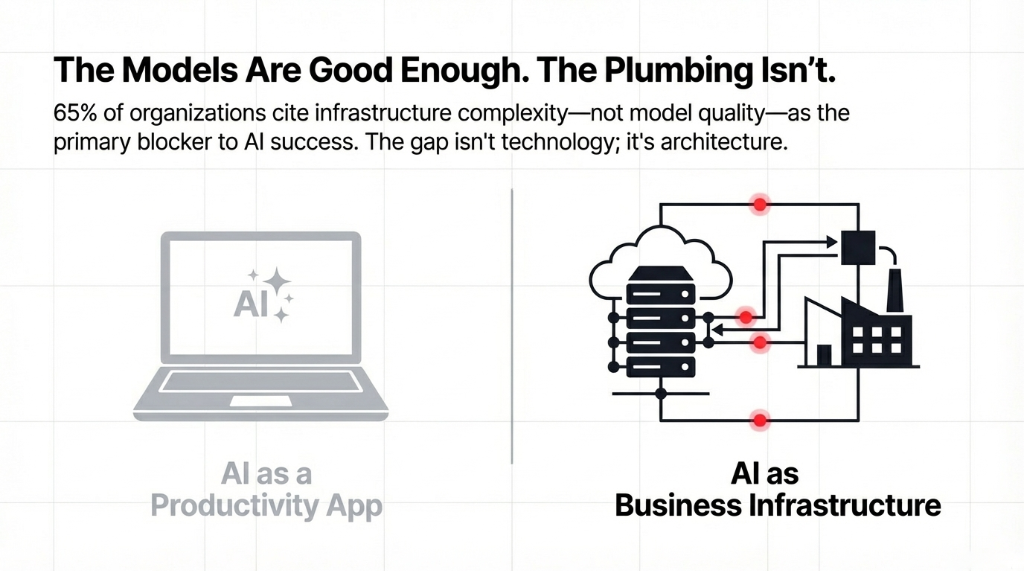

The biggest distinction we saw in Q1 between companies getting real results and companies running expensive pilots? The winners treated AI as infrastructure, not software.

When you are serious about AI integration, you are not handing employees a new AI tool and hoping productivity improves. You are connecting generative AI models directly to your CRM, your ERP, your support ticketing system, your finance workflows. You are building AI pipelines on AWS, Azure, or GCP that feed real-time business data into AI models and let those models act on what they find.
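As a rough illustration of "AI connected to the CRM" versus "AI on the desktop", the sketch below pulls new leads, asks a model for a summary, and writes the result back without a human copying anything. Everything here is hypothetical: `CrmClient`, `InMemoryCrm`, `sync_summaries`, and `fake_model` are stand-ins invented for this example, not the API of any real CRM or model provider.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass
class Lead:
    lead_id: str
    company: str
    notes: str


class CrmClient(Protocol):
    """Hypothetical interface; a real CRM SDK will look different."""

    def fetch_new_leads(self) -> list[Lead]: ...
    def attach_summary(self, lead_id: str, summary: str) -> None: ...


def sync_summaries(crm: CrmClient, summarize: Callable[[str], str]) -> int:
    """Pull new leads, summarize each, and write the result back to the CRM."""
    count = 0
    for lead in crm.fetch_new_leads():
        prompt = (
            "Summarize this lead for a sales rep.\n"
            f"Company: {lead.company}\n"
            f"Notes: {lead.notes}"
        )
        crm.attach_summary(lead.lead_id, summarize(prompt))
        count += 1
    return count


class InMemoryCrm:
    """Stand-in used only to exercise the sketch."""

    def __init__(self, leads: list[Lead]) -> None:
        self._leads = leads
        self.summaries: dict[str, str] = {}

    def fetch_new_leads(self) -> list[Lead]:
        # A lead counts as "new" until it has a summary attached.
        return [l for l in self._leads if l.lead_id not in self.summaries]

    def attach_summary(self, lead_id: str, summary: str) -> None:
        self.summaries[lead_id] = summary


def fake_model(prompt: str) -> str:
    # Placeholder for a real model call; echoes the company line of the prompt.
    return prompt.splitlines()[1]
```

The design point is that the model call sits inside the system of record's workflow, so the summary lands where the salesperson already works.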

Case Study: $41M B2B SaaS Company

Before the rebuild, the AI tool sat completely disconnected from the CRM. Salespeople were manually copying AI-generated summaries into Salesforce.

After we integrated their AI data pipeline directly into the CRM via API:

✓ Response time to new leads: dropped from 4.7 hours to 19 minutes

✓ Forecast accuracy improved by 31.4%

✓ Pipeline conversion rate improved by 11.3 points in eight weeks

The AI infrastructure gap is real. A DDN report from January 2026 confirmed that 65% of organizations cite infrastructure complexity — not model quality — as the primary blocker to AI success. The AI models are good enough. The plumbing is not.

Pattern 2: One Use Case, All the Way — Not Seven Use Cases Halfway

Here is the controversial opinion nobody on your leadership team wants to hear: the companies that tried to roll out AI across every department in Q1 failed. Among the clients we audited, there were no exceptions.

MIT estimates 95% of generative AI pilots fail to scale, primarily because organizations spread their focus, budget, and governance attention too thin.

The $1.3M Lesson From a Chicago Healthcare Tech Company

$29M company. Ran five simultaneous pilots: writing AI, customer service AI, finance AI, data analysis AI, and AI in marketing.

None had a dedicated owner. None had defined KPIs. None had AI testing protocols.

By March, three were quietly shelved. Total sunk cost: $1.3M in licensing, consulting, and staff time.

The Counter-Example: $55M Logistics Company, Texas

One use case only: customer support AI. Not using AI for everything — just support.

Deployed a custom GPT AI agent using LangChain, trained on their top 3,400 historical support tickets, ran 8 weeks of AI testing with a controlled user group, and went live in March.

Results:

Ticket resolution time: dropped from 9.1 hours to 44 minutes

Customer satisfaction scores: improved by 27 points

Ticket volume handled: 2.4x increase with zero headcount increase

AI for enterprise is not about how many tools you run — it is about how deep you go on the ones that matter.

Pattern 3: Governance Was the Architecture — Not an Afterthought

This is the one that most AI technology companies selling you platforms will never tell you.

The companies that got burned in Q1 were not running the wrong AI models. They were running the right models with no governance structure around them. No output monitoring. No version control. No human-in-the-loop checkpoints. No accountability chain when the AI data started producing errors.

The $1.2M Fintech Disaster We Documented

A fintech in New York — running finance AI for risk scoring — absorbed $1.2M in mispriced financial products because a generative AI model drifted in its output and nobody caught it for 11 weeks.

RAND Corporation confirms AI failure rates run up to 80% — nearly double the failure rate of non-AI IT projects — with misunderstood problem definition and inadequate data governance as systemic causes.

We caught a predictive AI model deviation for a client within 6 hours using drift alerts — before it touched a single live transaction.

The 3-Layer Governance Framework We Deploy

Layer 1: Drift Detection

Automated output monitoring with drift alerts. Model deviation caught within hours, not weeks. We configure this on every production deployment.
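One common way to implement this kind of alert is the Population Stability Index (PSI), which compares the distribution of recent model outputs against a baseline window. The sketch below is an assumption about how such a check could look, not a description of any specific production setup. The bin edges and the 0.25 alert threshold are illustrative; 0.25 is a frequently quoted rule of thumb and should be tuned per model.

```python
import math
from typing import Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Fraction of values per bin; values outside the edges go to the end bins."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        for i in range(len(edges) - 1):
            if v >= edges[i]:
                idx = i
        counts[idx] += 1
    return [c / len(values) for c in counts]


def psi(
    baseline: Sequence[float],
    current: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """Population Stability Index between two samples of model outputs."""
    b = histogram(baseline, edges)
    c = histogram(current, edges)
    # eps avoids log(0) when a bin is empty in one of the samples.
    return sum(
        (ci - bi) * math.log((ci + eps) / (bi + eps)) for bi, ci in zip(b, c)
    )


def drift_alert(
    baseline: Sequence[float],
    current: Sequence[float],
    edges: Sequence[float],
    threshold: float = 0.25,  # illustrative; tune per model
) -> bool:
    """True when the current window has drifted past the threshold."""
    return psi(baseline, current, edges) > threshold
```

Run on a schedule against each new window of outputs (hourly, for example), a check like this is what turns an 11-week blind spot into a same-day alert.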

Layer 2: Human Checkpoints

Human-in-the-loop approval gates for high-stakes decisions in finance AI and customer data workflows. Non-negotiable.
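A minimal sketch of an approval gate, assuming three illustrative routing rules: anything touching customer data, anything above a dollar limit, and anything the model is unsure about goes to a person. The `Decision` fields and both thresholds are hypothetical and would be set per workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Decision:
    decision_id: str
    amount_usd: float
    model_confidence: float  # 0.0 to 1.0
    touches_customer_data: bool


def route(
    decision: Decision,
    max_auto_amount_usd: float = 10_000.0,  # hypothetical limit
    min_confidence: float = 0.9,  # hypothetical limit
) -> Route:
    """Send a model decision to a human unless it clears every gate."""
    if decision.touches_customer_data:
        return Route.HUMAN_REVIEW
    if decision.amount_usd > max_auto_amount_usd:
        return Route.HUMAN_REVIEW
    if decision.model_confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE
```

The gate defaults to human review: a decision is automated only when every condition passes.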

Layer 3: Version Control

Full model versioning with complete audit logs. Every output traceable. Every decision documented. Adds 3-4 weeks to timeline — prevents $1.2M+ incidents.
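To make "every output traceable" concrete, here is a sketch of an append-only audit log in which each record carries the model version and a hash that chains it to the previous record, so later edits are detectable. The `AuditLog` class and its field names are assumptions for illustration; a production system would persist records to durable storage rather than a Python list.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload: dict) -> str:
    """Stable SHA-256 over a JSON-serializable record body."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditLog:
    """In-memory, hash-chained log of model inputs and outputs."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, model_version: str, inputs: dict, output: dict) -> dict:
        prev_hash = self.records[-1]["record_hash"] if self.records else ""
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "prev_hash": prev_hash,
        }
        record = {**body, "record_hash": _digest(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; False if any record was altered or reordered."""
        prev = ""
        for r in self.records:
            body = {k: v for k, v in r.items() if k != "record_hash"}
            if r["prev_hash"] != prev or r["record_hash"] != _digest(body):
                return False
            prev = r["record_hash"]
        return True
```

Because each record stores the model version alongside the inputs and output, any disputed decision can be traced to the exact model that produced it.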

Forrester puts it bluntly: by end of 2026, half of enterprise ERP vendors will launch autonomous governance modules combining explainable AI and real-time compliance monitoring. Companies that do not build this into their AI strategy now are going to be scrambling to retrofit it later — at significantly higher cost and after the damage is done.

The AI Tools That Actually Moved the Numbers in Q1

| Tool / Platform | What Worked | The Prerequisite |
|---|---|---|
| Microsoft Copilot | Content production time dropped 43% for marketing. Code review cycles shortened 29% for engineering. | 4-hour prompt library workshop per team with role-specific prompt templates. Without it, the tool underperformed. |
| GPT AI (OpenAI Enterprise) | Average handling time reduced 38% for first-response drafting and ticket classification. | Clean AI data pipeline feeding real-time CRM context. Not just API access. |
| Google Vertex AI | Most durable ROI for supply chain and demand forecasting. Returns: $1M–$15M+ at enterprise scale. | 12-24 months to break even. Not a quick win, but a structural advantage. |
| Edge AI | Decision latency cut from 340ms to under 12ms for real-time quality control. | A $94M auto parts manufacturer in Ohio: that latency gap is $6,200 per recall event avoided. |
| Apple Intelligence | On-device AI creating new touchpoints: customers researching and comparing before a human rep enters. | B2C brands must account for on-device AI in their customer experience stack. |

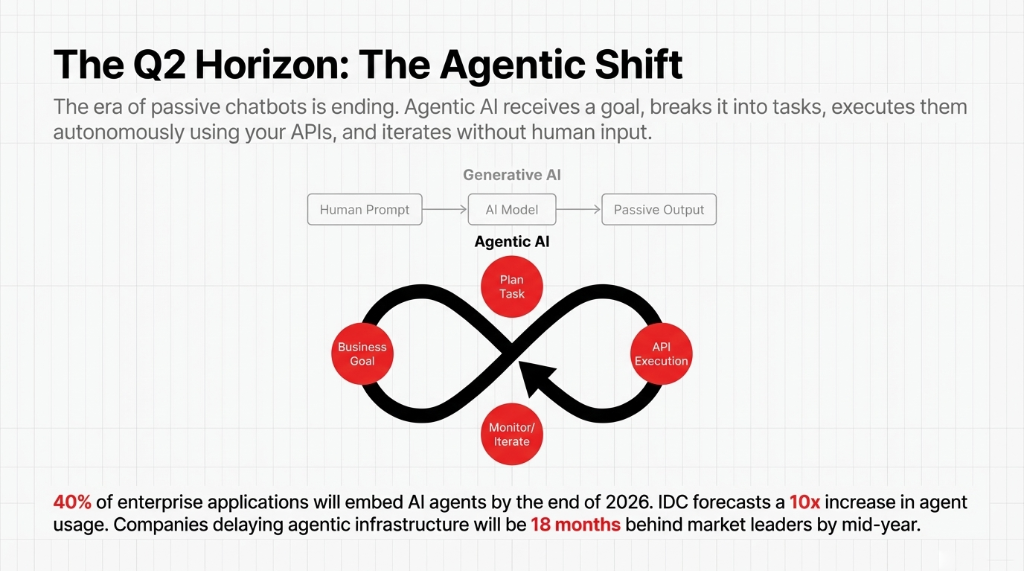

The Agentic AI Shift: What Q2 Looks Like If You Are Still Running Pilots

Gartner predicts that 40% of enterprise applications will embed AI agents by end of 2026 — up from less than 5% in 2025. Deloitte reports only 25% of organizations have piloted agentic systems so far, with that number expected to double by 2027.

Agentic AI — systems that receive a business goal, break it into tasks, execute them autonomously using APIs and tools, and iterate without constant human input — is where the next wave of enterprise AI ROI is coming from. IDC forecasts a 10x increase in AI agent usage by 2027, with compute demands increasing 1000x as inference scales.
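The goal, plan, execute, iterate loop described above can be sketched in a few lines of plain Python. This is a deliberately framework-free illustration: `run_agent`, `scripted_plan`, and the two tools are hypothetical, and the scripted planner stands in for what would be a model call in a real agent.

```python
from typing import Callable, Optional

Tool = Callable[[str], str]
# A planner looks at the goal and the history so far and returns the next
# (tool_name, argument) pair, or None when it considers the goal complete.
Planner = Callable[[str, list[str]], Optional[tuple[str, str]]]


def run_agent(
    goal: str,
    plan: Planner,
    tools: dict[str, Tool],
    max_steps: int = 5,
) -> list[str]:
    """Plan a step, execute it with a tool, record the result, repeat."""
    history: list[str] = []
    for _ in range(max_steps):  # hard cap so the loop cannot run away
        step = plan(goal, history)
        if step is None:
            break
        name, arg = step
        tool = tools.get(name)
        result = tool(arg) if tool else f"unknown tool: {name}"
        history.append(f"{name}({arg}) -> {result}")
    return history


def scripted_plan(goal: str, history: list[str]) -> Optional[tuple[str, str]]:
    # Stand-in for a model-driven planner: a fixed two-step script.
    script = [("lookup_order", "A-17"), ("draft_reply", "A-17")]
    return script[len(history)] if len(history) < len(script) else None


demo_tools: dict[str, Tool] = {
    "lookup_order": lambda order_id: f"order {order_id} shipped",
    "draft_reply": lambda order_id: f"reply drafted for {order_id}",
}

for line in run_agent("resolve ticket about order A-17", scripted_plan, demo_tools):
    print(line)
```

The step cap and the explicit tool registry are the two places where governance attaches: they bound what the agent can do and how long it can keep doing it.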

The 18-Month Gap That Is Opening Right Now

Organizations that have not started deploying agentic infrastructure by mid-2026 will be 18 months behind the market leaders — not 6, not 12. Eighteen.

What We Build at Braincuber

We use LangChain and CrewAI to build agentic AI frameworks that connect to your existing business systems — your Odoo ERP, your Shopify store, your CRM, your cloud infrastructure on AWS or Azure — and execute real operational workflows, not just generate content.

We create AI that works for your specific business model, not off-the-shelf demos.

The LinkedIn AI Moment Nobody Talks About

Every week on LinkedIn, AI posts go viral showing ChatGPT generating a marketing plan in 30 seconds or Copilot summarizing a meeting. We are not dismissing those productivity wins; they are real. But they show people learning about AI, not deploying it.

The McKinsey AI ROI Numbers

Average Return

For every $1 invested in gen AI, companies see an average return of $3.70. But that return goes to companies that deployed seriously — with integrated data pipelines and governance.

Financial Services

Leading all industries at 4.2x ROI. They invested in AI infrastructure first, tools second.

Media & Telecom

3.9x ROI. Enterprise AI spending hit $252.3 billion in 2024. Companies with no ROI to show are facing serious executive scrutiny.

AI in 2025 taught the enterprise world that proof of concept is not the same as production. Q1 2026 taught us that production is not the same as transformation. The AI trends for Q2 and beyond point to one thing: AI implementation needs to become a core operational competency — not a side project for the IT department.

If you are still asking "Where do we start?", you are behind the curve. Not because the question is wrong, but because your competitors stopped asking and started deploying 12 months ago.

FAQs

Why do most AI implementations in US companies fail?

RAND Corporation identifies five systemic causes: misunderstood problem definition, inadequate data quality, technology-first mentality, insufficient AI infrastructure, and underestimated problem complexity. Companies that define specific business outcomes before selecting AI tools succeed at 67% higher rates than those starting with the technology.

How long does it take to get ROI from AI in business?

Customer service AI: 2-6 months, $500K-$2M annual cost deflection. Data analytics AI: 6-12 months, 40-70% cost reduction. Supply chain AI: 12-24 months, $1M-$15M+ at enterprise scale. Define your ROI target before deployment, not after. Every day of vague success criteria costs you measurable dollars.

What is the difference between generative AI and agentic AI?

Generative AI produces content, analysis, or code when prompted — useful, but passive. Agentic AI receives a goal, plans steps, executes tasks using APIs, monitors its own progress, and iterates without constant human input. Gartner forecasts 40% of enterprise applications will embed agents by end of 2026.

What AI governance framework should we build before scaling?

Three layers: automated output monitoring with drift alerts (deviation caught in hours, not weeks), human-in-the-loop checkpoints for high-stakes finance and customer data decisions, and full model version control with audit logs. Adds 3-4 weeks to timeline. Prevents the $1.2M+ incidents we have documented without it.

What should a US company do first to start AI implementation?

Pick one use case with a measurable, time-bound outcome — not "use AI more" but "cut support resolution time from 9 hours to under 1 hour within 90 days." Audit data quality. Integrate via API into existing systems. Run 6-8 weeks of AI testing with a controlled group. Then scale. Vendor-led implementations succeed at ~67% vs ~33% for pure internal builds.

Stop Running Pilots Nobody Uses

Do not let Q2 look like Q1 did for 80% of enterprises. Book a free 15-Minute AI Implementation Audit with Braincuber — we will identify your highest-ROI AI use case in the first call and give you a production roadmap, not a slide deck. 500+ projects. 4+ years. 40-60% cost reduction via AI.