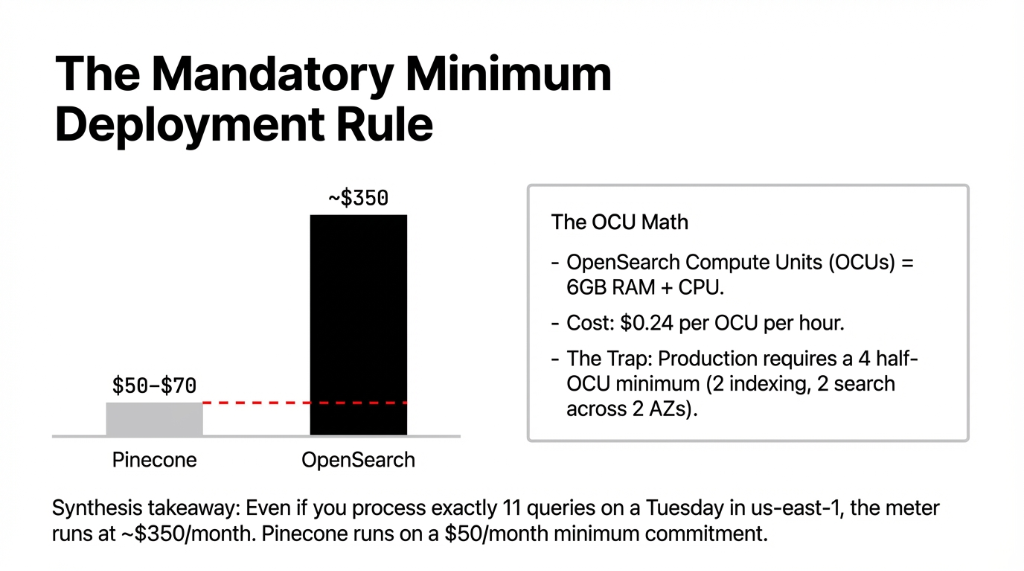

Let's talk about what OpenSearch Serverless pricing actually looks like in production, because the AWS documentation buries this detail. Amazon OpenSearch Serverless charges by OpenSearch Compute Units (OCUs).

One OCU costs $0.24 per hour. That sounds manageable until you hit the minimum deployment rule: production requires at least four half-OCUs, which is 2 OCUs in total. At roughly 730 hours per month, that puts the true starting floor at ~$350/month in AWS us-east-1 before your app processes a single real query.
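The floor is plain arithmetic, sketched here in Python. The 730 hours per month is an assumption (8,760 hours a year divided by 12) and the function name is only illustrative; the hourly rate and the four half-OCU minimum are the figures quoted above.

```python
OCU_HOURLY_USD = 0.24   # per-OCU hourly rate quoted in this article
HOURS_PER_MONTH = 730   # assumption: 8,760 hours per year / 12 months


def monthly_floor_usd(half_ocus: int = 4,
                      hourly_rate: float = OCU_HOURLY_USD,
                      hours: int = HOURS_PER_MONTH) -> float:
    """Return the minimum monthly spend for a given number of half-OCUs."""
    ocus = half_ocus * 0.5          # four half-OCUs = 2 full OCUs
    return round(ocus * hourly_rate * hours, 2)


floor = monthly_floor_usd()
print(f"${floor:,.2f}/month")
```

Swap in the rate for your own region before relying on the result.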

Pinecone, purchased through the AWS Marketplace, carries a $50/month minimum commitment. That gap in fixed cost translates directly into early-scale costs.

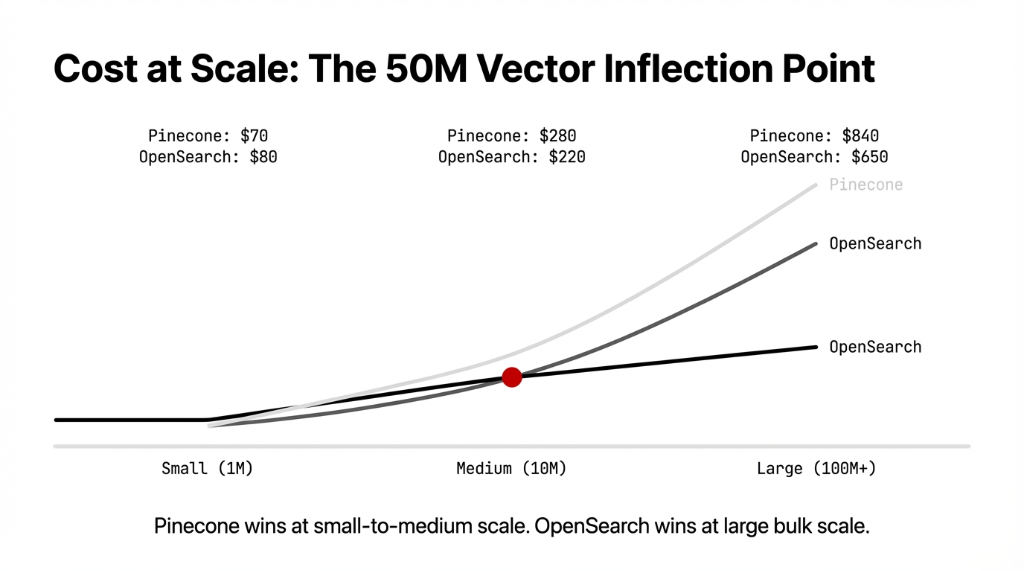

The flip: Pinecone wins at small scale. OpenSearch wins at large scale. Most teams building AWS AI workloads are in the 1–10M vector range, quietly overpaying for OpenSearch's mandatory compute requirements.

Why "Just Use OpenSearch" Is Bad Advice

We hear this constantly: "We are already using Amazon OpenSearch Service for log analytics, so we'll just flip on vector search." That works until your semantic search latency hits 40ms p99.

Latency Reality Checks

OpenSearch Serverless: 20–45ms (uses eventual consistency on index writes).

Pinecone: consistent 7ms p99 (built natively for fresh indexing).

For one US-based e-commerce client, OpenSearch-based results returned in 38ms. Moving to Pinecone dropped that to 8ms. Conversion rose 14.3% in 47 days.
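If you want to run this comparison on your own workload, compute p99 the same way for both systems. The sketch below uses the nearest-rank method on a list of per-query latencies you have already collected; the function name and the sample values are hypothetical, not measurements from either service.

```python
import math


def percentile(samples_ms, pct):
    """Nearest-rank percentile of a list of latency samples, in milliseconds."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    # Nearest-rank: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Synthetic samples for illustration only: 1ms, 2ms, ..., 100ms.
synthetic = list(range(1, 101))
print("p50:", percentile(synthetic, 50), "ms")
print("p99:", percentile(synthetic, 99), "ms")
```

Collect enough samples that the tail is meaningful. With only a few dozen queries, p99 is effectively just the slowest request.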

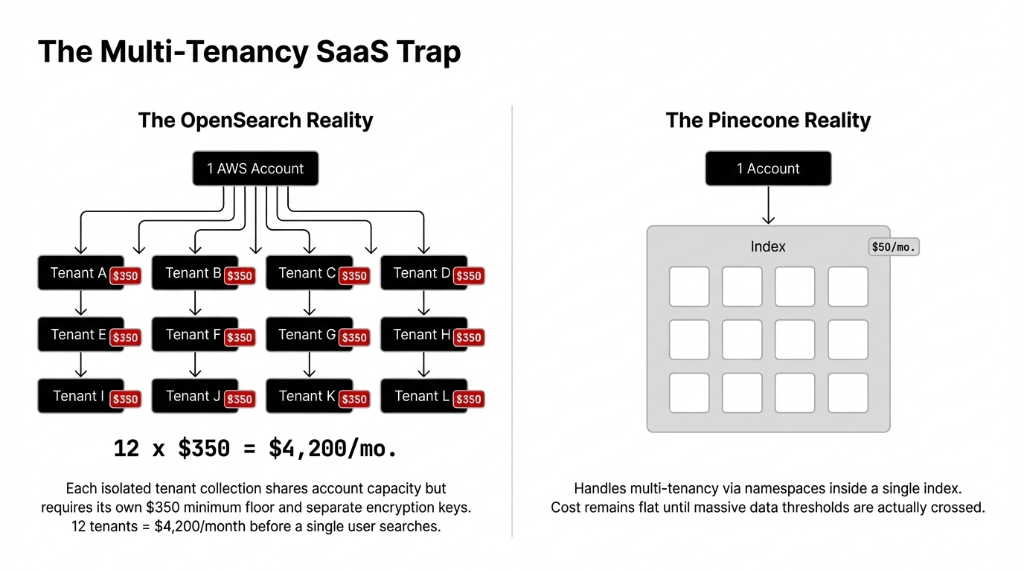

The Multi-Tenancy SaaS Trap

Here is an issue destroying AI cost projections: multi-tenancy on OpenSearch Serverless.

Tenant collections can share OCU capacity only within the same collection group, so each isolated tenant collection carries its own $350/month floor. Building B2B SaaS with 12 isolated collections pushes your minimum spend to 12 × $350 = $4,200/month immediately. Pinecone namespaces isolate tenants inside a single index without raising the flat baseline cost.
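To see how quickly the floor grows with tenant count, here is a rough Python sketch. It uses only the two figures quoted in this article ($350/month per isolated collection, $50/month Pinecone minimum) and deliberately ignores usage-based charges on both sides; the function names are illustrative.

```python
OPENSEARCH_FLOOR_USD = 350   # monthly floor per isolated collection (from this article)
PINECONE_MINIMUM_USD = 50    # monthly minimum commitment (from this article)


def opensearch_min_spend(isolated_collections: int) -> int:
    """Minimum monthly spend when every tenant has its own isolated collection."""
    if isolated_collections < 0:
        raise ValueError("collection count cannot be negative")
    return isolated_collections * OPENSEARCH_FLOOR_USD


def pinecone_min_spend(tenants: int) -> int:
    """Baseline stays flat: tenants are namespaces inside one index.

    Usage-based charges are not modelled here.
    """
    return PINECONE_MINIMUM_USD


for tenants in (1, 4, 12):
    print(f"{tenants:>2} tenants: OpenSearch ${opensearch_min_spend(tenants):,} "
          f"vs Pinecone ${pinecone_min_spend(tenants):,} (baseline only)")
```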

The scaling ramp on AWS also differs. OpenSearch Serverless takes 5–10 minutes to ramp up from a cold start. Pinecone's serverless tier on AWS scales in under 30 seconds.

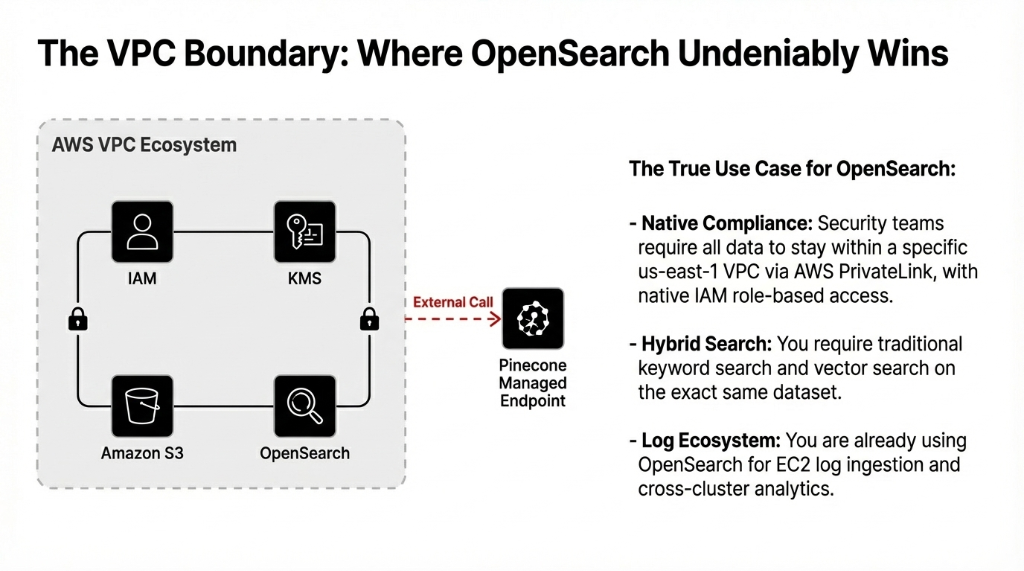

Where OpenSearch Actually Wins

We don't recommend a single DB to every team. That is lazy consulting. OpenSearch is the correct choice if your compliance team demands a closed network loop.

In a fully managed hybrid workload requiring native AWS IAM policies, AWS KMS keys, and AWS PrivateLink within a VPC boundary, OpenSearch simply fits. Pinecone's managed endpoints require outbound routing.

Additionally, above 50M vectors the economics invert: OpenSearch delivers better raw throughput per dollar, provided your latency requirements aren't extreme.

Are You Bleeding AI Budget?

This isn't a technical decision. It is a financial architecture decision. Book a free 30-Minute Vector DB Architecture Audit, and we'll tell you how much you are overspending today.

FAQs

Is OpenSearch Serverless cheaper than Pinecone on AWS?

It depends on scale. For workloads under 10M vectors, Pinecone runs cheaper: about $70–280/month vs. OpenSearch's $350/month minimum floor. Above 100M vectors, OpenSearch's OCU economics bring the cost to ~$650/month vs. Pinecone's $840/month.

Can Pinecone be used inside a private AWS VPC like OpenSearch Serverless?

Not natively. Pinecone runs as a fully managed service with a managed endpoint outside your VPC. With OpenSearch Serverless, you can use AWS PrivateLink to keep all traffic internal.

What is the query latency difference between Pinecone and OpenSearch Serverless?

Pinecone delivers consistent 7ms p99 latency. Amazon OpenSearch Serverless latency is variable, typically 20–45ms depending on OCU allocation, data volume, and whether the index is hot or cold.

Does Pinecone support AWS IAM and KMS encryption?

Pinecone supports standard AWS billing consolidation, but native AWS IAM role-based access and customer-managed KMS keys integrate more tightly with Amazon OpenSearch Service out of the box.

How does multi-tenancy affect cost on OpenSearch Serverless vs Pinecone?

OpenSearch Serverless charges a $350/month minimum per collection group. For a B2B SaaS product with 12 isolated tenant collections, that floor becomes $4,200/month. Pinecone uses namespace-based multi-tenancy inside a single index, keeping the baseline cost flat.
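As a rough illustration of namespace-based tenancy, the helpers below route every read and write through a per-tenant namespace. This is a minimal sketch, assuming `index` is an `Index` object from the Pinecone Python client, whose `upsert` and `query` methods accept a `namespace` argument; the helper names and the tenant ID scheme are hypothetical.

```python
def upsert_for_tenant(index, tenant_id, vectors):
    """Write vectors into the namespace that belongs to one tenant.

    `vectors` is a list of (id, values) tuples, as accepted by Index.upsert.
    """
    if not tenant_id:
        raise ValueError("tenant_id must be a non-empty string")
    return index.upsert(vectors=vectors, namespace=tenant_id)


def query_for_tenant(index, tenant_id, embedding, top_k=5):
    """Search only within one tenant's namespace, never across tenants."""
    if not tenant_id:
        raise ValueError("tenant_id must be a non-empty string")
    return index.query(vector=embedding, top_k=top_k, namespace=tenant_id)
```

Keeping the namespace argument inside two small helpers, rather than scattered across call sites, makes it harder for one tenant's query to run against another tenant's data by accident.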