Your company bought the OpenAI enterprise license. Your IT team completed the sign-up. You even posted an internal memo titled "Our AI Journey."

Six months later, 88% of your employees are using it only to summarize emails. That is not an AI problem. That is a people problem. And it is costing you more than you think.

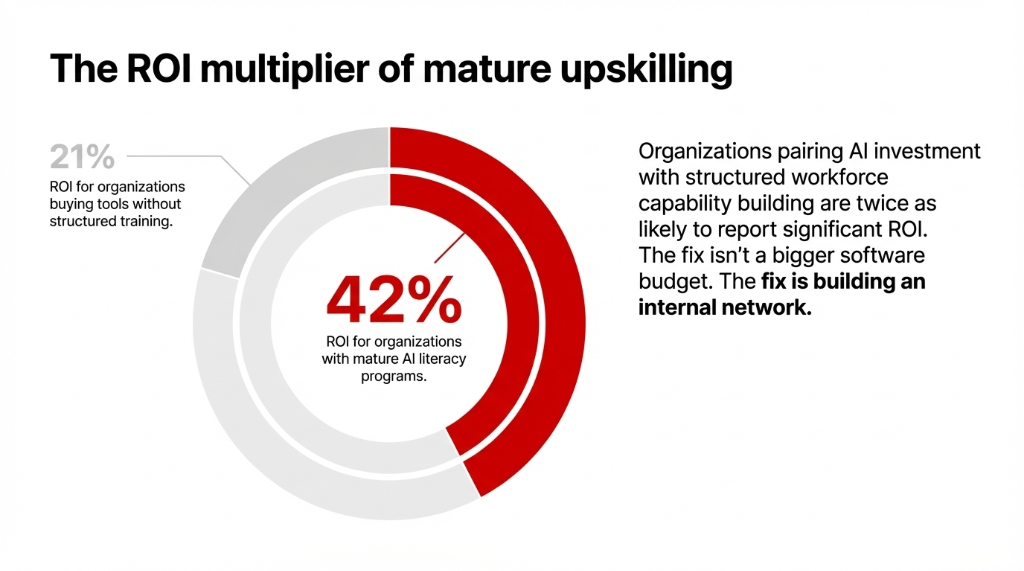

According to EY's 2025 Work Reimagined Survey — covering 15,000 employees across 29 countries — companies are missing up to 40% of their potential AI productivity gains because their workforce does not know how to use AI for work beyond basic tasks. Meanwhile, DataCamp's 2026 research found that organizations with a mature AI literacy upskilling program are nearly twice as likely to report significant AI ROI — 42% vs. 21% for everyone else.

The fix is not more OpenAI tools or a bigger plan budget. The fix is building internal AI champions who own, spread, and scale AI adoption from the inside. Here is exactly how we have seen it done — and what separates the organizations who crack it from the ones who keep funding expensive proofs of concept that go nowhere.

Why Most "AI Rollouts" Quietly Die

78% of US companies already use AI in at least one business function. But only 26% have the internal capability to move beyond pilot projects and generate measurable value at scale, according to Boston Consulting Group.

Here is the ugly truth: most organizations treat AI adoption like a software deployment. They get the OpenAI login set up, they run a 90-minute "How to Use ChatGPT" lunch session, and then they check the "AI training" box on the quarterly report.

That is not AI literacy. That is theater.

The Shadow AI Nightmare

We have worked with organizations across the US and UK where employees were using AI tools daily — but producing outputs they could have generated in Excel. The issue was not access to the OpenAI tool. It was that nobody internally owned the process of teaching teams what AI use cases actually matter for their specific role and workflow.

41% of Millennial and Gen Z employees admit to quietly sabotaging their company's AI strategy when they don't trust the process (Writer, 2025).

23% to 58% of employees across sectors are bringing their own shadow AI solutions to work instead of using firm-approved AI resources — creating data governance nightmares (EY).

You do not fix this with another vendor demo. You fix it with internal champions.

What an AI Champion Actually Does (It Is Not What HR Thinks)

An internal AI champion is not your most tech-savvy developer. It is not the person who signed up for every OpenAI GPT beta waitlist. And it is definitely not someone handed a PowerPoint deck and told to "spread enthusiasm."

What a Real AI Champion Does

Knows Real Use Cases

Identifies the specific AI use cases that save their department 3.5+ hours per week — not theoretical scenarios from vendor decks.

Has Peer Trust

Has the trust of their peers — not just the authority of a title. Credibility comes from being in the trenches, not from a LinkedIn certification badge.

Translates Between Worlds

Translates between what the OpenAI team promises and what a sales manager or finance analyst actually needs. Speaks both languages.

Documents and Scales

Tracks what works, documents it, and creates repeatable processes using practical AI. No documentation = no scale.

Think of them as the connective tissue between your OpenAI organization strategy at the executive level and the day-to-day reality of your teams' AI workflows.

Citi: The Proof That Champion Networks Work at Scale

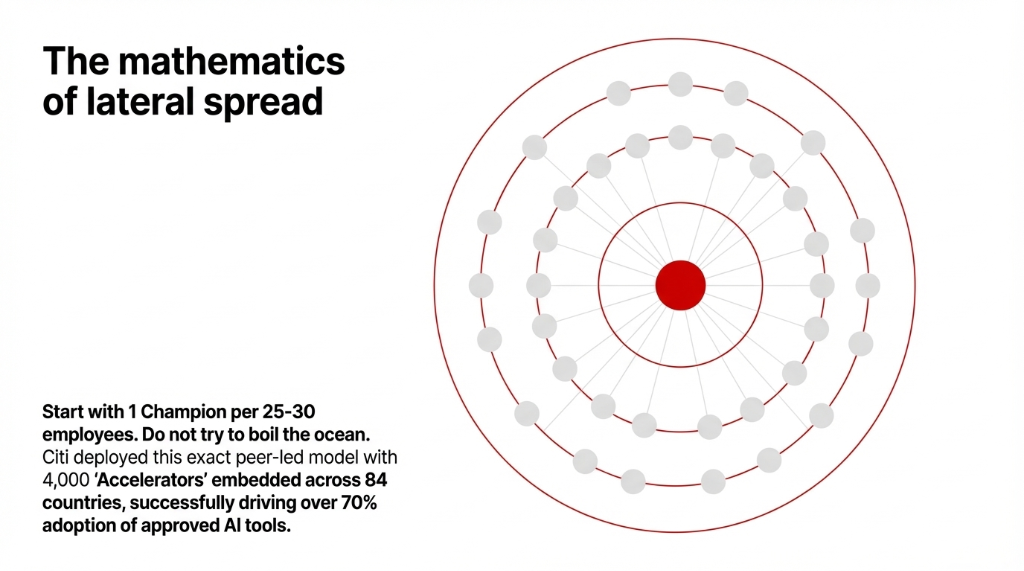

Citi built a network of 4,000+ AI Accelerators (peer champions embedded across 182,000 employees in 84 countries) and hit over 70% adoption of firm-approved AI tools.

That number is extraordinary for a regulated financial institution. The reason it worked was pairing peer-led champion networks with clear governance, approved AI use cases, and explicit data boundaries.

The 5-Step Framework to Build Your Own AI Champion Network

We are not going to tell you to "create a culture of AI." That means nothing. Here is what actually works:

Step 1: Identify the Right People — Not the Obvious Ones

Stop defaulting to IT. The best champions come from operations, customer support, finance, and HR — departments where manual, repetitive work is bleeding time and money.

Look for people who already use AI tools informally. Who in your Slack workspace has been quietly asking "how do I use AI for this?" and sharing answers with colleagues? That person is your champion. They already have peer credibility, and they are already driving organic AI adoption without a budget.

Start with 1 champion per 25-30 employees in your first cohort. Do not try to boil the ocean.
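To make the sizing rule concrete, here is a minimal sketch of the arithmetic. The function names are ours, and rounding up (so no group of employees is left without a champion) is an assumption rather than part of the rule itself.

```python
import math


def champions_needed(headcount: int, employees_per_champion: int) -> int:
    """Champions required at a given ratio, rounded up (our assumption)."""
    if headcount <= 0 or employees_per_champion <= 0:
        raise ValueError("headcount and ratio must be positive")
    return math.ceil(headcount / employees_per_champion)


def first_cohort_range(headcount: int, low_ratio: int = 25, high_ratio: int = 30) -> tuple[int, int]:
    """(min, max) champions for the 1-per-25-to-30 starting ratio."""
    # More employees per champion means fewer champions, so the high ratio gives the minimum.
    return (
        champions_needed(headcount, high_ratio),
        champions_needed(headcount, low_ratio),
    )


fewest, most = first_cohort_range(200)
print(f"First cohort for 200 employees: {fewest}-{most} champions")
```

For a 200-person company this gives 7 to 8 champions in the first cohort. Calling `champions_needed(200, 15)` shows what the denser 1-per-15 ratio would require once the first cohort has proven itself.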

Step 2: Give Them a Real Curriculum — Not a 60-Minute Webinar

Here is what kills champion programs: zero structure. You need to build AI literacy in a structured way. Champions need to know:

The Champion Curriculum Checklist

▸ How to write prompts that generate usable AI outputs, not just casual ChatGPT chatting

▸ Which specific OpenAI use cases apply to their department (customer support vs. finance vs. ops have completely different needs)

▸ How to evaluate AI output quality before sharing it as their own work

▸ The governance guardrails — what data can and cannot be fed into any OpenAI tool

Organizations pairing AI investment with structured workforce capability building are 2x more likely to see significant ROI. Spend the $14,000 on a real curriculum. Do not rely on a vendor's OpenAI learning portal and hope for the best.

Step 3: Give Champions Actual Authority — Not Just a Title

If your AI champion cannot greenlight a 2-week experiment without three levels of approval, they are useless. Champions need the ability to:

Champion Authority Requirements

▸ Run a 30-day AI use case pilot within their department

▸ Access your OpenAI organization dashboard to monitor usage and identify gaps

▸ Present findings directly to a VP-level sponsor quarterly

The Authority Gap That Kills Programs

Companies with a clearly defined AI strategy report success rates of 80% vs. 37% for those without one (BCG). The difference is ownership and authority — not enthusiasm.

Step 4: Build the Internal Network — Not Just Isolated Individuals

Champions working in silos create islands of AI adoption instead of organization-wide momentum.

Create a Teams channel or Slack workspace specifically for champions to share wins, failures, prompts that work, and AI use cases that actually saved time this week. High-adoption organizations (those where 50%+ of employees use AI tools) deploy AI across 7 or more internal use cases on average. They did not get there by having one brilliant person. They built network structures where knowledge about how to use AI spread laterally.

Run a monthly "Champion Showcase" — 20 minutes where two champions demo how they used AI for work this month. Productivity gains of 15-30% are achievable once teams see real OpenAI examples, not vendor case studies.

Step 5: Track It — Or It Does Not Exist

What gets measured actually scales. Track:

| Metric | Source | Healthy Benchmark |
|---|---|---|
| Active users per champion's dept | OpenAI tool admin dashboard (weekly) | 47%+ active weekly usage |
| Time saved per task category | Champion self-reports + manager validation | 3.5+ hours/week per user |
| Adoption rate by department | Usage analytics cross-referenced | 50%+ active = healthy |
| Output quality score | Manual revision frequency tracking | Used without major revision 70%+ |

If you do not track this, your OpenAI team will tell you adoption is fine. It probably is not.
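If you want to automate the check, a rough sketch follows. It compares one department's numbers against the benchmarks in the table above. The field names and the sample figures are hypothetical; how you pull the real values out of your dashboard or self-reports is up to you.

```python
from dataclasses import dataclass

# Thresholds copied from the benchmark table; the key names are our own.
BENCHMARKS = {
    "weekly_active_rate": 0.47,      # share of the dept active each week
    "hours_saved_per_user": 3.5,     # hours saved per user per week
    "adoption_rate": 0.50,           # share of the dept using AI tools at all
    "unrevised_output_rate": 0.70,   # outputs used without major revision
}


@dataclass
class DepartmentMetrics:
    name: str
    weekly_active_rate: float
    hours_saved_per_user: float
    adoption_rate: float
    unrevised_output_rate: float


def below_benchmark(metrics: DepartmentMetrics) -> list[str]:
    """Return the names of the metrics that miss their benchmark."""
    return [
        key for key, threshold in BENCHMARKS.items()
        if getattr(metrics, key) < threshold
    ]


# Made-up figures for illustration only.
finance = DepartmentMetrics("Finance", 0.52, 2.0, 0.55, 0.74)
print(finance.name, "misses:", below_benchmark(finance))
```

In this made-up case Finance clears every bar except time saved, which is the kind of gap a champion can take to their VP-level sponsor.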

The Mistake That Kills 7 Out of 10 Champion Programs

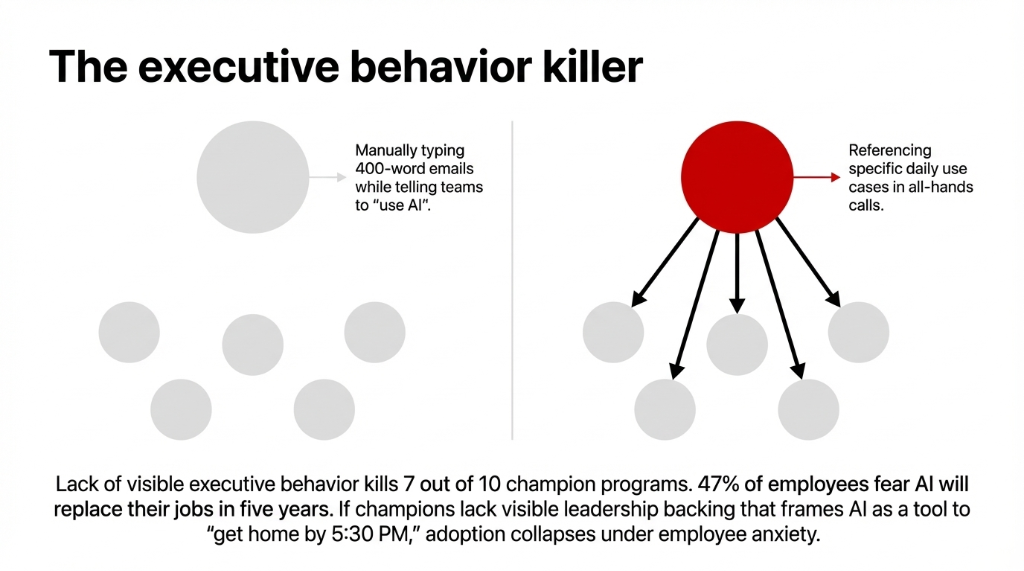

Here is an insider truth: the biggest killer is not resistance to AI — it is lack of visible executive behavior.

When the CEO still manually types 400-word meeting summaries into emails while telling employees to "use AI," no champion program survives it. Champions need to see their own leadership actively using AI at work, referencing OpenAI examples in all-hands calls, and being specific about which use cases they personally apply AI to every day.

The Fear Factor You Cannot Ignore

Fears around AI-driven job displacement nearly doubled in 2025 vs. the prior year (KPMG). And 47% of employees fear AI may replace their jobs in the next five years.

If champions cannot point to visible executive support AND a credible "this is how AI helps you, not replaces you" message, adoption collapses under employee anxiety.

The OpenAI public narrative focuses on building, creating, and generating with AI. Your internal narrative needs to focus on "here is how to use AI to get home by 5:30 PM on Friday."

What "Ready" Actually Looks Like

When your champion program is working, you will see three things in your data:

The 3 Signals of a Working Champion Program

1. Department-level AI adoption rates crossing 47%+ active weekly usage within 90 days of champion deployment.

2. Shadow AI (employees using personal ChatGPT accounts on company devices) dropping by at least 30% as firm-approved OpenAI tools become preferred.

3. At least 3 documented AI use cases per department that produce measurable time or cost savings.
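The three signals above can be checked with a few lines of code. This is only a sketch under our own assumptions: the parameter names are hypothetical, and shadow AI is measured here as a head count of employees using personal accounts before and after the program, which is one of several ways you could measure it.

```python
def shadow_ai_drop(baseline_users: int, current_users: int) -> float:
    """Relative drop in shadow AI users since the program started."""
    if baseline_users <= 0:
        raise ValueError("baseline_users must be positive")
    return (baseline_users - current_users) / baseline_users


def program_signals(
    weekly_active_rate: float,
    days_since_launch: int,
    shadow_baseline: int,
    shadow_current: int,
    use_cases_by_dept: dict[str, int],
) -> dict[str, bool]:
    """Evaluate each of the three signals and report pass/fail per signal."""
    return {
        # Signal 1: 47%+ active weekly usage within 90 days of deployment
        "adoption": weekly_active_rate >= 0.47 and days_since_launch <= 90,
        # Signal 2: shadow AI down by at least 30%
        "shadow_ai": shadow_ai_drop(shadow_baseline, shadow_current) >= 0.30,
        # Signal 3: at least 3 documented use cases in every department
        "use_cases": all(count >= 3 for count in use_cases_by_dept.values()),
    }


# Illustrative numbers only, not real data.
signals = program_signals(
    weekly_active_rate=0.49,
    days_since_launch=75,
    shadow_baseline=40,
    shadow_current=26,
    use_cases_by_dept={"Finance": 3, "Support": 2},
)
print(signals)
```

In this example the first two signals pass, and the third fails because Support has only two documented use cases so far.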

AI-literate employees are expected to become up to 20% more productive once they have proper training and internal support structures. That is not a marketing number. That is the ROI your board should be tracking instead of "number of OpenAI accounts provisioned."

The goal is not to create your own AI factory overnight. The goal is to create conditions where your people can build their own AI-powered workflows, one champion, one department, one AI use case at a time. That is how practical AI scales inside real organizations.

Frequently Asked Questions

How many AI champions does an organization of 200 employees need?

Start with 7-8 champions — roughly 1 per 25-30 employees, distributed across key departments (sales, ops, finance, support, HR). Scale to 1 per 15 employees once your first cohort proves ROI and you have documented AI use cases to replicate.

How long does it take to see measurable AI adoption results from a champion program?

Most organizations see meaningful AI adoption movement within 60-90 days of launching a structured champion program. Citi's 4,000-champion network achieved 70%+ adoption over 12-18 months. Your first 90-day benchmark should be 40-50% active usage in champion-led departments.

Do champions need technical skills to use AI tools effectively?

No. Champions need strong peer credibility, communication skills, and enough AI literacy to demonstrate practical AI use cases relevant to their team. Technical depth is the job of your IT or AI ops team — champions are translators and advocates, not engineers.

What is the ROI of investing in internal AI literacy vs. just buying more AI tools?

Organizations with mature AI literacy upskilling programs report significant positive AI ROI at a rate of 42%, compared to 21% for everyone else. In other words, they are about twice as likely to see significant ROI. That is a difference in likelihood, not a 2x multiplier on the returns themselves.

How do we prevent AI champions from becoming overloaded on top of their day jobs?

Cap champion responsibilities at 3-4 hours per week maximum. Compensate them — either through reduced core workload, recognition in performance reviews, or a quarterly stipend ($500-$1,200/quarter works well for mid-size US companies). Champions who feel burned out become the loudest internal critics of your AI adoption program.

Stop waiting for a top-down AI transformation that never arrives.

Book a free 15-Minute AI Adoption Audit with Braincuber — we will identify exactly where your organization is losing productivity, which departments are ready for AI champions today, and what your first 3 AI use cases should be.