How Long Does AI Actually Take to Implement?

Let us anchor this with realistic ranges for US businesses that want to use AI in production, not just play with an AI app in a browser tab.

AI Implementation Timelines by Company Size

US SMBs

AI chatbot / customer service: 2-4 weeks if you reuse proven AI software and plug it into your helpdesk, CRM, or Shopify store.

Workflow automation: 6-12 weeks when you connect AI to order processing, invoicing, or ticket triage.

AI data analysis dashboards: 4-8 weeks for an AI dashboard that sits on top of your core data and answers real questions.

Mid-Market Brands

Cross-department automation: 12-24 weeks to design the AI blueprint, integrate multiple systems, and meet basic compliance rules.

Custom AI for your data: 12-24 weeks to train, test, and deploy models on your own history, pricing, and customer behavior. This is where project management AI and proper AI planning start to matter.

Large Enterprises

Phased implementation: 12-18 months from strategy through data, pilots, to scaling. Nobody is "just installing an AI app."

Full transformation: 18-36 months with multiple business units. If your CIO is promising a full "AI in the enterprise" rollout in a quarter, you either have a tiny scope or a future post-mortem.

What Actually Drives Your AI Timeline?

The model is rarely the bottleneck. The delay lives in your data, integrations, and decision-making.

The 5 Timeline Killers (In Order of Damage)

1. Scope creep: Starting with "we want AI for work everywhere" instead of one sharp use case. Every extra use case adds 4-8 weeks.

2. Data chaos: Ten versions of the truth, no clear AI data pipeline, no owner for data analysis. A simple customer lookup becomes complex work once your CRM, billing, and warehouse all disagree on who the customer is.

3. Integration complexity: Old ERPs, half-documented APIs, and "that one vendor" who still runs on FTP. Every undocumented integration adds 2-3 weeks.

4. Compliance and policy: An AI policy and internal AI rules that nobody has written yet. Legal blocks the rollout at the last minute because nobody asked them at the start.

5. Change management: Training an AI is easy. Training people to trust and use AI optimization is the hard part. We have seen 6-week technical implementations take 16 weeks because nobody prepared the humans.

When we do an AI check at the start — data audit, process mapping, regulation review — we usually shave 4-6 weeks off the back end because we catch the landmines early.
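To make the arithmetic concrete, here is a rough sketch of how the per-item delays quoted above stack onto a base range. The `WeekRange` type and `estimate_weeks` function are names we made up for illustration, and the sketch assumes delays add up linearly, which real projects only approximate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeekRange:
    low: int
    high: int

    def __add__(self, other: "WeekRange") -> "WeekRange":
        return WeekRange(self.low + other.low, self.high + other.high)

    def scale(self, n: int) -> "WeekRange":
        return WeekRange(self.low * n, self.high * n)


# Per-item delays quoted in the list above.
PER_EXTRA_USE_CASE = WeekRange(4, 8)
PER_UNDOCUMENTED_INTEGRATION = WeekRange(2, 3)


def estimate_weeks(base: WeekRange, extra_use_cases: int,
                   undocumented_integrations: int) -> WeekRange:
    """Add per-item delays to a base range (assumes delays stack linearly)."""
    return (
        base
        + PER_EXTRA_USE_CASE.scale(extra_use_cases)
        + PER_UNDOCUMENTED_INTEGRATION.scale(undocumented_integrations)
    )


# Workflow automation (6-12 weeks), one extra use case, two undocumented integrations.
estimate = estimate_weeks(WeekRange(6, 12), extra_use_cases=1,
                          undocumented_integrations=2)
print(f"{estimate.low}-{estimate.high} weeks")  # 14-26 weeks
```

A 6-12 week project more than doubles at the top end once one extra use case and two mystery integrations sneak in. That is why scope creep sits at the top of the list.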

Phase 1: Strategy, Use-Case, and AI Blueprint

1-4 weeks for SMB. 3-6 months for enterprise. This is where we decide one clear problem, map how AI and business actually meet, and draft your AI blueprint, AI policy, and basic compliance guardrails so you do not break any laws in your industry.

What Phase 1 Actually Looks Like

Pick ONE problem. Example: reduce support response time by 40%. Not "transform customer experience." Not "become an AI company." One measurable outcome.

Map the data flows. Where does the data live? Who owns it? What format is it in? If nobody can answer these questions, you are not ready for Phase 2.

Draft compliance guardrails. AI policy, sector-specific laws, internal governance. For enterprises, analyst estimates put this strategy phase alone at 3-6 months before any code ships. *(Yes, months. Before a single line of code.)*

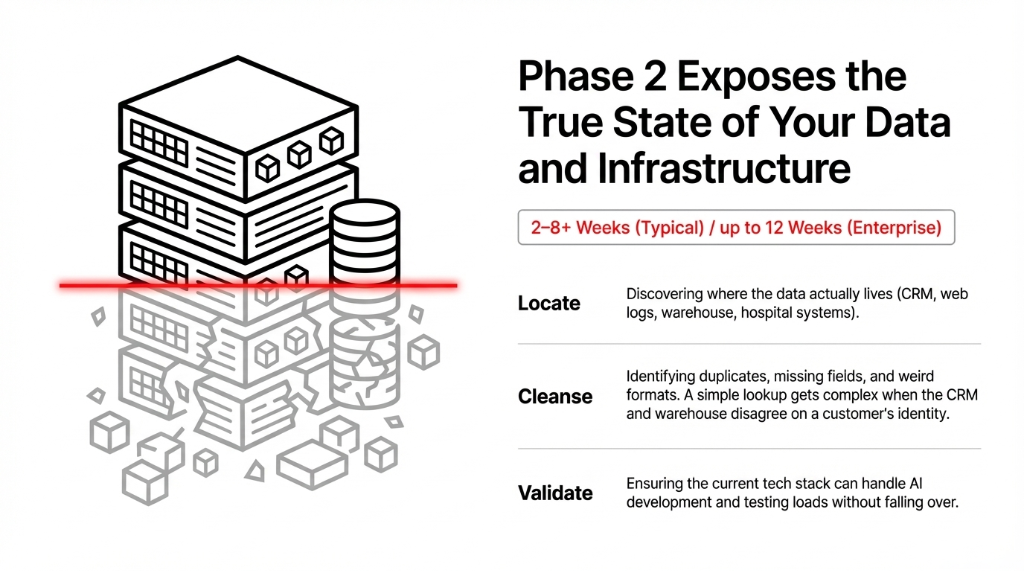

Phase 2: Data and Infrastructure Reality Check

2-8+ weeks typical. Up to 12 weeks for enterprise. This is where we stop guessing and look at your AI data. Where does it live? How dirty is it? Can your stack handle AI development and testing without falling over?

The Data Reality Check

Locate: Where does data live? CRM, warehouse systems, web logs, hospital systems, 14 different spreadsheets someone started 3 years ago. Industry guides show data and infrastructure prep takes 6-12 weeks in serious enterprise programs. For smaller businesses with clean SaaS tools, this compresses to 2-4 weeks.

Cleanse: Duplicates, missing fields, weird formats. This is where the disagreement between your CRM, billing, and warehouse over who the customer is has to be reconciled, record by record. *(We once found 4,200 duplicate customer records across 3 systems. Took 11 days to reconcile.)*

Validate: Can your current tech stack handle AI development and testing loads without falling over? This is also when we decide if we lean on existing AI platforms, paid tools, or full custom AI software to hit your goals without burning budget.
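A first pass at the Cleanse step can be as simple as grouping records from every system by a normalized key. The sketch below assumes email is a usable match key and uses made-up sample rows; the field names (`system`, `id`, `email`) are hypothetical, and real reconciliation usually needs fuzzier matching on names, phones, and addresses.

```python
from collections import defaultdict


def normalize_email(email: str) -> str:
    """Lowercase and trim so 'Ann@Example.com' and ' ann@example.com' match."""
    return email.strip().lower()


def find_duplicates(records: list[dict]) -> dict[str, list[dict]]:
    """Group records by normalized email; keep only keys with more than one record."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for record in records:
        email = record.get("email")
        if email:  # records without an email need a different matching rule
            groups[normalize_email(email)].append(record)
    return {key: recs for key, recs in groups.items() if len(recs) > 1}


# Made-up sample rows from three hypothetical systems.
records = [
    {"system": "crm", "id": "C-1", "email": "Ann@Example.com"},
    {"system": "billing", "id": "B-7", "email": " ann@example.com"},
    {"system": "warehouse", "id": "W-3", "email": "bob@example.com"},
    {"system": "billing", "id": "B-9", "email": None},
]

duplicates = find_duplicates(records)
for email, recs in duplicates.items():
    print(email, [f"{r['system']}:{r['id']}" for r in recs])
# ann@example.com ['crm:C-1', 'billing:B-7']
```

Note the record with no email at all. Those are the ones that eat the days, because no script can match them without a human deciding what counts as the same customer.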

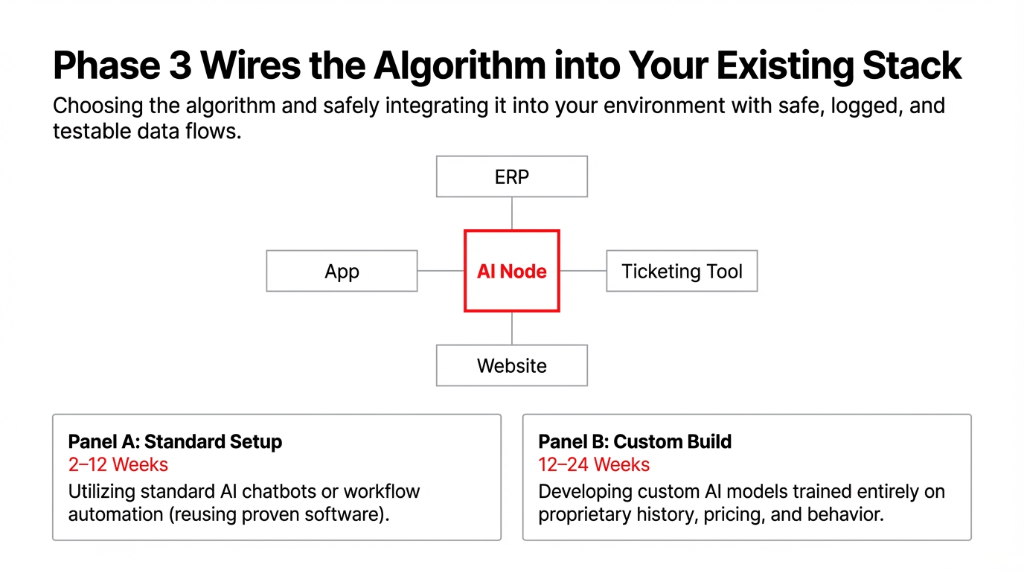

Phase 3: Building and Integrating the AI System

4-16+ weeks. Now we are actually creating AI inside your environment. Choosing or developing AI algorithms that fit your problem. Wiring them into your stack: website, app, ERP, ticketing tools. Making sure AI data flows are safe, logged, and testable.

| Integration Type | Timeline | What Is Happening |
|---|---|---|
| Standard AI Chatbot | 2-4 weeks | Plug proven software into helpdesk/CRM |
| Workflow Automation | 6-12 weeks | Connect AI to order processing, invoicing, ticket triage |
| Custom AI Model | 12-24 weeks | Train on your data, integrate, harden for production |

This is where people underestimate complexity. A "simple" AI account lookup stops being simple the moment it has to pull from a CRM, a billing system, and a warehouse that each identify the customer differently.

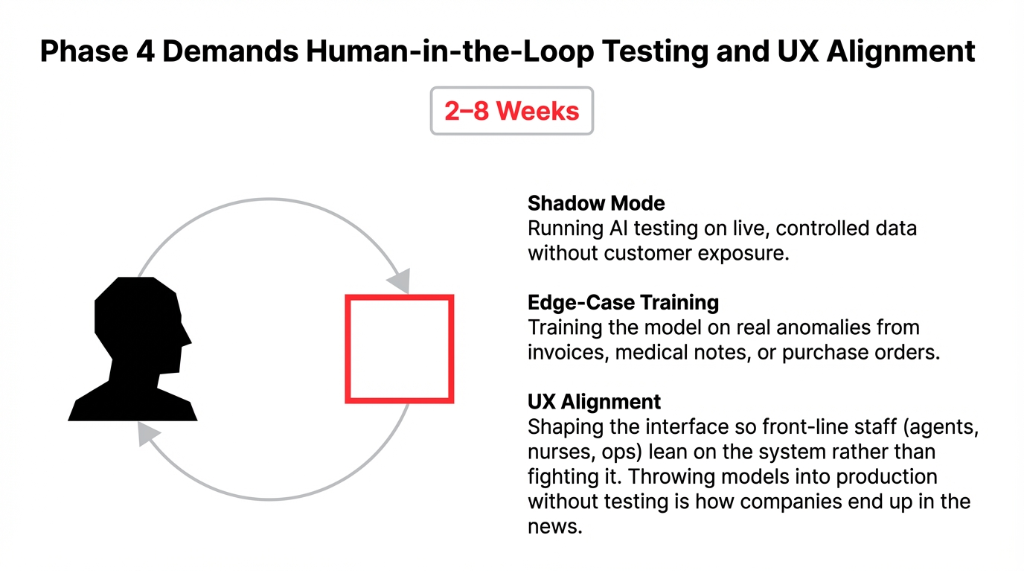

Phase 4: AI Testing, Training, and User Experience

2-8 weeks. Throwing a model into production without testing it is how you end up in a Wall Street Journal article. Teams that skip this end up with "AI help" that nobody trusts. Teams that invest a few weeks here get user experience and AI aligned so agents, nurses, or ops people actually lean on the system.

What Phase 4 Covers

Shadow mode testing: AI testing and validation on live but controlled data. No customer exposure. The AI generates replies, agents approve or fix them, and we tune prompts based on real-world feedback.

Edge-case training: Training on real anomalies from your tickets, invoices, medical notes, or purchase orders. Not generic data. Your data. Your exceptions. Your weird edge cases.

UX alignment: Shaping the AI user experience so front-line staff can actually use it. This is also where we run AI learning sessions so people understand what the tool can and cannot do.
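One simple way to read shadow-mode results is the share of AI drafts that agents approve unchanged versus edit or reject. The sketch below assumes each review is logged as one of three labels (`approved`, `edited`, `rejected`); those labels and the sample data are ours, not from any particular helpdesk tool.

```python
from collections import Counter


def shadow_mode_summary(reviews: list[str]) -> dict[str, float]:
    """Return the share of drafts per agent action (approved, edited, rejected)."""
    if not reviews:
        return {}
    counts = Counter(reviews)
    total = len(reviews)
    return {action: count / total for action, count in counts.items()}


# Made-up review log: one entry per AI draft an agent looked at.
reviews = ["approved", "edited", "approved", "rejected", "approved"]
summary = shadow_mode_summary(reviews)
print(f"approved unchanged: {summary['approved']:.0%}")  # approved unchanged: 60%
```

The edited and rejected drafts are the useful part. They are the raw material for prompt tuning and edge-case training.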

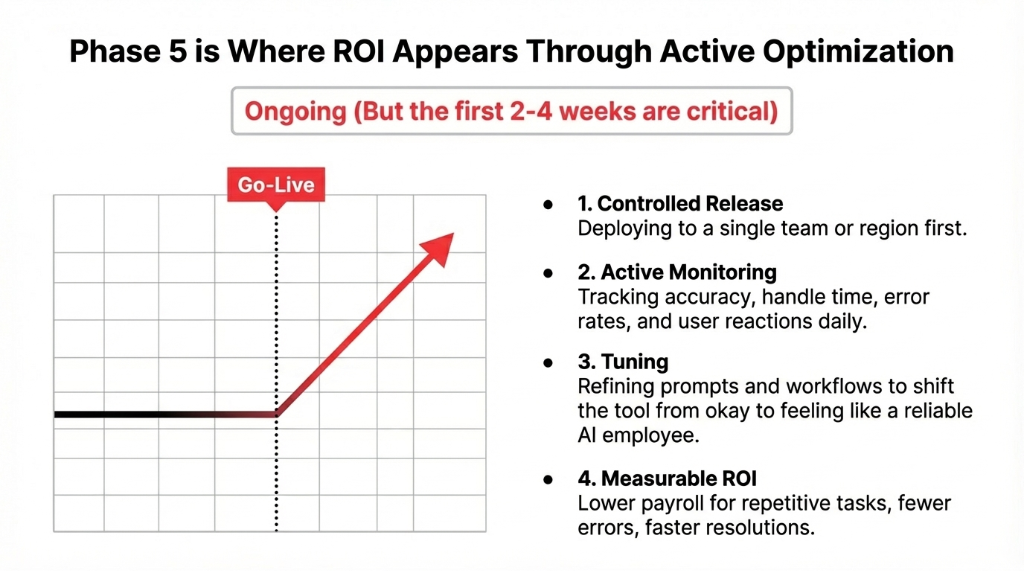

Phase 5: Go-Live, Optimization, and Scaling

Ongoing, but the first 2-4 weeks are critical. The first month after go-live is where most of the ROI appears — or disappears. Enterprise frameworks show scaling and optimization running 6-18 months as you expand to new use cases and geographies.

The Go-Live Playbook

1. Controlled release: Deploy to one team or one region. Not the entire company. Not all customers. One controlled slice.

2. Active monitoring: Track accuracy, handle time, error rates, and customer reactions on an AI dashboard. Daily. Not weekly. Daily.

3. Prompt tuning: Refine prompts, rules, and workflows to get from "okay" to "this actually feels like an AI employee." Your AI user experience evolves daily based on what agents click and override.

4. Measurable ROI: Faster ticket resolution with customer service AI. Fewer stock-outs with AI in supply chain. Better outcomes with healthcare AI. If you do this right, AI impact shows up in lower payroll, fewer errors, and happier customers.
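Daily monitoring only works if somebody has written down what "bad" looks like. A minimal sketch of that check follows; the three limits are hypothetical placeholders, and you would set your own from the pre-launch baseline.

```python
from dataclasses import dataclass


@dataclass
class DailyMetrics:
    day: str
    accuracy: float          # share of AI replies accepted without correction
    handle_time_min: float   # average minutes per ticket
    error_rate: float        # share of replies flagged as wrong


# Hypothetical limits; replace with values from your own baseline.
MIN_ACCURACY = 0.90
MAX_HANDLE_TIME_MIN = 8.0
MAX_ERROR_RATE = 0.05


def breaches(m: DailyMetrics) -> list[str]:
    """List which limits a day's metrics violate (empty list means all clear)."""
    problems = []
    if m.accuracy < MIN_ACCURACY:
        problems.append("accuracy")
    if m.handle_time_min > MAX_HANDLE_TIME_MIN:
        problems.append("handle_time")
    if m.error_rate > MAX_ERROR_RATE:
        problems.append("error_rate")
    return problems


# Made-up numbers for two days after go-live.
days = [
    DailyMetrics("day 1", accuracy=0.93, handle_time_min=7.2, error_rate=0.03),
    DailyMetrics("day 2", accuracy=0.88, handle_time_min=7.9, error_rate=0.06),
]
for m in days:
    print(m.day, breaches(m) or "ok")
# day 1 ok
# day 2 ['accuracy', 'error_rate']
```

A breach on day 2 is a reason to pause the rollout to the next team, not a reason to panic. That is the whole point of a controlled release.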

Why 95% of AI Pilots Stall

MIT-linked research shows that about 95% of enterprise AI pilots fail to deliver measurable impact on the P&L, with only around 5% making it into production with real value. The problem is not the model — it is the way companies run the implementation.

Why Pilots Fail (Research-Backed)

"Playground AI"

Teams run cool demos of advanced AI but never tie them to KPIs. 8-16 weeks of AI study, testing, and development that never sees real customers. Fun for engineers. Useless for the P&L.

No Governance

AI regulation, internal AI rules, and AI policy are afterthoughts. Legal blocks the rollout at the last minute because nobody asked them at the start. Every stalled pilot still consumed 8-16 weeks of time.

Build-Everything Mindset

Vendor-led projects that plug into existing workflows succeed roughly twice as often as DIY builds. You do not need to build your own AI. You need AI that works inside your existing tools.

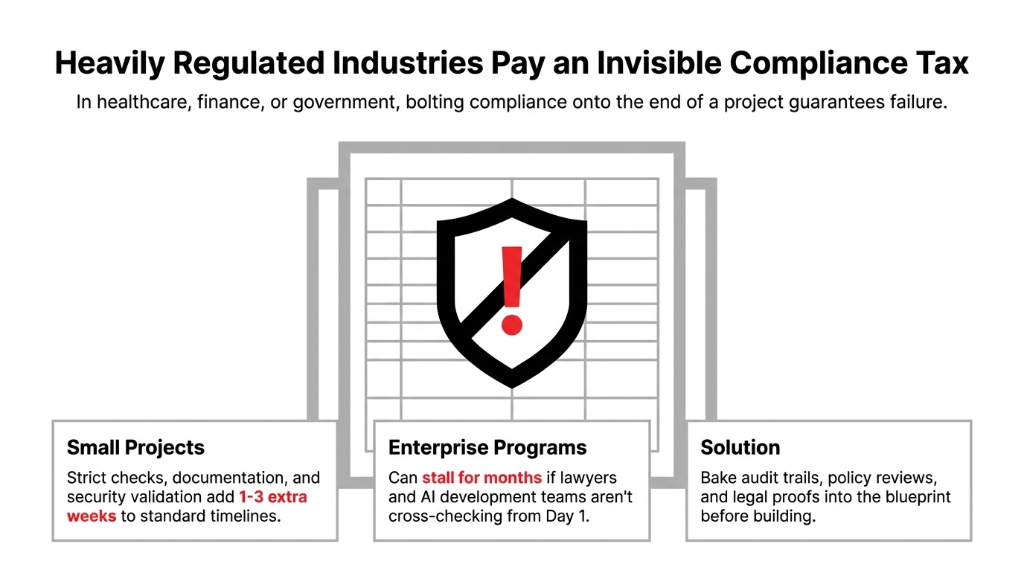

How Compliance Changes Your Timeline

In sectors like healthcare, finance, or government, AI laws and domain regulation add real weeks to the calendar. Strict compliance checks, documentation, and security validation can add 1-3 extra weeks to even small AI automation projects. MIT's work on failed AI programs highlights that heavily regulated industries tend to stall when compliance is bolted on at the end instead of baked into the plan.

If You Work in a Regulated Industry

Documentation and audit trails: Bake them into Phase 1 or watch your "6-week project" turn into 6 months. Every AI decision needs a paper trail. Every data flow needs a compliance sign-off.

Policy reviews for AI content: Legal needs to review how AI generates customer-facing content. Not after launch. Before Phase 3 starts.

Cross-checks between lawyers and AI devs: If these two teams meet for the first time at go-live, you have already failed. Get them in the same room at Phase 1. *(They will hate each other. That is fine. Better now than in a courtroom.)*

A Real-World Timeline: D2C Brand, $14.7M Revenue

You run a US D2C brand doing $14.7 million a year. Your support team lives inside Zendesk, Slack, and a mess of Excel. You want AI for business that actually cuts ticket volume, not another dashboard. Here is what we would map out:

Week 1: AI Check and Scoping

Audit your data, processes, and systems. Look at historical tickets, tags, and macros. Run quick AI data analysis on what is repetitive. Align AI policy, compliance, and sector-specific laws.

Weeks 2-3: Data and Integration Prep

Clean up tags, unify categories, map tribal knowledge. Connect helpdesk, order system, and warehouse so AI and data line up. Spin up staging environments.

Weeks 4-6: Build and Train

Configure the AI app that drafts responses, escalates edge cases, and updates orders. Use your data to train the system to understand refunds, exchanges, and fraud flags in your world.

Weeks 7-8: Testing With Humans

Run AI testing in shadow mode. AI generates replies, agents approve or fix them, we tune prompts and logic based on real-world feedback. No customer exposure yet.

Weeks 9-10: Go-Live to a Subset

Flip from "AI helping humans" to humans supervising AI. Measure handle time, CSAT, and error rates on an AI dashboard. Your AI user experience evolves daily. By week 10, you are not learning about AI in theory — you have AI in customer support reducing handle time per ticket, and your staff is using artificial intelligence actively.
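If you want to keep a plan like this honest, write it down as data and check it. The sketch below encodes the five steps above and confirms they run back to back with no gaps or overlaps; the `validate_plan` helper is a name we made up for illustration.

```python
# (name, first_week, last_week), copied from the plan above.
PLAN = [
    ("AI check and scoping", 1, 1),
    ("Data and integration prep", 2, 3),
    ("Build and train", 4, 6),
    ("Testing with humans", 7, 8),
    ("Go-live to a subset", 9, 10),
]


def validate_plan(plan: list[tuple[str, int, int]]) -> int:
    """Return total weeks; raise if phases overlap, leave a gap, or run backwards."""
    expected_start = 1
    for name, first, last in plan:
        if first != expected_start:
            raise ValueError(f"'{name}' should start at week {expected_start}, not {first}")
        if last < first:
            raise ValueError(f"'{name}' ends before it starts")
        expected_start = last + 1
    return expected_start - 1


total_weeks = validate_plan(PLAN)
for name, first, last in PLAN:
    print(f"{name}: {last - first + 1} week(s)")
print("total:", total_weeks)  # total: 10
```

When a phase slips, you change one tuple and every later phase has to move with it. That makes the cost of the slip visible instead of quietly absorbed.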

What Does "Done" Look Like?

AI is never "done," but you should draw a clear line where your first project is complete. A defined AI system is live in production. Staff are using AI daily, not bypassing it. Metrics show real benefits: fewer manual touches, faster cycles, fewer mistakes. There is an owner for AI management going forward.

After that, you iterate. Add AI in adjacent teams. Expand customer service coverage. Introduce project management AI. Keep the AI relevant with new data and policies. That is not never-ending scope creep. That is running AI the same way you run any other core system.

FAQs

How long does a basic AI chatbot take to implement?

2-4 weeks if your data and systems are in reasonable shape and you use proven tools instead of building from scratch. That includes setup, integration, testing AI replies, and basic staff training.

How long does it take to build a custom AI model?

12-24 weeks to design, train, integrate, and harden for real-world use. Enterprise roadmaps add several more months to scale across locations and business units.

Why do so many AI projects miss their deadlines?

About 95% of enterprise AI pilots fail to deliver measurable impact, largely because teams underestimate data work, integration, and change management. Vendor-led projects that plug into existing workflows succeed roughly twice as often as DIY builds.

How do regulations affect AI implementation time?

1-3 extra weeks even on small projects due to security reviews, documentation, and policy alignment. In healthcare, finance, and government, this can extend to months if compliance is bolted on at the end.

What is a sane first AI project for my company?

One use case: customer support AI, data analysis in finance, or supply chain reordering. Clear ROI. Decent data. Low regulatory risk. Once that AI employee works, expand with a clear timeline.

Stop Guessing. Get Your Real AI Timeline.

Pick your messiest, most time-consuming workflow. The one where your team wastes 37 hours a week on repetitive tasks. Bring it to a 15-minute call with us. We will map the honest timeline — not the vendor fantasy — and tell you exactly which phase will eat the most time for your specific data, stack, and compliance environment. If we cannot give you a concrete week-by-week plan in 15 minutes, we are not the right partner.