The Real Build vs Buy Question

The question is not: "Is this the best AI model?" or "Can we make a chatbot that looks like the competition's?" or "What is the best AI to use for marketing content?"

The real question is: For this one concrete use case, does building in-house create a repeatable strategic moat, or are we just trying to avoid vendor invoices?

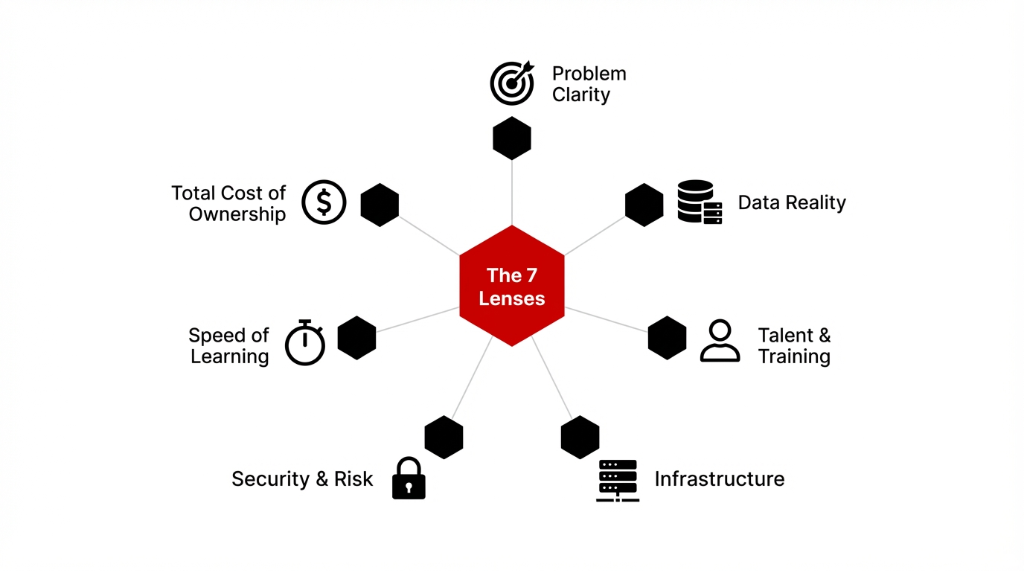

When we advise US clients, we run every AI strategy decision through seven lenses. Let us walk through each like adults, not like a hype panel at a tech conference.

Lens 1: Problem and Use Case Clarity

If your "initiative" sounds like "We want to use AI for everything" or "Let us invest in some AI so we do not fall behind," you are already off the rails. The only time you should even discuss building your own AI is when you have a single, sharp use case.

Sharp Use Cases (These Are Real)

"Reduce email support workload by 60% within 6 months." Clear metric. Clear timeline. You can evaluate build vs buy against this.

"Cut manual invoice processing time from 14 minutes to 3 minutes." Measurable. Tied to ops cost. Easy to calculate ROI.

"Predict churn for B2B contracts 90 days before renewal." Specific AI analytics use case with data you can evaluate for cleanliness right now.

Anything fuzzier than this and your build vs buy debate is theater.
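The invoice example shows why a sharp target makes ROI easy to calculate. Here is a minimal Python sketch. Only the 14-minute and 3-minute figures come from the use case above. The monthly invoice volume and the $45 loaded hourly cost are hypothetical placeholders, and `monthly_savings` is an illustrative helper, not part of any product.

```python
def monthly_savings(invoices_per_month: int,
                    minutes_before: float,
                    minutes_after: float,
                    loaded_cost_per_hour: float) -> tuple[float, float]:
    """Return (hours saved per month, labor cost saved per month)."""
    # Minutes saved per invoice, multiplied by monthly volume.
    minutes_saved = (minutes_before - minutes_after) * invoices_per_month
    hours_saved = minutes_saved / 60
    return hours_saved, hours_saved * loaded_cost_per_hour


if __name__ == "__main__":
    # Hypothetical volume and hourly cost; replace with your own numbers.
    hours, dollars = monthly_savings(2_000, 14, 3, 45.0)
    print(f"{hours:.1f} hours and ${dollars:,.0f} saved per month")
```

With those assumed inputs, it prints `366.7 hours and $16,500 saved per month`. That is the number to hold up against a vendor's price or your estimated build cost.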

Lens 2: Data Reality, Not Data Dreams

Everyone says they want "AI and data science" capabilities. Then we look under the hood and see: 17 exports from Shopify, NetSuite, and Salesforce. 4 BI dashboards nobody trusts. 3 definitions of "active customer."

Before You Build, Ask These 3 Questions

1. Do we have labeled examples for this use case? Not "data" in the abstract. Specific labeled training data for the specific problem you are solving. If no, you are not ready to build.

2. Do we control the data end-to-end? Or is it trapped in vendor tools, third-party APIs, and systems you cannot query without opening a support ticket?

3. Can we realistically maintain the data pipelines? Once the nice slides are done, who is keeping this running?

If a vendor has already solved the data headache for your exact vertical with production-ready AI models (for example, quality checks in manufacturing or customer support for e-commerce), buying often gets you to production 18-24 months sooner than building. Building in-house on bad data just means you are paying engineers to formalize your chaos.

Lens 3: Talent, Training, and Education Reality

We regularly meet companies whose "AI team" is one person who watched five YouTube videos on machine learning, a manager who skimmed a newsletter on AI for business, and no time budget for serious AI training.

Building In-House Makes Sense Only If

You hire or grow genuine AI experts, not just enthusiasts. People who have shipped ML models to production, not people who can explain what a transformer is at a dinner party.

You fund ongoing AI education and training for both technical and non-technical staff. This is a core capability, not a side hobby.

You treat AI literacy as infrastructure.

If your plan is "We will just build our own AI because ChatGPT made it look easy," you are not building a capability. You are building tech debt. Working with an AI company that already has proven developers and training programs is cheaper and less risky in the first 24-36 months.

Lens 4: Infrastructure, Platform, and Tooling

We constantly see US teams rushing into DIY cloud AI setups on AWS or Azure, stitching together a pile of random AI tools and freeware while vendors shout about "AI stacks." The real question: do you need to own the platform, or just consume a reliable one?

Build = You Own These Problems

Ops Overhead

Latency, uptime, and observability of your AI system. When the model is slow at 2 AM and your on-call engineer has never debugged inference latency, that is your problem. Not a vendor's.

Model Lifecycle

Deploying, scaling, and rolling back model versions. When the new version hallucinates more than the old one and you need to roll back in production, that is your pipeline to fix.

Compliance Burden

Compliance, logging, and data retention for every AI application. Every prompt, every response, every decision logged and auditable. If you do not already run serious production systems, you have no business building low-level AI stacks.

Lens 5: Security, Governance, and Limitations

Every board is asking about AI limitations, but very few teams are documenting them. If you build in-house, AI limitations become your liability: prompt injection, prompt leaking, jailbreaks, models trained on private data that later leaks, and misuse of internal AI tools with sensitive data.

You Need a Written Policy For

When staff use AI on customer data. Which data can go into prompts? Which data is banned? Who approves exceptions? Write it down.

Where AI assistance ends and human review begins. AI can draft, but who signs? AI can score, but who decides? The line must be explicit.

How to track AI use cases across the org. Shadow IT with AI tools is *real*. Someone installed an AI freeware tool last week. You just do not know about it yet.

If your security office groans whenever someone says "GenAI," you are not ready to build.
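The first policy question (which data can go into prompts, which is banned, and who approves exceptions) can also be encoded so internal tools can check it before a prompt is sent. This is a minimal Python sketch assuming a simple allowed / banned / needs-approval scheme. The category names, `PROMPT_DATA_POLICY`, and `check_prompt_data` are all hypothetical illustrations, not a standard or a vendor feature.

```python
from enum import Enum


class Rule(Enum):
    ALLOWED = "allowed"
    BANNED = "banned"
    NEEDS_APPROVAL = "needs_approval"


# Hypothetical policy table: which data categories may go into prompts.
# Replace with the categories and rules your written policy defines.
PROMPT_DATA_POLICY = {
    "public_marketing_copy": Rule.ALLOWED,
    "internal_docs": Rule.NEEDS_APPROVAL,
    "customer_pii": Rule.BANNED,
    "payment_card_data": Rule.BANNED,
}


def check_prompt_data(categories: list[str],
                      approved_exceptions: frozenset[str] = frozenset()) -> list[str]:
    """Return the data categories that block a prompt under the policy.

    Categories missing from the table are treated as banned, so new kinds
    of data need an explicit decision before they reach a model.
    """
    blocked = []
    for category in categories:
        rule = PROMPT_DATA_POLICY.get(category, Rule.BANNED)
        if rule is Rule.BANNED:
            blocked.append(category)
        elif rule is Rule.NEEDS_APPROVAL and category not in approved_exceptions:
            blocked.append(category)
    return blocked


if __name__ == "__main__":
    print(check_prompt_data(["public_marketing_copy", "customer_pii"]))
    print(check_prompt_data(["internal_docs"],
                            approved_exceptions=frozenset({"internal_docs"})))
```

Defaulting unknown categories to "banned" is a deliberate design choice. It forces someone to answer "who approves exceptions?" before new data reaches a model.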

Lens 6: Speed of Learning vs Being "The Best"

Everybody wants "the best AI" or "the best AI tools." Speed beats theoretical perfection.

| Factor | Build | Buy |
|---|---|---|
| Control | High | Lower |
| Time to First Value | Slower (12-24 weeks) | Faster (2-8 weeks) |
| Learning Speed | Learn by failing (expensive) | Learn what works first |
| Long-Term Depth | Potentially deeper | Dependent on vendor |
| Risk | All yours | Shared with vendor |

If you have not yet proven the basic benefits of AI in your company, you should not be chasing "the top AI stack." You should be running simple, measurable pilots: route Level-1 requests through AI customer service, use AI analytics to triage tickets, experiment with AI in internal learning programs. Learn what works, then decide where to commit serious investment.

Lens 7: Total Cost, Not Just Engineering Salary

We often hear: "Building our own AI stack is cheaper than SaaS." On paper, maybe. In reality, the total cost of "build" includes hiring and retaining AI developers, ongoing model retraining, platform maintenance, and continuous updates as the ecosystem shifts every 3-6 months. Then layer on the opportunity cost of leadership attention, migration risk, and hidden support costs when internal users complain that "this is not as good as the external tool."
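One way to make this concrete is to list every cost line, not just salaries. This minimal Python sketch assumes you can put an annual dollar estimate on each category named above. The class name, field names, and all figures are hypothetical placeholders. They are chosen only to show how a salary-only comparison can flip once the full cost is counted.

```python
from dataclasses import dataclass, fields


@dataclass
class AnnualBuildCost:
    """Yearly cost lines for an in-house AI build, in dollars (all estimates)."""
    ai_developers: float          # hiring and retaining AI developers
    model_retraining: float       # ongoing retraining and evaluation
    platform_maintenance: float   # infrastructure, monitoring, on-call
    ecosystem_updates: float      # rework as the ecosystem shifts every 3-6 months
    leadership_attention: float   # opportunity cost, estimated
    migration_risk: float         # expected cost of a forced re-platform
    internal_support: float       # supporting users who prefer the external tool

    def salary_only(self) -> float:
        # The "on paper" view that counts engineering salaries alone.
        return self.ai_developers

    def total(self) -> float:
        # Sum every cost line declared on the dataclass.
        return sum(getattr(self, f.name) for f in fields(self))


def compare(build: AnnualBuildCost, buy_per_year: float, years: int) -> dict[str, float]:
    """Multi-year totals for the salary-only build view, the full build view, and buy."""
    return {
        "build_on_paper": build.salary_only() * years,
        "build_total": build.total() * years,
        "buy_total": buy_per_year * years,
    }


if __name__ == "__main__":
    # Placeholder figures for illustration only.
    build = AnnualBuildCost(
        ai_developers=600_000, model_retraining=80_000, platform_maintenance=120_000,
        ecosystem_updates=60_000, leadership_attention=50_000,
        migration_risk=40_000, internal_support=30_000,
    )
    for name, value in compare(build, buy_per_year=700_000, years=3).items():
        print(f"{name}: ${value:,.0f}")
```

With these placeholder inputs, the salary-only build view ($1,800,000 over three years) looks cheaper than buying ($2,100,000), while the full build total ($2,940,000) does not. Your own numbers may point either way. The point is to compare full totals.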

When Building In-House Actually Makes Sense

Build Only When ALL of These Are True

The use case is core to your moat. Proprietary underwriting logic, unique business workflows, custom decision engines. Not "we want a chatbot."

Your data is proprietary and hard to replicate. If a competitor can buy the same data from a broker, your "proprietary model" is not proprietary.

You are willing to invest in AI education and internal capability. Real budgets. Real headcount. Not "weekend side projects."

You want tight integration with your own stack and do not want to depend on a single AI company or vendor.

Typical examples include a logistics firm building AI optimization tied to its unique operations, or a B2B SaaS company embedding AI to differentiate its core product. These investments can pay off over 3-5 years.

When You Should Absolutely Not Build

Buy Instead When

You just want better AI customer support for standard channels. Proven vendors already solved this. You are 18 months behind them on day one.

You mainly need AI for generic functions like drafting emails, light content creation, or basic analytics dashboards. These are commodity capabilities. Buy them.

You want to "invest in AI" because the board asked. That is not a strategy. That is pressure-driven spending. Use proven AI tools, let vendors handle the messy bits of technology and compliance, and focus on AI strategy: where to apply AI, which use cases to prioritize, and how processes will change.

The Hybrid Pattern: Buy Core, Build Edge

For most US companies, the winning pattern looks like this:

The Hybrid Model

1. Buy generalized capabilities. Off-the-shelf AI platform for chat, search, and content. SaaS AI application for support, routing, and back-office automation. Let the vendor handle infrastructure, scaling, and compliance.

2. Build your differentiating layer. Custom prompts, flows, and AI models tied to your data. Domain-specific use cases wired into your workflows. This is where your moat lives.

3. Invest in internal literacy. AI classes for managers. Training tracks for engineers and analysts. Education for non-technical staff so they can safely use AI.

This hybrid model leverages vendor AI technology while ensuring your edge logic and domain expertise stay in-house.

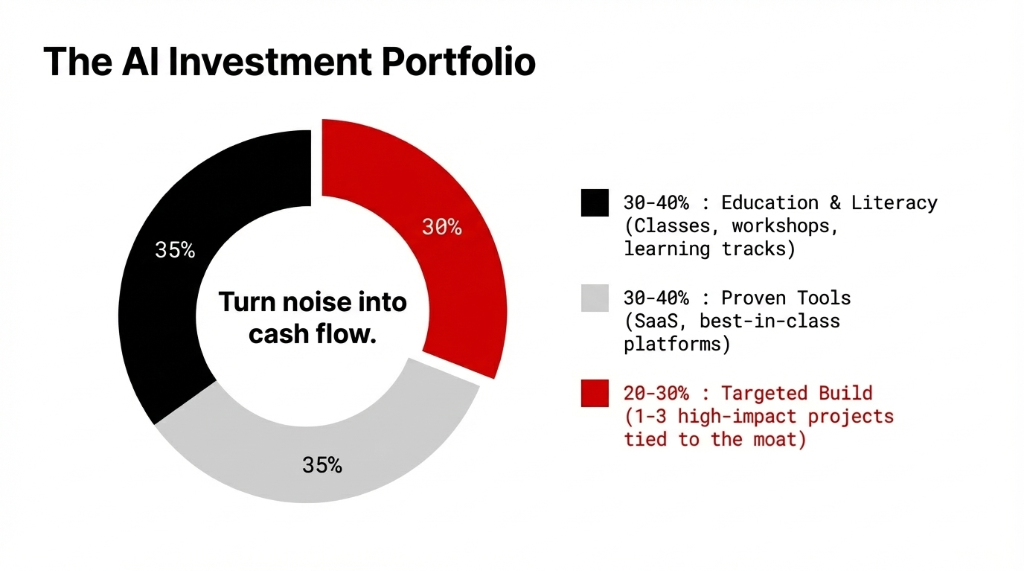

Where to Actually Invest

If you have a fixed annual budget for AI investment, we usually recommend this split in the early years. You are not trying to become an AI technology company selling platforms. You are trying to become a company that actually uses AI well inside its own business. Done right, this is how you turn AI noise into real cash-flow improvement, not just nicer slide decks.

How We Run a Build vs Buy Workshop

The 4-Step Workshop

Step 1: Inventory of current "AI." Every tool you have tried. All shadow IT where people use AI without approval. Any freeware someone installed. *(There is always freeware someone installed.)*

Step 2: Use case mapping. Classify AI use cases across support, finance, ops, sales, product, and manufacturing. Identify where AI can drive measurable outcomes. Not "nice to have." Measurable.

Step 3: Capability assessment. Separate the genuine AI experts on your current team from the enthusiasts. Check how much real AI education and training people across the org have had. Be honest about the gap.

Step 4: Decision framework. Use the seven lenses for each use case. Tag each as BUILD, BUY, HYBRID, or DEFER. Then design an AI strategy with 60-90 day sprints for hybrid experiments, clear metrics on benefits, and a practical vendor landscape view.
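Writing Step 4's tagging as an explicit rule set helps ensure every use case is judged the same way. This Python sketch is one hypothetical encoding. It simplifies the lenses and does not replace the workshop discussion. The yes/no fields stand in for assumed workshop answers, and all names are illustrative. The order of checks is also an assumption drawn from the sections above: no measurable target means DEFER; a proven vendor means BUY, or HYBRID when there is a differentiating layer and ready data; otherwise, BUILD only when every build condition holds.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    BUILD = "BUILD"
    BUY = "BUY"
    HYBRID = "HYBRID"
    DEFER = "DEFER"


@dataclass
class UseCase:
    """Yes/no workshop answers for one use case (field names are hypothetical)."""
    name: str
    has_measurable_target: bool = False       # Lens 1: clear metric and timeline
    data_is_ready: bool = False               # Lens 2: labeled, controlled, maintainable
    vendor_has_proven_solution: bool = False  # proven vendor for this exact problem
    has_differentiating_layer: bool = False   # custom prompts, flows, or models on your data
    core_to_moat: bool = False                # build condition: core to your moat
    data_is_proprietary: bool = False         # build condition: hard to replicate
    will_fund_capability: bool = False        # build condition: budget, headcount, education
    needs_tight_integration: bool = False     # build condition: no single-vendor dependency


def tag(uc: UseCase) -> Decision:
    # No sharp, measurable target: the build vs buy debate is premature.
    if not uc.has_measurable_target:
        return Decision.DEFER
    # A proven vendor exists: buy the core, and add an edge layer only when
    # something differentiating exists and the data can support it.
    if uc.vendor_has_proven_solution:
        if uc.has_differentiating_layer and uc.data_is_ready:
            return Decision.HYBRID
        return Decision.BUY
    # No vendor fits: build only when ALL build conditions hold.
    if all((uc.data_is_ready, uc.core_to_moat, uc.data_is_proprietary,
            uc.will_fund_capability, uc.needs_tight_integration)):
        return Decision.BUILD
    return Decision.DEFER


if __name__ == "__main__":
    # Hypothetical answers for illustration only.
    examples = [
        UseCase("Reduce email support workload by 60%",
                has_measurable_target=True, vendor_has_proven_solution=True),
        UseCase("Predict B2B churn 90 days before renewal",
                has_measurable_target=True, data_is_ready=True, core_to_moat=True,
                data_is_proprietary=True, will_fund_capability=True,
                needs_tight_integration=True),
        UseCase("Use AI for everything"),
    ]
    for uc in examples:
        print(f"{tag(uc).value:6}  {uc.name}")
```

With these hypothetical answers, the loop tags the support use case BUY, churn prediction BUILD, and "use AI for everything" DEFER.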

FAQs

How do I know if we should build or buy our first AI use case?

Start with one narrow use case. If a vendor already has proven AI solutions for that exact problem in your industry, buy or go hybrid first. Build only when your data and workflows are genuinely unique.

What skills do we need before building our own AI?

Engineers comfortable with ML and AI who have shipped models to production. Product owners who can define use cases. Leadership willing to fund real AI education. Weekend ChatGPT experiments do not count.

Can small US companies realistically build AI in-house?

Yes, but only for a few sharp use cases tied to their moat. Most should rely on vendors for generic capabilities, then add a thin custom layer. Trying to build everything usually burns 18-24 months.

Is building AI always better for long-term advantage?

No. Buying often gives faster wins and frees your team to focus on true differentiators. Build only where custom models, proprietary data, or specialized implementation give enduring leverage.

Where should we start if we are confused?

List every place people already use AI or wish they could. Map 10-15 potential use cases. Run each through the seven-lens framework. Tag each as BUILD, BUY, HYBRID, or DEFER. That gives you a real roadmap, not a slide deck.

Count Your Stalled POCs. That Is Your Real AI Budget Leak.

Right now, your team has at least 2-3 AI experiments running that will never hit production. Each one consumed 8-16 weeks of engineering time and produced zero revenue impact. Bring your list of AI projects (the live ones, the stalled ones, and the ones nobody talks about anymore) to a 15-minute call with us. We will run each through the seven-lens framework and tag it BUILD, BUY, HYBRID, or KILL. No slide decks. Just the framework and honest answers.