We have seen this scenario across 40+ cloud AI deployments for US-based companies. The wrong compute choice isn't a configuration problem. It is a convenience decision bleeding your budget.

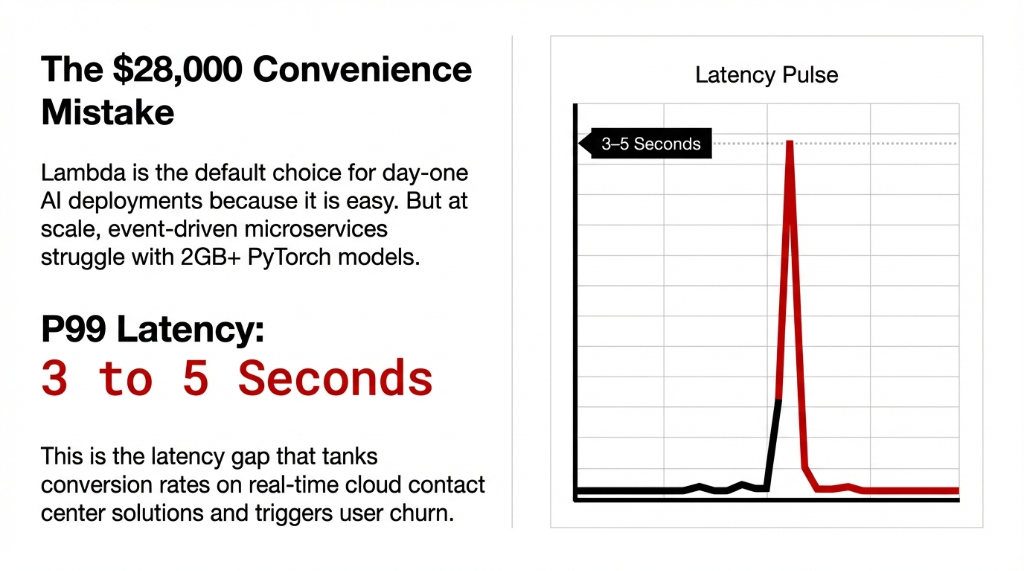

Here is what nobody tells you about your AWS Lambda function: when a cold start hits, your Python AI model is dead for 3-5 full seconds. That is the kind of latency that tanks conversion rates on cloud call center solutions.

Lambda caps you at 10,240MB RAM. Load a serious LLM and you have hit the ceiling before writing business logic. AWS serverless sounds cost-efficient until your model cold-starts right when US East Coast traffic peaks.

The Latency Numbers They Don't Share

We ran benchmarks on a 3GB NLP classification model averaging 500 requests/day. ECS delivers 37% lower mean latency and completely eliminates the cold start problem.

| Metric | AWS Lambda | Amazon ECS |
|---|---|---|
| Mean Latency | 245ms | 156ms |
| P99 Latency | 1,800ms+ | ~420ms |
| Cold Start | 3–5 seconds | None |

If you are running user-facing AI services where P99 latency matters, Lambda is the wrong platform. Do not compromise product quality for perceived simplicity.
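To see why P99 tells a different story than the mean, here is a minimal sketch of a nearest-rank percentile calculation. The latency samples are hypothetical, made up for illustration and not taken from the benchmark above: mostly warm requests plus a couple of cold starts.

```python
import math
from statistics import mean

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Hypothetical latencies in ms: 97 warm requests, one slow request, two cold starts.
latencies_ms = [150] * 97 + [400, 4000, 4200]

print(f"mean = {mean(latencies_ms):.1f} ms")
print(f"p50  = {percentile(latencies_ms, 50)} ms")
print(f"p99  = {percentile(latencies_ms, 99)} ms")
```

In this made-up sample the mean stays in the low hundreds of milliseconds while the P99 lands on a cold start, which is the pattern the table describes.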

The Scale-Up Intersection Point

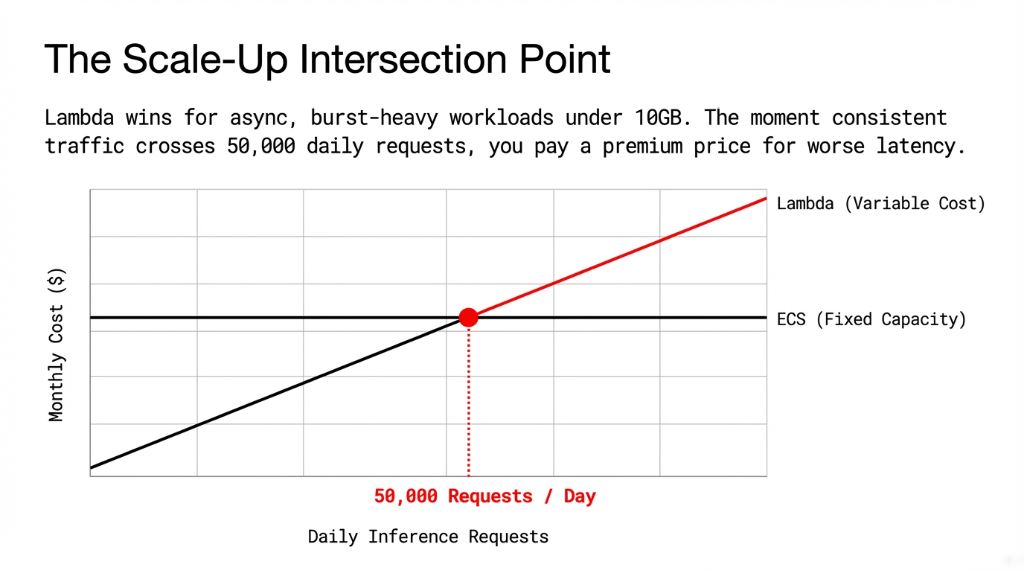

Lambda genuinely wins in one specific scenario: async, low-traffic, burst-heavy workloads. If your model is under 10GB and processes infrequent offline batches, Lambda's pay-per-invocation pricing is brutally efficient.

But the moment you cross 50,000 steady requests per day, the math flips. You are paying Lambda's premium pricing to get worse latency than ECS. That is a strategic miscalculation.

Lambda at 500k requests/month costs ~$100/month once you factor in the Provisioned Concurrency required to avoid cold starts. ECS on EC2 Reserved Instances costs ~$62/month with guaranteed consistent response times.

Reclaiming Your Architecture with ECS

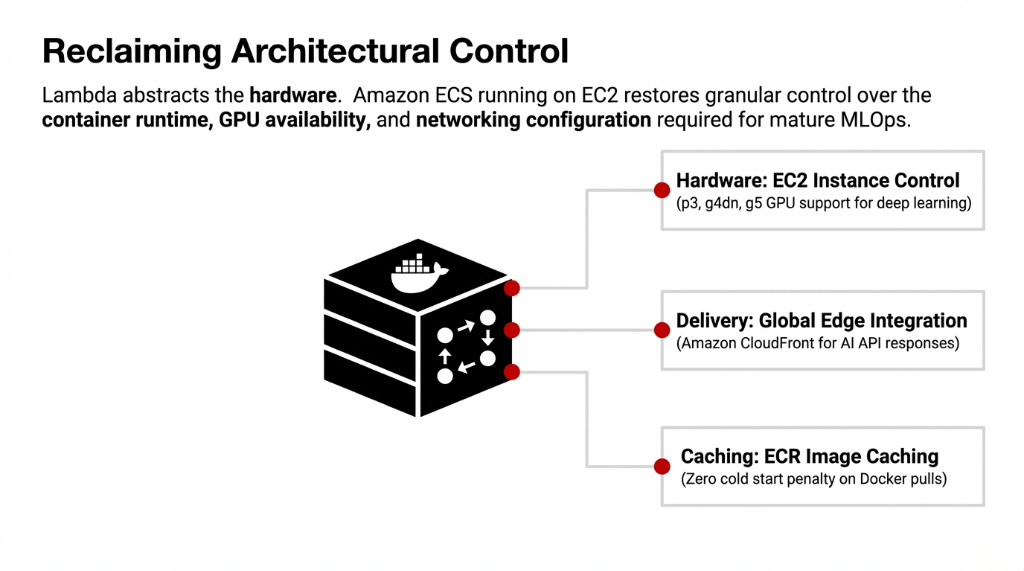

Amazon ECS running on EC2 gives your DevOps engineers full control. Lambda abstracts away your access to GPU hardware and networking routes.

- ▸ GPU Access: ECS enables the p3, g4dn, and g5 instances needed for serious GPU-accelerated AI models.

- ▸ Zero Penalty Image Pulls: Connect natively to Amazon ECR, with Docker image caching on the instance.

We moved a US-based fraud detection model off Lambda and onto Amazon ECS on Graviton instances, and its P99 dropped from 4.2 seconds to 310ms. A 93% improvement.

Managed IT services rely heavily on ECS because it allows for real AWS cost optimization without sacrificing latency.

Fix Your AI Inference Architecture

Stop over-provisioning Lambda and tanking your user experience. We surface an average of $2,400-$4,100/month in wasted compute on our first call.

FAQs

Does AWS Lambda support GPU for AI inference?

No. As of 2026, AWS Lambda does not support GPU instances. Lambda is limited to CPU-based compute with up to 10,240MB RAM. For any deep learning inference workload requiring GPU acceleration you must use ECS on EC2 GPU instances or Amazon SageMaker endpoints.

What is the real cost difference between Lambda and ECS for AI at 100,000 requests/month?

At 100,000 inference requests/month with a 2-second average execution time and 3GB memory, Lambda costs approximately $10–$16/month before Provisioned Concurrency. A t3.medium EC2 Reserved Instance running ECS costs ~$15/month with zero cold starts and consistent P99. At this volume, costs are nearly equal, but ECS wins on latency by 37%.
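The low end of that Lambda estimate can be reproduced with a few lines of arithmetic. This is a rough sketch, not a billing tool: the two price constants are assumptions based on published on-demand x86 rates in us-east-1 and should be checked against the current AWS pricing page. The function name `lambda_monthly_cost` is ours, and the free tier and Provisioned Concurrency are ignored.

```python
# Assumed on-demand Lambda prices (x86, us-east-1). Verify before relying on them.
PRICE_PER_GB_SECOND = 0.0000166667
PRICE_PER_MILLION_REQUESTS = 0.20

def lambda_monthly_cost(requests, avg_duration_s, memory_gb):
    """Estimate monthly on-demand cost, ignoring free tier and Provisioned Concurrency."""
    compute = requests * avg_duration_s * memory_gb * PRICE_PER_GB_SECOND
    request_fees = requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return compute + request_fees

# The scenario from the answer above: 100,000 requests, 2 s each, 3GB memory.
cost = lambda_monthly_cost(requests=100_000, avg_duration_s=2.0, memory_gb=3.0)
print(f"Estimated Lambda cost: ${cost:.2f}/month")
```

Swapping in your own request volume, duration, and memory size shows how quickly the compute term grows as traffic becomes steady.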

How do you eliminate Lambda cold starts for AI models?

Three approaches. (1) Provisioned Concurrency keeps execution environments pre-initialized but adds $40–$80/month. (2) Scheduled pings use an Amazon EventBridge (formerly CloudWatch Events) rule to invoke your Lambda every 5 minutes and keep it warm. (3) Migrating to ECS eliminates cold starts entirely. For production AI serving, ECS is the right answer.
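For the scheduled-ping approach, the handler usually needs to recognize the ping and return early. Here is a minimal sketch under stated assumptions: the `warmup` event key is a convention we made up for the scheduled rule's payload, and `load_model` is a stand-in for real model loading.

```python
def load_model():
    """Stand-in for an expensive model load (hypothetical; replace with real loading code)."""
    return lambda text: {"label": "positive" if "good" in text else "negative"}

# Module scope: runs once per execution environment, so warm invocations reuse the model.
MODEL = load_model()

def handler(event, context):
    # Scheduled pings carry {"warmup": true} (our assumed payload) and skip inference.
    if event.get("warmup"):
        return {"warmed": True}
    return MODEL(event["text"])

print(handler({"warmup": True}, None))
print(handler({"text": "good service"}, None))
```

Keep in mind that a single scheduled ping only keeps one execution environment warm, so concurrent requests beyond that can still hit a cold start.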

Can ECS on AWS scale as fast as Lambda for sudden traffic spikes?

ECS with Fargate and Application Auto Scaling can scale new tasks in 45–90 seconds. Lambda scales in milliseconds. For sudden burst traffic Lambda responds faster. For sustained traffic growth over 5–15 minutes, ECS autoscaling catches up completely and delivers better performance.
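As an illustration of what ECS autoscaling configuration can look like, the sketch below builds the arguments for a target-tracking policy on average CPU. The cluster name, service name, 60% target, and cooldown values are all hypothetical. The policy would be applied through Application Auto Scaling's `put_scaling_policy` call after the service has been registered as a scalable target.

```python
def build_scaling_policy(cluster, service, target_cpu_percent=60.0):
    """Build keyword arguments for Application Auto Scaling's put_scaling_policy."""
    return {
        "PolicyName": f"{service}-cpu-target-tracking",
        "ServiceNamespace": "ecs",
        "ResourceId": f"service/{cluster}/{service}",
        "ScalableDimension": "ecs:service:DesiredCount",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": target_cpu_percent,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ECSServiceAverageCPUUtilization"
            },
            "ScaleOutCooldown": 60,   # seconds between scale-out steps (assumed value)
            "ScaleInCooldown": 300,   # scale in more cautiously (assumed value)
        },
    }

# Hypothetical cluster and service names.
policy = build_scaling_policy("inference-cluster", "nlp-classifier")
# With boto3 installed and AWS credentials configured, this could be applied as:
#   boto3.client("application-autoscaling").put_scaling_policy(**policy)
print(policy["ResourceId"])
```

A shorter scale-out cooldown than scale-in cooldown is a common choice for inference services, since adding capacity late costs more than removing it late.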

Is EKS better than ECS for large AI deployments on AWS?

Amazon EKS is better when you need Kubernetes-native features: Horizontal Pod Autoscaler, custom resource definitions, and portability to other cloud providers. ECS is simpler to operate and cheaper to run for single-cloud AWS deployments. EKS starts making sense at 50+ microservices.