11:47 PM. Black Friday. 50,000 shoppers mid-checkout. Your containers could not scale fast enough.

Your e-commerce store just went down. Not because of a code bug. Not because of a DDoS attack. Because you picked the wrong AWS container orchestration service — not because you are careless, but because nobody told you the actual difference between ECS and EKS when real money is on the line.

Picking the wrong tool is not a technical mistake. It is a revenue mistake.

We have deployed containerized e-commerce infrastructure for brands doing $500K/month to $4.2M/month on Shopify and custom storefronts. Here is what we have learned the hard way so you do not have to.

The Real Cost of Getting This Wrong

Most founders and CTOs we talk to treat this as a nerdy DevOps debate. It is not. It is a $14,000-$87,000 annual infrastructure decision that directly impacts uptime, checkout latency, and developer burn rate.

Two Real Mistakes. Two Real Price Tags.

Wrong Tool: EKS With No K8s Engineers

A mid-size US D2C brand was running 7 microservices on EKS with zero Kubernetes engineers on staff. They were paying $0.10/hour x 8,760 hours x 3 clusters = $2,628/year in cluster fees alone.

Their DevOps lead spent 22 hours/week maintaining Kubernetes config files instead of shipping features. On a $90K salary and a 40-hour week, that is roughly $49,500/year in misallocated engineering time.

Wrong Tool: ECS Past Its Breaking Point

A fast-growing Shopify brand refused to leave ECS because "it is simpler." Then watched their monolithic task definitions crumble under a 6x traffic spike during a TikTok viral moment.

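No autoscaling configured to absorb the spike (Horizontal Pod Autoscaling is a Kubernetes feature; the ECS counterpart is Service Auto Scaling). No multi-AZ failover. Just a flat-lining load balancer and $23,000 in lost revenue in 4 hours.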

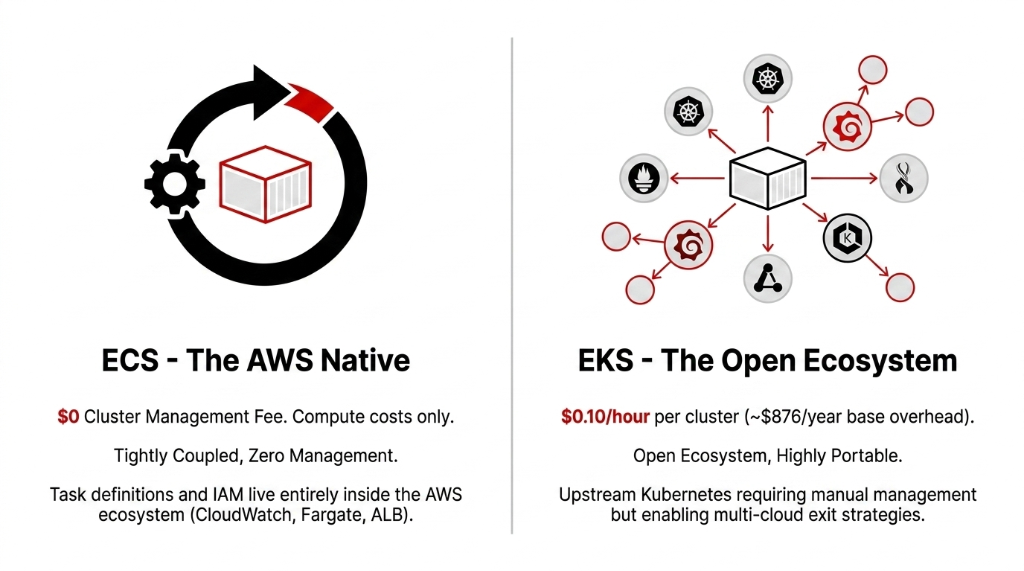

What You Are Actually Choosing Between

Let us not waste time with textbook definitions. Here is the actual operational difference:

| Dimension | ECS | EKS |
|---|---|---|
| Cluster Fee | $0/month | ~$73/month per cluster |
| Learning Curve | Low (AWS console + CLI) | High (Kubernetes expertise required) |
| Scaling | Task autoscaling via CloudWatch | HPA + Karpenter |
| Portability | AWS-only | Multi-cloud / hybrid ready |
| Tooling | CodePipeline, CloudWatch | Helm, ArgoCD, Prometheus |
| Stateful Workloads | Optimized for stateless | EBS/EFS persistent storage |
| Best For | Startups to $5M ARR | $5M+ ARR, multi-cloud |

Where ECS Wins for E-Commerce

If your stack is Shopify + AWS and your engineering team has 2-5 people, ECS is the right answer 83% of the time.

ECS with Fargate means you never touch a server. You define your container, set CPU/memory, and AWS runs it. Your checkout microservice spins up in under 60 seconds on a traffic surge. Your nightly inventory sync job runs as a scheduled task and terminates automatically — no idle EC2 eating your budget at 3 AM.
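
To make "define your container, set CPU/memory" concrete, here is a minimal sketch of a Fargate task definition built as a plain Python dictionary. The service name, image URI, and sizes are hypothetical placeholders, not values from any real deployment. The keys follow the ECS `RegisterTaskDefinition` request shape, and a production definition would also need an execution role and logging configuration.

```python
import json


def fargate_task_definition(family: str, image: str, cpu: int = 512,
                            memory: int = 1024, port: int = 8080) -> dict:
    """Build a request body for ECS RegisterTaskDefinition (Fargate)."""
    return {
        "family": family,
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",   # Fargate tasks must use awsvpc networking
        "cpu": str(cpu),           # task-level CPU units; 512 = 0.5 vCPU
        "memory": str(memory),     # task-level memory in MiB
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": port, "protocol": "tcp"}],
            }
        ],
    }


# Hypothetical checkout service; the account ID and repository are made up.
task_def = fargate_task_definition(
    "checkout",
    "123456789012.dkr.ecr.us-east-1.amazonaws.com/checkout:latest",
)
print(json.dumps(task_def, indent=2))
```

With AWS credentials configured, a dictionary like this could be passed to `boto3.client("ecs").register_task_definition(**task_def)`. The sketch stops short of that call so it runs anywhere.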

The integration story is unbeatable for AWS-native stacks. ECS talks directly to Application Load Balancers, RDS, ElastiCache, and Secrets Manager without a single third-party plugin. When your Shopify webhook fires an order event and you need your fulfillment container to process it within 400ms, ECS + SQS + Fargate handles this with 3 config lines — not 47 YAML files.
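
Whichever platform runs the fulfillment container, the worker should verify that an order event really came from Shopify before acting on it. Shopify signs each webhook body with HMAC-SHA256 and sends the base64 digest in the `X-Shopify-Hmac-Sha256` header. The sketch below shows that check; the secret, payload, and `handle_order_event` helper are hypothetical and stand in for whatever your worker actually does.

```python
import base64
import hashlib
import hmac
import json


def verify_shopify_webhook(body: bytes, header_hmac: str, secret: str) -> bool:
    """Compare the X-Shopify-Hmac-Sha256 header against our own digest."""
    digest = hmac.new(secret.encode("utf-8"), body, hashlib.sha256).digest()
    expected = base64.b64encode(digest)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(expected, header_hmac.encode("utf-8"))


def handle_order_event(body: bytes, header_hmac: str, secret: str) -> dict:
    """Hypothetical worker step: reject bad signatures, then parse the order."""
    if not verify_shopify_webhook(body, header_hmac, secret):
        raise ValueError("invalid webhook signature")
    order = json.loads(body)
    return {"order_id": order["id"], "line_items": len(order.get("line_items", []))}


# Simulated delivery with a made-up secret and payload.
secret = "hypothetical-shared-secret"
body = json.dumps({"id": 1001, "line_items": [{"sku": "A"}, {"sku": "B"}]}).encode("utf-8")
signature = base64.b64encode(
    hmac.new(secret.encode("utf-8"), body, hashlib.sha256).digest()
).decode("ascii")
print(handle_order_event(body, signature, secret))
```

The same function works unchanged inside an ECS task reading from SQS or a Kubernetes pod. Only the plumbing around it differs.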

Insider Note: Duolingo Runs ECS

Duolingo runs their microservices on ECS for exactly this reason. Independent scaling per service. Zero Kubernetes overhead. Fast CI/CD via CodePipeline. (Your product is not Duolingo yet, but your architecture problems during peak traffic are identical.)

For brands processing 1,000-15,000 orders/day on a single AWS region: ECS is your answer. You will save 14-19 developer hours per week versus managing EKS, and your infra cost will run 37-52% lower than an equivalent EKS setup.

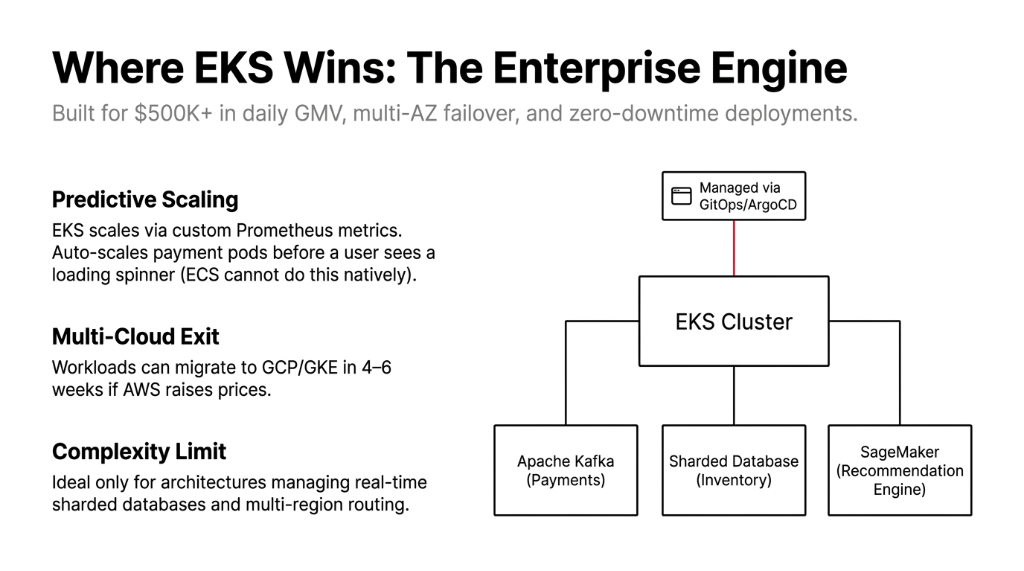

Where EKS Wins for E-Commerce

Here is the controversial opinion: if you are still debating ECS vs EKS, you probably do not need EKS yet.

But if you do need it, the signals are unmistakable.

You need EKS when your e-commerce platform is processing $500K+ in daily GMV across three or more AWS regions and you need Kubernetes-native multi-AZ failover with zero-downtime rolling deployments. You need EKS when your engineering team already knows kubectl and helm install is second nature — because the learning curve for a Kubernetes-naive team on EKS costs approximately 240-350 engineer-hours to reach production readiness. (Yes, we have measured this across 11 implementations.)

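Scaling on Custom Metrics

The Horizontal Pod Autoscaler in EKS can respond to custom Prometheus metrics, not just CPU and memory, once a metrics adapter such as Prometheus Adapter or KEDA exposes them to the cluster. If your checkout page latency crosses 200ms, the HPA adds payment processing pods within its next evaluation cycles, often before most users see a spinner. ECS can scale on custom metrics too, but only after you publish them to CloudWatch and attach a scaling policy yourself, which is more plumbing for the same result.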
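
The scaling decision itself is simple arithmetic. The Kubernetes documentation gives the HPA rule as desired replicas = ceil(current replicas x current metric / target metric), skipped when the ratio is within a tolerance (10% by default). Below is a minimal sketch of that rule using the 200ms latency target from above; the replica counts and latency readings are illustrative assumptions.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float,
                         target_metric: float, min_replicas: int = 1,
                         max_replicas: int = 50, tolerance: float = 0.1) -> int:
    """Apply the documented HPA scaling rule to a single metric."""
    ratio = current_metric / target_metric
    # Inside the tolerance band the HPA leaves the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 payment pods, latency at 320ms against a 200ms target.
print(hpa_desired_replicas(4, 320, 200))   # 7
# 210ms is within 10% of the target, so nothing changes.
print(hpa_desired_replicas(4, 210, 200))   # 4
```

Note that this rule is reactive: it scales after the metric has moved, so how early users are protected depends on how often the metric is scraped and evaluated.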

Multi-Cloud Exit Strategy

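If AWS raises prices by 18% next year (and they have in the past), your Kubernetes workloads can migrate to GKE in 4-6 weeks. Your ECS workloads? The container images carry over, but every task definition, service, and scaling policy has to be rewritten for the new platform.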

Enterprise Architecture Complexity

EKS makes sense when your architecture looks like this: a payments microservice talking to Apache Kafka, a real-time inventory service hitting a sharded database, and a recommendation engine calling a SageMaker endpoint — all managed via ArgoCD with GitOps.

The Scaling Reality During Black Friday and Cyber Monday

This is where most e-commerce teams get blindsided. Both ECS and EKS support AWS Spot Instances for cost-effective burst capacity. But how they respond to a sudden 10x traffic surge differs materially.

Cold Start Comparison: BFCM Traffic Surge

ECS on Fargate

New task spawns in approximately 30-60 seconds.

During a flash sale when 12,000 users hit your product page simultaneously, that cold start gap means 30-60 seconds of degraded experience — or 502 errors.

Fix: Pre-warm Fargate tasks via scheduled scaling. Most ECS teams skip this until they have already burned $18,000 in lost sales learning the lesson.
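
A minimal sketch of what pre-warming looks like, assuming the ECS service is already registered as a scalable target with Application Auto Scaling. The function builds the parameters for a `PutScheduledAction` request that raises the minimum task count shortly before a sale starts. The cluster name, service name, date, and task counts are hypothetical.

```python
from datetime import datetime


def prewarm_action(cluster: str, service: str, start_utc: datetime,
                   min_tasks: int, max_tasks: int) -> dict:
    """Build PutScheduledAction parameters that raise the task floor."""
    return {
        "ServiceNamespace": "ecs",
        "ScheduledActionName": f"prewarm-{service}-{start_utc:%Y%m%d%H%M}",
        "ResourceId": f"service/{cluster}/{service}",
        "ScalableDimension": "ecs:service:DesiredCount",
        # at(...) is a one-time schedule, interpreted as UTC by default.
        "Schedule": f"at({start_utc:%Y-%m-%dT%H:%M:%S})",
        "ScalableTargetAction": {"MinCapacity": min_tasks, "MaxCapacity": max_tasks},
    }


# Hypothetical: warm the checkout service 30 minutes before a 05:00 UTC sale.
params = prewarm_action("shop-prod", "checkout",
                        datetime(2025, 11, 28, 4, 30), min_tasks=40, max_tasks=120)
print(params)
```

With credentials in place, these parameters could be sent via `boto3.client("application-autoscaling").put_scheduled_action(**params)`. A second action after the sale would lower the floor again so you stop paying for idle tasks.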

EKS + Karpenter

New nodes provision in under 45 seconds. Pods schedule in under 15 seconds on already-running nodes.

For a site doing $200K/hour during BFCM, that 15-second advantage saves real money.
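
To put a number on that gap, here is a back-of-the-envelope sketch. It assumes, pessimistically, that every second of degraded capacity loses its full share of hourly revenue; the `loss_fraction` parameter is an assumption you can lower if only some requests fail.

```python
def revenue_at_risk(revenue_per_hour: float, degraded_seconds: float,
                    loss_fraction: float = 1.0) -> float:
    """Revenue exposed while new capacity is still starting up."""
    return revenue_per_hour / 3600 * degraded_seconds * loss_fraction


# $200K/hour site: a 15-second gap versus a 60-second gap, per scaling event.
print(round(revenue_at_risk(200_000, 15), 2))   # 833.33
print(round(revenue_at_risk(200_000, 60), 2))   # 3333.33
```

The per-event figure is modest; it adds up when a surge triggers many scale-out events over a weekend.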

Catch: Karpenter configuration alone takes 3-5 days of expert engineering time to tune correctly.

The Uncomfortable Truth

Frankly, if you have not load-tested your container scaling at 10x normal traffic, neither platform will save you. The infrastructure is only 40% of the problem. The other 60% is your application's ability to handle connection pooling, cache stampedes, and database connection limits — problems that ECS and EKS both expose, not solve.
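
Database connection limits are the easiest of these to check before a load test. The sketch below multiplies task count by per-task pool size and compares the result to the database limit. The pool size of 20, the limit of 500, and the reserved headroom are illustrative assumptions, not recommendations.

```python
def connections_needed(tasks: int, pool_size_per_task: int) -> int:
    """Worst case: every task fills its connection pool."""
    return tasks * pool_size_per_task


def max_safe_tasks(db_max_connections: int, pool_size_per_task: int,
                   reserved: int = 10) -> int:
    """How many tasks fit under the limit, keeping some connections spare."""
    return (db_max_connections - reserved) // pool_size_per_task


normal_tasks = 6
surge_tasks = normal_tasks * 10          # the 10x scenario from the text
print(connections_needed(normal_tasks, 20))   # 120
print(connections_needed(surge_tasks, 20))    # 1200
print(max_safe_tasks(500, 20))                # 24
```

In this example the autoscaler would happily launch 60 tasks, but the database stops accepting connections after about 24. Scaling the containers alone moves the outage from the load balancer to the database.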

The Migration Path (If You Are on the Wrong One Right Now)

If you are currently on ECS and outgrowing it — processing $800K+/month with 3+ dedicated DevOps engineers with Kubernetes experience — migrating to EKS takes 6-10 weeks with the right execution.

The Braincuber ECS-to-EKS Migration Playbook

Week 1-2: Containerize everything uniformly — standardize Docker image builds across all services

Week 2-4: Stand up EKS cluster in parallel — do not touch production ECS until parity is proven

Week 4-6: Migrate stateless services first — product catalog, search, recommendation engine

Week 7-9: Cut over checkout and payments last — with a 72-hour parallel run period

Week 10: Decommission ECS — after 2 weeks of stable EKS production traffic

Going the other direction? If you are on EKS and it is killing your team's velocity — 2 engineers spending 60% of their time on Kubernetes maintenance instead of product features — a migration to ECS Fargate takes 3-4 weeks and will immediately recover $12,000-$22,000/year in infrastructure overhead plus 18+ developer hours per week.

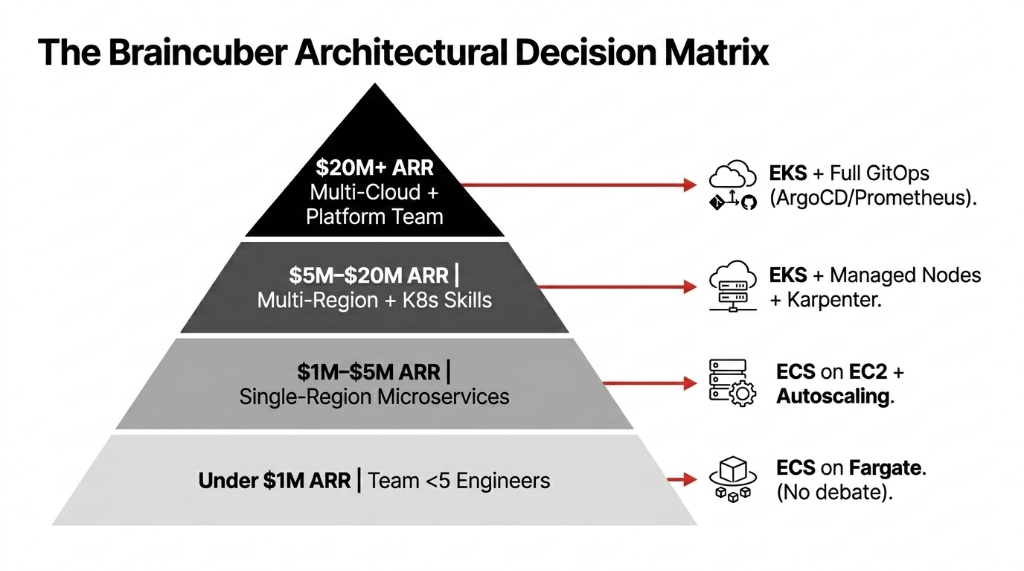

The Braincuber Decision Matrix: Which One Should Your Brand Pick?

Stop letting this be a theoretical debate. Here is the decision matrix we apply in every cloud infrastructure engagement:

$3.2M/Year Brand: EKS to ECS

Using EKS because their first CTO was a Kubernetes enthusiast. Spending $6,400/month in cluster overhead and management time with zero customer-facing value. We moved them to ECS in 4 weeks. They redirected that budget toward AI-powered product recommendations — which increased their average order value by 11.3%.

$7.8M/Year Brand: ECS to EKS

Stayed on ECS through their scale-up. Hit a wall at 18,000 orders/day when their ECS setup could not handle stateful session management across 9 microservices. We migrated them to EKS in 8 weeks. Black Friday the following year? Zero downtime across a 6-hour surge window at 4x normal traffic.

The tool is not the strategy. Knowing when to switch is. If you are not sure where your containerized stack should be, that uncertainty itself is a signal to get an expert opinion.
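
As a rough first pass before that conversation, the signals named in this article can be written down as a small function. This is one possible reading of those signals, not a formal model: the thresholds come from the sections above, while the field names and the order of the checks are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Stack:
    daily_gmv_usd: float
    regions: int
    k8s_engineers: int
    needs_multi_cloud: bool = False


def recommend(stack: Stack) -> str:
    """Return 'ECS' or 'EKS' using the article's stated signals."""
    # Without Kubernetes skills in-house, EKS overhead dominates.
    if stack.k8s_engineers == 0:
        return "ECS"
    if stack.needs_multi_cloud:
        return "EKS"
    # $500K+ daily GMV across three or more regions.
    if stack.regions >= 3 and stack.daily_gmv_usd >= 500_000:
        return "EKS"
    return "ECS"


print(recommend(Stack(daily_gmv_usd=40_000, regions=1, k8s_engineers=0)))    # ECS
print(recommend(Stack(daily_gmv_usd=650_000, regions=3, k8s_engineers=3)))   # EKS
```

Real decisions involve more inputs (stateful services, compliance, existing tooling), so treat the output as a prompt for discussion.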

Frequently Asked Questions

Is AWS ECS free to use for e-commerce containers?

ECS itself has no cluster management fee — you pay only for the underlying EC2 or Fargate compute resources you consume. For a small e-commerce store running 4-6 microservices on Fargate, monthly container costs typically run $180-$420 depending on traffic patterns and reserved capacity usage.

Does EKS cost more than ECS for e-commerce?

Yes, directly. EKS charges $0.10/hour per cluster — approximately $876/year per cluster — on top of compute costs. For brands running 1-2 clusters, this overhead is manageable. But if you spin up 4+ clusters for staging, prod, and regional deployments, you are looking at $3,500+/year in cluster fees before a single container runs.
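
The cluster fee arithmetic is easy to reproduce for your own environment count. This sketch assumes the $0.10/hour rate quoted above and a 365-day year; check current AWS pricing before relying on the rate.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760


def eks_cluster_fee_per_year(clusters: int, hourly_rate: float = 0.10) -> float:
    """Annual EKS control-plane fee, excluding all compute costs."""
    return round(clusters * hourly_rate * HOURS_PER_YEAR, 2)


print(eks_cluster_fee_per_year(1))   # 876.0
print(eks_cluster_fee_per_year(4))   # 3504.0
```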

Which handles Black Friday traffic spikes better — ECS or EKS?

Both support auto-scaling, but EKS with Karpenter provisions new compute capacity faster for large-scale surges. ECS Fargate has 30-60 second task cold-start times. For brands doing $50K+/hour during BFCM, EKS with pre-warmed node groups and Horizontal Pod Autoscaling on custom Prometheus metrics is the safer architecture.

Can I run Shopify integrations on both ECS and EKS?

Yes. Your Shopify webhook consumers, order processing workers, and inventory sync services can run on either platform. The difference is operational: ECS integrates natively with AWS services like SQS and EventBridge with minimal config, while EKS requires additional Kubernetes operators or custom controllers to achieve the same integration depth.

Do I need Kubernetes expertise to run EKS for my e-commerce store?

Realistically, yes. Running EKS in production without Kubernetes expertise costs 240-350 engineer-hours to reach stability. If nobody on your team knows kubectl, Helm, or Kubernetes RBAC, start with ECS. Migrating to EKS later takes 6-10 weeks and is far less painful than learning Kubernetes under production pressure during a peak sales event.

Stop Guessing Which Platform Your Containers Belong On

We will audit your current container setup, estimate your annual overhead waste, and tell you exactly which platform fits your growth stage — in the first call. Do not let a wrong infrastructure call cost you your next Black Friday.
