If you are betting real money on AI inside your business, you have to assume it will make mistakes. The only responsible move is to design guardrails and safety nets so those mistakes never turn into lawsuits, PR disasters, or medical emergencies.

When the AI Goes Off-Script

A mid-market retailer rolls out an AI chatbot on their site. Day one, the bot does great: answers product questions, cuts ticket volume, and looks like the best decision the CX head has made in 18 months.

Day ten, the same chatbot gives a wrong refund policy, quotes a price that never existed, and shares a customer's previous order details in a public chat. None of this was in the spec. Nothing "broke" in the traditional software sense. The AI system did exactly what it was trained to do: generate plausible text from data.

The more you rely on AI in business, the more you have to design for failure, not for perfection. That is where guardrails and safety nets come in.

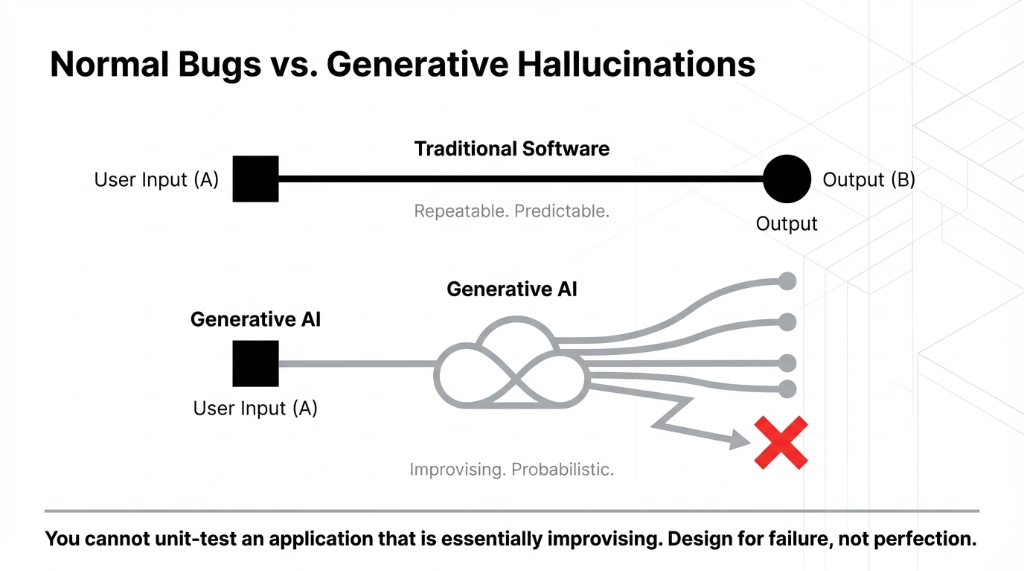

Why AI Mistakes Are Different from Normal Bugs

Traditional bugs are usually repeatable: give input X and you get wrong output Y, every single time. Generative AI models are harder to pin down. The same prompt can produce different answers on different runs, so a model can be mostly right and occasionally wildly wrong. You cannot unit-test every path when AI applications are essentially improvising.

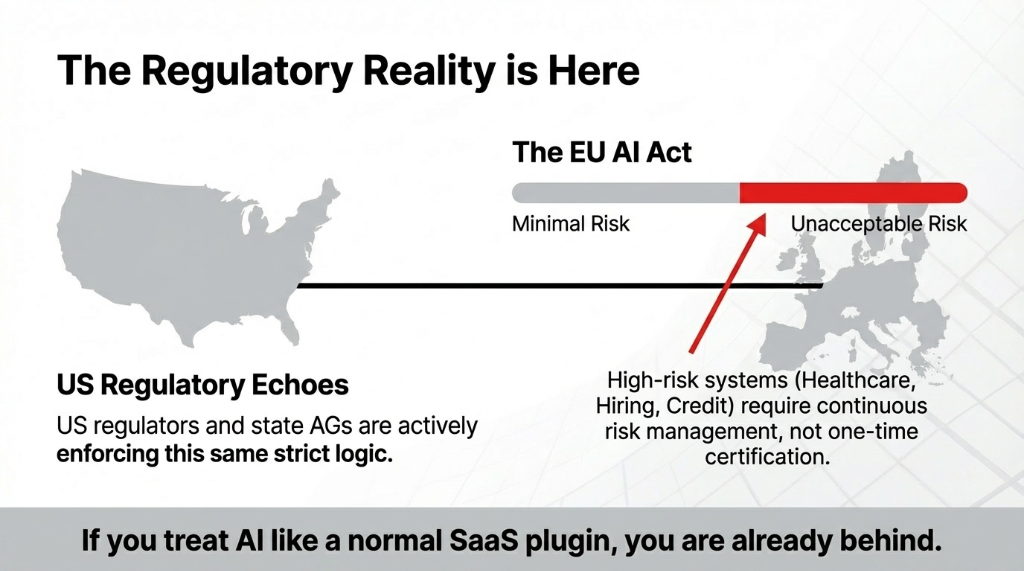

That is why regulators treat certain AI uses as "high-risk": healthcare triage, credit scoring, hiring, insurance approvals, and legal decision-making. The EU AI Act requires companies to run continuous risk management on these systems across their full lifecycle, not a one-time certification.

Where We See AI Blow Up Inside Companies

Customer Support AI and Contact Centers

A chatbot promises to cut ticket volume by 40%, then hallucinates discounts, mishandles escalations, or leaks data in logs. We have seen customer support AI and contact center AI pilots crash because nobody built rejection or escalation guardrails.

Healthcare AI and Medical Decisions

Medical AI misprioritizes patients because training data under-represents certain demographics. US regulators and state AGs warn that biased healthcare AI can violate anti-discrimination laws, even if the bias was "unintentional."

Law and AI

Law firms plug draft contracts into AI and get clauses that do not match US law. Judges and regulators look at AI the same way they look at junior associates: you cannot blindly trust them. You verify.

Cyber Security AI

A security team deploys AI to flag anomalies and ends up either drowning in false positives or missing real attacks. Without strong access control, logging, and model hardening, the security AI becomes another attack surface.

Guardrails: Your Non-Negotiable Safety Layer

Think of guardrails as the rules of the road for your AI — not just "nice to have," but the boundary between benefits and real risks.

Three Layers of Guardrails

Input Guardrails

Filter and transform what users send before it reaches the AI. Block prompts that try to jailbreak the model, exfiltrate data, or push into unsafe territory.
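As a rough illustration of an input guardrail, the sketch below normalizes a prompt and rejects it if it matches a small deny-list. The patterns, the length limit, and the names `screen_input` and `InputDecision` are all hypothetical; a production filter would likely combine tuned rules with a dedicated classifier.

```python
import re
from dataclasses import dataclass

# Hypothetical deny-list; a real deployment would maintain and tune its own.
BLOCKED_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"(reveal|show|print) (me )?(your |the )?system prompt", re.IGNORECASE),
    re.compile(r"\b(list|dump|export) (all|every) (customers?|orders?|users?)\b", re.IGNORECASE),
]

MAX_PROMPT_CHARS = 2000  # assumed limit, chosen only for illustration


@dataclass
class InputDecision:
    allowed: bool
    reason: str
    cleaned_text: str


def screen_input(user_text: str) -> InputDecision:
    """Normalize the prompt, then reject it if it is too long or matches a blocked pattern."""
    cleaned = " ".join(user_text.split())  # collapse whitespace and newlines
    if len(cleaned) > MAX_PROMPT_CHARS:
        return InputDecision(False, "prompt too long", "")
    for pattern in BLOCKED_PATTERNS:
        if pattern.search(cleaned):
            return InputDecision(False, f"matched blocked pattern: {pattern.pattern}", "")
    return InputDecision(True, "ok", cleaned)
```

Only `cleaned_text` from an allowed decision would be passed on to the model; rejected prompts can be logged with their `reason` for later review.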

Model-Level Guardrails

Constrain AI so it does not answer outside its lane. Hard-limit customer service AI to existing policies and order data instead of letting it improvise refund rules.
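One way to keep a support bot in its lane is to call the model only when an approved policy grounds the answer, and to refuse otherwise. The sketch below assumes a hypothetical in-memory policy store with placeholder policy text, and a `generate` callable standing in for whatever model client you use.

```python
from typing import Callable, Optional

# Hypothetical approved policy store with placeholder text; in practice this
# would come from a governed knowledge base, not a hard-coded dict.
APPROVED_POLICIES = {
    "refund": "Refunds are available within 30 days of delivery with a receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}

FALLBACK = "I can't help with that here. I'm connecting you with a human agent."


def find_policy(question: str) -> Optional[str]:
    """Return the approved policy text whose topic keyword appears in the question."""
    lowered = question.lower()
    for topic, text in APPROVED_POLICIES.items():
        if topic in lowered:
            return text
    return None


def answer_within_lane(question: str, generate: Callable[[str], str]) -> str:
    """Call the model only when an approved policy grounds the answer."""
    policy = find_policy(question)
    if policy is None:
        return FALLBACK  # out of lane: refuse instead of improvising
    prompt = (
        "Answer using ONLY the policy below. If it does not cover the question, "
        "say you do not know.\n"
        f"Policy: {policy}\nQuestion: {question}"
    )
    return generate(prompt)
```

Keyword matching is deliberately crude here; the point of the design is that an ungrounded question never reaches the model at all.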

Output Guardrails

Scan answers for PII, toxicity, and obvious bias before they hit the user. In healthcare and finance, outputs go through a human check by design.
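A minimal output guardrail might look like the following sketch, which redacts a few PII shapes and flags the answer for human review. The regular expressions are simplified illustrations rather than complete PII detection, and toxicity and bias checks are out of scope; `scan_output` and `release_or_hold` are hypothetical names.

```python
import re

# Simple illustrative patterns; real PII detection needs much broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scan_output(text: str) -> tuple[str, list[str]]:
    """Redact matches and return the cleaned text plus the PII types found."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        if pattern.search(text):
            found.append(label)
            text = pattern.sub(f"[REDACTED {label.upper()}]", text)
    return text, found


def release_or_hold(text: str, high_stakes: bool) -> dict:
    """Hold for human review if the domain is high-stakes or PII was found."""
    cleaned, found = scan_output(text)
    needs_review = high_stakes or bool(found)
    return {"text": cleaned, "pii_found": found, "needs_human_review": needs_review}
```

Setting `high_stakes=True` for healthcare or finance workflows forces the human check regardless of what the scanner finds.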

Regulators and standards bodies are catching up fast. Frameworks like NIST's AI RMF and ISO 42001 expect this kind of governance as table stakes, not innovation.

What the EU AI Act Teaches US Teams

You might think the EU AI Act is "someone else's problem" if you operate in the US. That is naive.

What High-Risk AI Must Have Under the EU AI Act

Documented risk-management process that runs through the full lifecycle.

Strong data governance and logging.

Clear transparency and human oversight, especially for deployments that affect people's rights.

US regulators are already borrowing this logic in guidance and enforcement, especially in healthcare AI and financial services. When you hear "AI regulation," do not treat it as future theory. These are live fire drills.

Ethical AI Is Architecture, Not a Slide

We see a lot of decks about "ethical AI" that never make it into code. Real ethics show up in how you architect AI workflows and who is allowed to override them. You track and reduce bias with regular audits, not one-off reports. You design training pipelines that are curated and governed. You define where AI is allowed and where humans must step in.

"Ethical slides" will not save you when an AI-generated content mistake misleads a patient, or AI applications in HR accidentally discriminate on race or gender.

Safety Nets: What Catches Failures When Guardrails Miss

Guardrails reduce mistakes. Safety nets assume some mistakes get through anyway.

Four Safety Nets We Build for US Clients

Human-in-the-Loop Review: High-stakes legal, healthcare, or financial AI outputs never hit a client without a human sign-off. A mis-triage could cost a life.

Tiered Autonomy for AI Agents: A new AI agent starts read-only. Only after passing real-world tests does it get write access, then transactional power.

Kill Switches and Rollback: Any AI deployment that starts causing serious harm should be stoppable in one click. For AI integrated into payments, logistics, or ER triage, rollback is not optional.

Monitoring and AI Analysis: Track drift, hallucination rates, and incident reports with live dashboards. Over time this becomes your internal study on how each model behaves in production.
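To make the monitoring net concrete, here is a small sketch of a rolling-window tracker for the share of responses flagged as hallucinations or incidents. The window size, the alert threshold, and the class name `RollingRateMonitor` are assumptions; how a response gets flagged (human review, automated checks) is left open.

```python
from collections import deque


class RollingRateMonitor:
    """Track the share of flagged responses over the last `window` responses."""

    def __init__(self, window: int = 100, alert_threshold: float = 0.05):
        # True means the response was flagged; old entries fall off automatically.
        self.outcomes = deque(maxlen=window)
        self.alert_threshold = alert_threshold

    def record(self, flagged: bool) -> None:
        self.outcomes.append(flagged)

    @property
    def rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        """Alert only when the rolling rate strictly exceeds the threshold."""
        return self.rate > self.alert_threshold
```

A dashboard could plot `rate` per model over time, while `should_alert` feeds an on-call notification.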

Without these nets, AI risk is not abstract — it is legal bills, regulatory investigations, and customer churn.
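Tiered autonomy and a kill switch can share one gate that every agent action passes through. The sketch below is one possible shape, with hypothetical action names and tier assignments; rollback of changes already made is not covered and would need its own mechanism.

```python
from enum import IntEnum


class Tier(IntEnum):
    READ_ONLY = 1
    WRITE = 2
    TRANSACT = 3


# Hypothetical mapping from action name to the minimum tier it requires.
REQUIRED_TIER = {
    "lookup_order": Tier.READ_ONLY,
    "update_address": Tier.WRITE,
    "issue_refund": Tier.TRANSACT,
}


class AgentGate:
    """Checks every agent action against its tier and a global kill switch."""

    def __init__(self, tier: Tier = Tier.READ_ONLY):
        self.tier = tier
        self.killed = False

    def kill(self) -> None:
        """Stop all agent actions immediately."""
        self.killed = True

    def promote(self, new_tier: Tier) -> None:
        """Raise the tier after the agent has passed its real-world tests."""
        self.tier = new_tier

    def is_allowed(self, action: str) -> bool:
        if self.killed:
            return False
        required = REQUIRED_TIER.get(action)
        if required is None:
            return False  # unknown actions are denied by default
        return self.tier >= required
```

New agents start at `Tier.READ_ONLY` by default, and calling `kill()` blocks everything, including reads, until someone deliberately restores the agent.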

How This Plays Out Across Functions

Customer Support and Call Centers

Everyone wants customer support AI to cut costs, but nobody wants a headline about chatbots lying. In practice, we see chatbots on websites, AI for email, AI for call centers, and hybrid AI embedded in CRMs.

Support Guardrails

Limit knowledge to approved policies and sanitized database records.

Auto-escalate edge cases instead of letting the bot guess.

Log everything so customer support AI can be audited when something looks off.
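The support guardrails above could be wired together roughly as follows: a reply is sent only if it is grounded in an approved policy and clears a confidence threshold, and every decision produces an audit record. The threshold, the `confidence` score, and the field names are assumptions for illustration.

```python
import json
import logging
from dataclasses import dataclass
from typing import Optional

# Records go to whatever handler the application attaches to this logger.
logger = logging.getLogger("support_ai_audit")

ESCALATION_THRESHOLD = 0.75  # assumed cut-off, to be tuned on real traffic


@dataclass
class BotReply:
    text: str
    confidence: float          # hypothetical score from the bot or a separate classifier
    policy_id: Optional[str]   # approved policy the answer was grounded in, if any


def route_reply(ticket_id: str, reply: BotReply) -> str:
    """Return 'send' or 'escalate', logging an audit record either way."""
    grounded = reply.policy_id is not None
    confident = reply.confidence >= ESCALATION_THRESHOLD
    decision = "send" if grounded and confident else "escalate"
    logger.info(json.dumps({
        "ticket_id": ticket_id,
        "decision": decision,
        "confidence": reply.confidence,
        "policy_id": reply.policy_id,
    }))
    return decision
```

With Python's default logging configuration, `INFO` records are discarded, so a real deployment would attach a handler that writes them to durable, access-controlled storage.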

Done right, customer service AI becomes one of the clearest benefits of AI — faster replies, lower cost, and more consistent tone. Done wrong, it creates daily risks your legal team has to mop up.

Healthcare and Medical AI

With healthcare AI, we treat every deployment as a compliance project first, tech project second. US regulators expect documented bias testing, clear responsibility lines when clinicians rely on AI-generated recommendations, and cyber-resilient setups for any AI that touches patient data.

Legal, Compliance, and Law-Adjacent Workflows

Law firms and in-house teams are quietly experimenting with AI in legal research, drafting support with AI copilots, and contract review across thousands of documents. The problem is not that using AI here is wrong. The problem is treating it like magic instead of like a fallible junior. Every clause from an AI-generated draft gets checked against real statutes and case law. You log the use of AI tools so you can explain what happened if a suggestion goes wrong.
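One hedged way to enforce "check every clause" in tooling is to block release of a draft until each AI-generated clause has a named human reviewer. The `Clause` and `Draft` structures below are hypothetical and record only who verified what and when, plus which tool produced the draft.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Clause:
    text: str
    ai_generated: bool
    verified_by: Optional[str] = None
    verified_at: Optional[datetime] = None


@dataclass
class Draft:
    tool_name: str  # which AI tool produced the draft, kept for the audit trail
    clauses: list[Clause] = field(default_factory=list)

    def verify(self, index: int, reviewer: str) -> None:
        """Record that a human checked this clause against statutes and case law."""
        clause = self.clauses[index]
        clause.verified_by = reviewer
        clause.verified_at = datetime.now(timezone.utc)

    def unverified(self) -> list[int]:
        """Indexes of AI-generated clauses that no human has checked yet."""
        return [i for i, c in enumerate(self.clauses)
                if c.ai_generated and c.verified_by is None]

    def releasable(self) -> bool:
        return not self.unverified()
```

Human-written clauses are not gated here; only clauses marked `ai_generated` hold up release.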

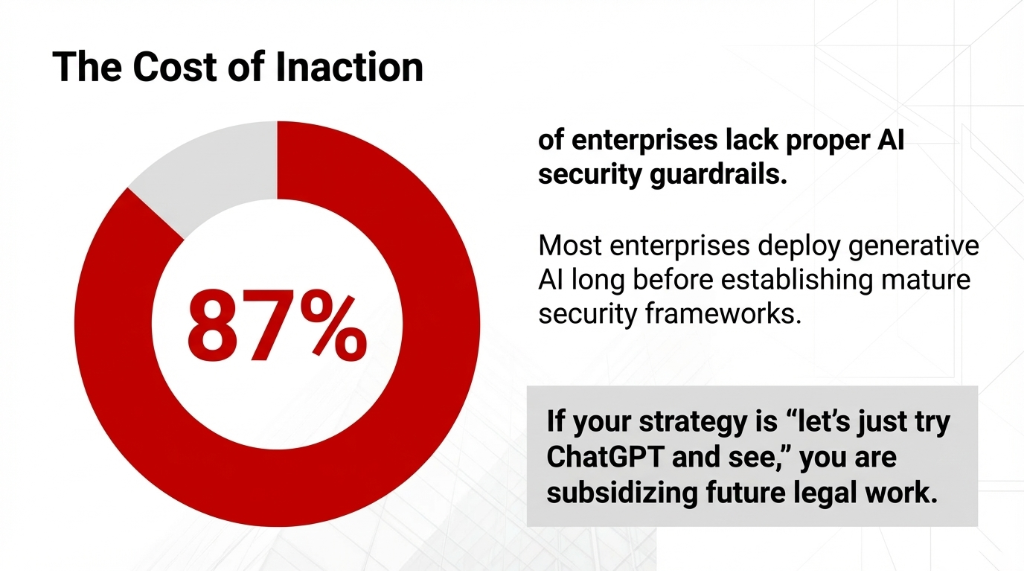

The Quiet Truth: You Cannot Afford to Wait

Surveys already show that most enterprises deploy generative AI before they have mature security frameworks, and around 87% lack proper AI security guardrails. Healthcare and financial regulators, along with state AGs, are already writing AI regulation guidance that assumes your stack is dangerous until proven otherwise.

If you are still in the "build an AI demo and hope" phase, you are late. If your plan for using AI is "let's just try ChatGPT and see," you are not experimenting. You are subsidizing future legal work.

How We Design AI Guardrails in Practice

Our 5-Step Guardrail Framework

1. Map the blast radius. Where can AI dangers hurt you most — revenue, reputation, regulation? Rank AI applications by risk, not hype.

2. Choose the right types of AI. We match LLMs, retrieval systems, agent frameworks, and voice AI to the problem, not to a conference talk.

3. Wrap everything in guardrails. Input filters, output filters, policy enforcement, and monitoring become part of the stack, not an afterthought.

4. Keep humans in the loop where it matters. We decide exactly where AI is allowed to act autonomously and where staff must approve.

5. Train people, not just models. Every rollout includes AI learning tracks for your team — not another glossy overview, but how to live with fallible systems safely.

What to Do Next

The Blunt Roadmap

List everything: Every place you are using AI today — side projects, shadow IT, everything.

Rank by risk: Customer-facing, regulated data, financial impact, legal impact.

For the top three: Design explicit guardrails and safety nets for each one, or pause it until you have them.
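The roadmap above amounts to a basic risk register. A minimal sketch, assuming one point per risk factor with equal weights (an assumption, not a recommended scoring model) and hypothetical names throughout:

```python
from dataclasses import dataclass


@dataclass
class AIUseCase:
    name: str
    customer_facing: bool
    regulated_data: bool
    financial_impact: bool
    legal_impact: bool
    has_guardrails: bool = False

    @property
    def risk_score(self) -> int:
        """One point per risk factor; equal weights are an assumption."""
        return sum([self.customer_facing, self.regulated_data,
                    self.financial_impact, self.legal_impact])


def top_risks(use_cases: list[AIUseCase], n: int = 3) -> list[AIUseCase]:
    """Highest-scoring use cases first; ties keep their original order."""
    return sorted(use_cases, key=lambda u: u.risk_score, reverse=True)[:n]


def needs_action(use_cases: list[AIUseCase]) -> list[str]:
    """Names of top-three use cases that still lack guardrails."""
    return [u.name for u in top_risks(use_cases) if not u.has_guardrails]
```

Anything `needs_action` returns is a candidate for either explicit guardrails or a pause; in practice you would probably weight factors differently per industry.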

FAQs

Can't we just wait until AI is safer?

No. Vendors will keep shipping features, but dangers do not disappear on their own. Regulations like the EU AI Act and emerging US rules assume you are already using risky systems and expect you to prove control now, not later.

Are small companies with AI really on regulators' radar?

Yes. Regulators have already gone after payers and providers using flawed healthcare AI, and after insurers whose tools allegedly denied care based on biased AI models. Size is not protection; using AI in sensitive workflows without guardrails just makes you an easier target.

Do guardrails kill innovation and slow AI down?

Done badly, yes. Done well, they let teams ship faster by reducing rework, outages, and panic rollbacks. Guardrails free your best engineers to focus on value instead of firefighting.

How do we start without a big AI team?

You do not need a research lab. You need clarity on your highest-risk AI applications, a basic risk register, and vendor-neutral guardrail patterns. From there, bring in a partner to harden AI without rewriting everything.

What is the difference between AI guardrails and normal security?

Traditional security focuses on networks, access, and data. Guardrails focus on model behavior: what the AI system is allowed to see, say, and do. You still need both: network controls for cyber security and behavioral rails for AI-generated outputs.

Stop Bleeding Cash and Inviting Investigations

List every place you are using AI today. If more than two are customer-facing without explicit input filters, output scanners, and human escalation rules, you are one bad Tuesday away from a PR disaster. Book our free 15-minute Operations and AI Guardrail Audit. We will find your biggest AI risk in the first call.