Quick Answer

An AI Agent SLA is a legally binding service agreement covering infrastructure uptime, task accuracy, incident response timelines, data management integrity, and business outcomes, not just whether the server is green. Most AI companies cover infrastructure uptime and skip the service quality and business outcome layers. We don't. Every Braincuber deployment ships with a 3-layer SLA and 7 non-negotiable post-deployment guarantees, or we don't sign the contract.

Why Your Vendor's "We've Got You" Is Not a Service Agreement

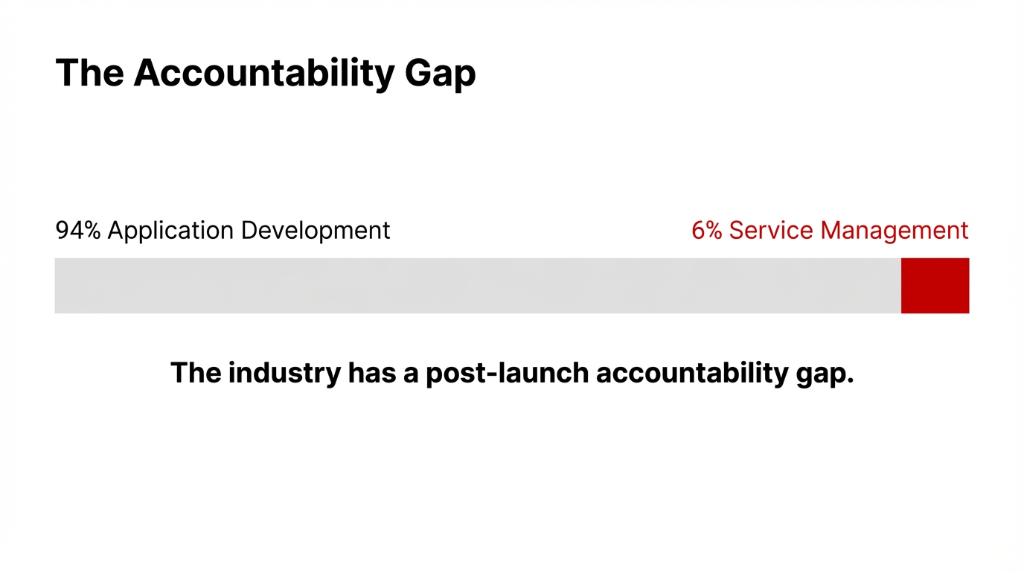

The AI automation industry has a deployment problem. Not a technology problem. Not an AI modeling problem. A post-launch accountability gap.

Most AI companies spend 94% of the project budget on application development and 6% (or zero) on post-deployment service management. The SLA gets two paragraphs buried in a Master Services Agreement, covering only infrastructure uptime and leaving accuracy drift, data management failures, and workflow management breakdowns completely unaddressed.

Here's what an SLA means in a real AI deployment context. It is not just uptime.

When Your Customer Interaction Management System Handles 8,000 Tickets a Day

Your AI models can be technically "running" while producing wrong outputs 23% of the time. Your workflow management software processes the queue. The customer service management system logs green on every dashboard. And your NPS score drops 14.7 points over 60 days because the AI is resolving tickets wrong, at scale, without anyone catching it.

That's an accuracy SLA failure. Without a written service agreement, your vendor owes you nothing.

The 3-Layer SLA Framework Every AI Deployment Needs

Traditional IT service management was built for predictable systems. Servers are binary: up or down. AI is not. That's why at Braincuber, every deployment ships with three accountability layers baked into the service level agreement, not just one.

3-Layer AI Accountability Model

Layer 1: Infrastructure SLA

Is the AI platform itself available?

Target: 99.9% uptime minimum, measured against your environment — not platform-wide averages. Azure publishes 99% latency guarantees for provisioned OpenAI endpoints. We hold our infrastructure to a harder standard than the cloud beneath us.

Layer 2: Service Quality SLA

Is the AI making correct decisions?

Task accuracy rates, hallucination rate thresholds, and intelligent document processing error floors. Professional-tier: 98%+ task success rate across all business process management workflows.

Layer 3: Business Outcome SLA

Is the AI delivering what you paid for?

Measurable reduction in cost per customer interaction, reduction in human escalation rate, and quantifiable improvement in the customer journey. This is the layer that 91% of AI companies never put in writing.

Most vendors give you Layer 1 and call it a complete SLA. We give you all three, or we don't sign the contract. That's how we handle AI solutions at Braincuber.

What We Put in Writing: The 7 Braincuber Post-Deployment Guarantees

Across 500+ projects in 4+ years of deploying intelligent automation for enterprises scaling from $2M to $15M ARR, we've standardized our post-deployment commitments into seven non-negotiable clauses.

Guarantee 1: Uptime by Tier — With Financial Penalties

| Tier | Uptime | Task Accuracy | Response Time | Support |
|---|---|---|---|---|
| Standard | 99.5% | 95%+ | < 10 seconds | Business Hours |
| Professional | 99.9% | 98%+ | < 5 seconds | 24/7, 4-hr P1 |
| Enterprise | 99.99% | 99.5%+ | < 2 seconds | 24/7, 15-min P1 |
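To make the uptime column concrete, here is a minimal Python sketch that converts each tier's uptime target into a downtime budget. The function and constant names are illustrative, and the 30-day measurement period is an assumption; your contract may define the period differently.

```python
# Sketch only: names are illustrative, not part of any Braincuber tooling.
MINUTES_PER_PERIOD = 30 * 24 * 60  # assumes a 30-day measurement period (43,200 minutes)

# Uptime targets copied from the tier table above.
UPTIME_TARGETS = {"Standard": 99.5, "Professional": 99.9, "Enterprise": 99.99}


def allowed_downtime_minutes(uptime_percent: float,
                             period_minutes: int = MINUTES_PER_PERIOD) -> float:
    """Return the downtime budget implied by an uptime target."""
    return period_minutes * (100.0 - uptime_percent) / 100.0


def uptime_target_met(downtime_minutes: float, uptime_percent: float) -> bool:
    """True if observed downtime stays within the budget for the period."""
    return downtime_minutes <= allowed_downtime_minutes(uptime_percent)


if __name__ == "__main__":
    for tier, target in UPTIME_TARGETS.items():
        budget = allowed_downtime_minutes(target)
        print(f"{tier}: {budget:.2f} minutes of downtime per 30 days")
```

Under that 30-day assumption, the budgets work out to about 216 minutes for Standard, 43.2 minutes for Professional, and 4.32 minutes for Enterprise. That gap is why a single tier for every workload rarely fits.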

Red Flag Alert

If an AI company offers you a single SLA tier for "all AI workloads," walk away. A customer service AI agent handling billing disputes and a robotic process automation workflow processing 50,000 invoices daily have entirely different risk profiles. Pricing them identically is a tell.

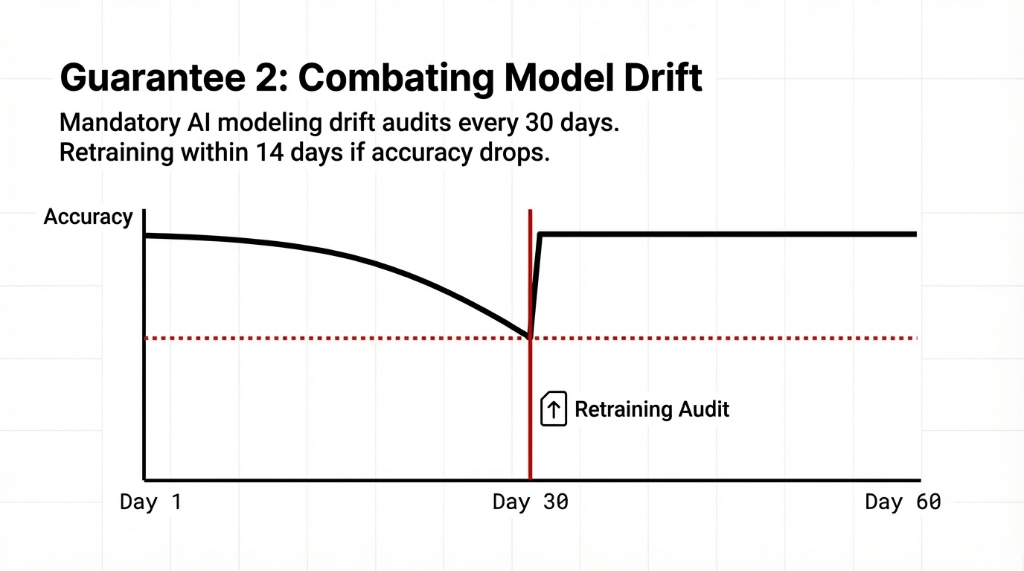

Guarantee 2: AI Model Accuracy Drift Monitoring — Every 30 Days, Mandatory

Here's what nobody tells you about AI and automation: your AI models degrade over time as your business data shifts. We run mandatory model drift audits every 30 days. If accuracy drops below the threshold in your service agreement, we retrain at no additional cost within 14 business days. Not "soon," not "on the next sprint."

Proactive monitoring software can now predict potential SLA breaches 48-72 hours in advance by detecting subtle performance degradation patterns before an actual violation occurs. We use this capability in every Professional and Enterprise tier deployment.
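As a rough illustration of the audit rule above, the sketch below compares measured accuracy on an audit sample against the contractual threshold and, on a miss, computes the 14-business-day retraining deadline. All names are hypothetical, and the business-day logic is an assumption that skips weekends only and ignores public holidays.

```python
from datetime import date, timedelta

RETRAIN_WINDOW_BUSINESS_DAYS = 14  # from the guarantee above


def add_business_days(start: date, business_days: int) -> date:
    """Advance by N weekdays, skipping Saturdays and Sundays (holidays ignored)."""
    current = start
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


def audit_drift(correct: int, total: int, accuracy_threshold: float, audit_date: date):
    """Return (accuracy, retrain_deadline); the deadline is None when within threshold."""
    if total <= 0:
        raise ValueError("audit sample must contain at least one task")
    accuracy = correct / total
    if accuracy < accuracy_threshold:
        return accuracy, add_business_days(audit_date, RETRAIN_WINDOW_BUSINESS_DAYS)
    return accuracy, None
```

For example, a Professional-tier audit on Monday, 1 January 2024 that finds 970 correct tasks out of 1,000 (97% against a 98% threshold) would yield a retraining deadline of Friday, 19 January 2024.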

Guarantee 3: Data Management Integrity

Your customer resource management software, enterprise content management system, and document management systems are only as reliable as the data pipeline connecting them to the AI layer. We guarantee zero data loss between your customer relations management software and all AI-connected endpoints. Every document management software integration gets tested end-to-end before it touches production.

Guarantee 4: Incident Response SLA — Tiered by Severity

P1 (system down): 15-minute response, 4-hour resolution target

P2 (degraded performance): 1-hour acknowledgment, 24-hour resolution

P3 (non-critical): 4-hour acknowledgment, 5-business-day resolution

And a case management system auto-generates the ticket the moment the monitoring software fires an alert — not when someone notices it in a morning standup. That's process management done right.
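The severity targets above can be sketched as a lookup table that stamps deadlines onto a ticket the moment an alert fires. This is an illustrative outline, not a real case management API: `open_ticket` and `SEVERITY_TARGETS` are hypothetical names, the P1 "response" is treated as an acknowledgment deadline, and business days skip weekends only.

```python
from datetime import datetime, timedelta

# Targets copied from the severity list above.
# Resolution is either a calendar duration or a count of business days.
SEVERITY_TARGETS = {
    "P1": {"acknowledge": timedelta(minutes=15), "resolve": timedelta(hours=4)},
    "P2": {"acknowledge": timedelta(hours=1), "resolve": timedelta(hours=24)},
    "P3": {"acknowledge": timedelta(hours=4), "resolve_business_days": 5},
}


def add_business_days(start: datetime, business_days: int) -> datetime:
    """Advance by N weekdays, skipping Saturdays and Sundays (holidays ignored)."""
    current = start
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


def open_ticket(severity: str, alert_time: datetime) -> dict:
    """Build a ticket record with acknowledgment and resolution deadlines."""
    targets = SEVERITY_TARGETS[severity]  # raises KeyError for unknown severities
    if "resolve" in targets:
        resolve_by = alert_time + targets["resolve"]
    else:
        resolve_by = add_business_days(alert_time, targets["resolve_business_days"])
    return {
        "severity": severity,
        "opened_at": alert_time,
        "acknowledge_by": alert_time + targets["acknowledge"],
        "resolve_by": resolve_by,
    }
```

Calling `open_ticket("P1", datetime(2024, 1, 5, 9, 0))` gives an acknowledgment deadline of 09:15 and a resolution target of 13:00 the same day. Because the deadlines are computed from the alert timestamp, the clock starts when monitoring fires, not when a person files the ticket.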

Guarantee 5: Workflow Automation Continuity

Your business process management workflows don't stop when an AI agent needs retraining. Every AI workflow automation deployment at Braincuber includes parallel fallback paths, so process automation doesn't create operational black holes while we resolve issues. That's how our AI development services protect your revenue stream.
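A fallback path can be as simple as routing work to a secondary handler when the AI path fails. The sketch below is a deliberately simplified, sequential version of that idea. Every name in it is hypothetical, and a production setup would also log the failure and raise an alert.

```python
from typing import Callable, Tuple


def run_with_fallback(task: str,
                      primary: Callable[[str], str],
                      fallback: Callable[[str], str]) -> Tuple[str, str]:
    """Try the AI path first; on any failure, route the task to the fallback path."""
    try:
        return primary(task), "primary"
    except Exception:
        # Assumption: any exception from the AI path means it cannot serve this task.
        return fallback(task), "fallback"


def ai_agent_unavailable(task: str) -> str:
    """Stand-in for an AI agent that is offline, for example during retraining."""
    raise RuntimeError("model is being retrained")


def manual_queue(task: str) -> str:
    """Stand-in for a human review queue."""
    return f"queued for human review: {task}"


if __name__ == "__main__":
    print(run_with_fallback("invoice-42", ai_agent_unavailable, manual_queue))
```

The second element of the returned tuple records which path handled the task, which makes it possible to report how often the fallback was used during an incident.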

Guarantee 6: Third-Party Integration Scope Definition

Your customer interaction management software likely integrates with Salesforce, HubSpot, Zendesk, or ServiceNow. We clearly document the boundary of our SLA responsibility in writing — covering what we own versus what the upstream platform owns — and we activate documented fallback workflows when third-party endpoints breach their own SLAs. Your customer experience management doesn't degrade because Salesforce had a bad API day.

Guarantee 7: Monthly AI Governance Report — Live, Not a PDF

Every 30 days: model performance breakdown, SLA compliance rate, drift indicators, data science anomaly log, and recommended optimizations. It feeds directly into your IT service management software and your workflow management system, not into an email nobody opens. That's AI management done differently.

The Metric Your Vendor Doesn't Measure (But Your Customers Feel)

Hitting uptime numbers doesn't mean your customers had a good experience. This is the part that separates AI in customer experience from AI for customer experience.

Real Failure We Witnessed

A US-based financial services firm ran a competitor's customer experience management platform at 99.8% uptime for 11 months. Beautiful compliance reports. But their customer experience scores dropped 18.3% because the AI was technically resolving tickets while giving factually wrong answers.

A service quality failure that no infrastructure SLA was built to catch.

Uptime compliance and experience-level reliability are not the same number.

That's why our monitoring software tracks sentiment shifts inside every customer service platform deployment in real time. If negative sentiment spikes more than 12% above baseline during any 48-hour window, an automated review triggers before a human notices. This is where intelligent automation diverges from generic AI and automation tooling, and it's the part most enterprise deployment checklists still ignore.
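One way to picture the sentiment trigger is a rolling 48-hour window over hourly ticket counts. The sketch below rests on two assumptions not stated in the text: "12% above baseline" is read as a relative increase (a 10% baseline negative rate trips at anything above 11.2%), and the input arrives as hourly `(negative, total)` counts. The function name is illustrative.

```python
from collections import deque
from typing import Iterable, List, Tuple


def find_sentiment_breaches(hourly_counts: Iterable[Tuple[int, int]],
                            baseline_negative_rate: float,
                            window_hours: int = 48,
                            relative_spike: float = 0.12) -> List[int]:
    """Return the hour indexes at which the rolling negative rate exceeds the threshold.

    hourly_counts yields (negative_interactions, total_interactions) per hour.
    """
    threshold = baseline_negative_rate * (1 + relative_spike)
    window = deque()
    negative_sum = 0
    total_sum = 0
    breaches = []
    for hour_index, (negative, total) in enumerate(hourly_counts):
        window.append((negative, total))
        negative_sum += negative
        total_sum += total
        if len(window) > window_hours:
            # Drop the hour that just fell out of the window.
            old_negative, old_total = window.popleft()
            negative_sum -= old_negative
            total_sum -= old_total
        # Only evaluate once a full window of data is available.
        if len(window) == window_hours and total_sum > 0:
            if negative_sum / total_sum > threshold:
                breaches.append(hour_index)
    return breaches
```

With a 10% baseline and 100 interactions per hour, 48 calm hours followed by hours at a 20% negative rate first trip the check at hour index 53, the sixth elevated hour. A shorter window would react faster at the cost of more false alarms.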

What Happens When We Breach an SLA (Because We're Going to Be Honest)

We have breached SLAs. Every AI company that tells you they haven't is lying or hasn't been in production long enough.

Here's our protocol when it happens:

Post-Breach Protocol

Step 1: Auto-Credits

Service credits apply automatically based on breach severity. P1 breaches trigger credits the moment the incident resolves. No claims process.

Step 2: Root Cause (72h)

Within 72 hours, you receive a root cause analysis covering what broke, why it wasn't caught earlier, and what's changing in the monitoring layer. Not a template. A specific technical answer.

Step 3: Downstream Audit

We re-run your AI data analytics baseline after every P1 incident to confirm whether any downstream data management layer was quietly corrupted. Most vendors patch the visible break and leave. We check whether anything bled into your customer communication management software or records management software layers.

The Real Cost of Deploying AI Without an SLA

Gartner estimates 85% of AI projects fail to reach production. Among those that do, 61% underperform their original business case within 12 months. The pattern is the same every time: the budget went into AI application development and deployment. Nothing went into post-deployment AI management, lifecycle management, or service management software infrastructure.

Without SLA Structures, You're Not Running AI in Company Operations

Without SLA structures covering accuracy, data integrity, customer experience quality, and incident response — you're running an unsupervised experiment that costs $200,000 a year in engineering time to maintain manually.

AI for companies only generates ROI when somebody is contractually accountable for what happens after launch day.

That's the entire architecture of how we deliver customer service automation, AI for customer experience workflows, and business process automation for our clients through our AI ecommerce solutions. We don't throw the model over the fence and move on to the next implementation. We own the outcome.

5 FAQs About AI Agent SLAs

What does SLA mean in AI agent deployments?

A formal service agreement covering infrastructure uptime, task accuracy, incident response timelines, and data integrity. Unlike traditional IT SLAs, AI SLA management must measure model accuracy drift, hallucination rates, and customer experience outcomes, because an AI model can be running while producing wrong outputs at scale.

How often should AI models be retrained under a proper SLA?

Models should be audited for drift at least every 30 days. For high-volume customer service AI or customer interaction management deployments, continuous monitoring with automated alerts is the standard. If accuracy drops below the agreed threshold, retraining should be contractually required within 14 business days at no additional cost.

Can an SLA cover third-party integrations like Salesforce or Zendesk?

Yes, with clearly defined scope boundaries. The SLA document should specify what the AI provider owns versus the upstream platform. A properly structured service level agreement activates fallback workflows automatically when third-party APIs fail, so your customer relations management system doesn't drop data.

What metrics should an AI SLA track beyond uptime?

Three layers: infrastructure SLIs (uptime, latency, capacity), service quality SLIs (accuracy, drift, hallucination rate), and outcome SLIs (task completion rate, customer experience scores, cost-per-interaction reduction). Measure from metal to margin, not just whether the server is green.

What financial penalties should be in an AI service agreement?

P1 breach service credits should kick in automatically, with no claims process. P2 degraded performance triggers prorated credits and a mandatory root cause analysis within 72 hours. Any AI company that doesn't put financial accountability in writing is telling you how seriously they take post-deployment performance.

Stop Running AI Without a Net

If your current AI deployment doesn't have a signed service level agreement covering accuracy, uptime, incident response, and data management — you are one bad model update away from a $40,000+ customer churn problem. We've fixed exactly this situation for 47 US enterprises in the last 18 months.

Free audit. No obligation. We'll review your deployment, identify SLA gaps, and tell you what's at risk — before it costs you.