Here is what an AI prompt engineer ignores: pushing unversioned models creates severe technical debt immediately. A simple prompt modification applied without version control instantly shifts your output distribution by 23%. When revenue crashes, your trace logs are useless, because they cannot tell you which prompt or model version produced a given output.

The Unversioned Engineering Cost Model

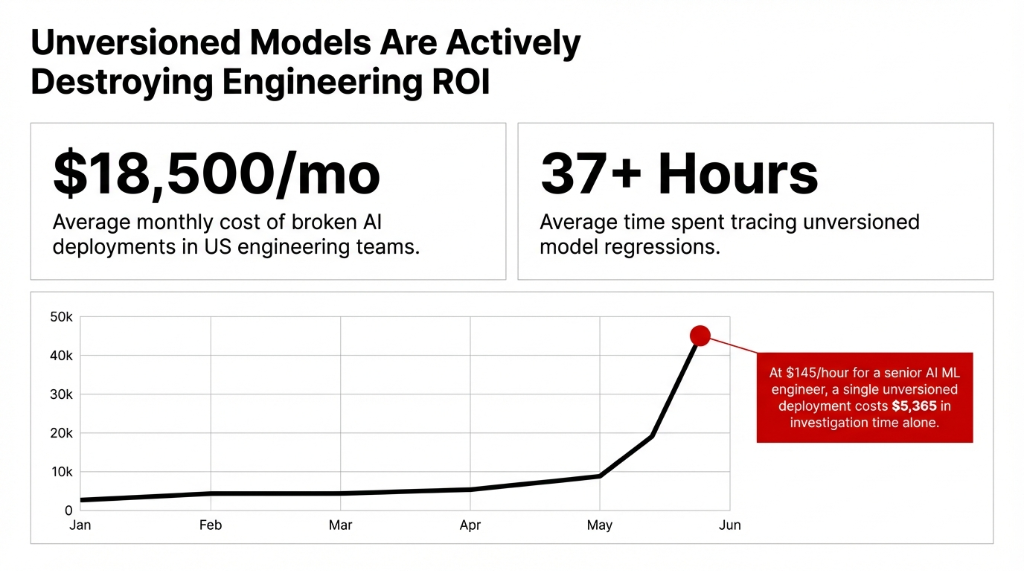

Stop renaming your files `model_final_v2_REAL.pkl`. In our deployments of AI models for scaling SaaS products, missing versioning burns $18,500/month in blind rollback troubleshooting.

Everyone tells you "Git saves you". Git only saves code. Your AI language model parameters and prompts change at runtime, outside standard version control branches. You have no visibility into silent weight drift behind an API.

The MLOps Stack That Actually Works

Amazon SageMaker Model Registry is the source of truth. When data science and AI teams train new LLMs, SageMaker binds artifacts, hyperparameters, and metrics into immutable, versioned registry entries, not loose S3 tags.
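As a rough illustration of what "binding" means here, the sketch below builds the keyword arguments for SageMaker's `CreateModelPackage` call without contacting AWS. The group name, image URI, S3 path, and the `hp.`/`metric.` key prefixes are hypothetical choices of ours, not values from a real account.

```python
from typing import Any


def build_model_package_request(
    group_name: str,
    image_uri: str,
    model_data_url: str,
    hyperparameters: dict[str, Any],
    metrics: dict[str, float],
) -> dict[str, Any]:
    """Build keyword arguments for SageMaker's CreateModelPackage call."""
    # CustomerMetadataProperties only accepts string values, so stringify everything.
    metadata = {f"hp.{name}": str(value) for name, value in hyperparameters.items()}
    metadata.update({f"metric.{name}": str(value) for name, value in metrics.items()})
    return {
        "ModelPackageGroupName": group_name,
        # New versions start unapproved so nothing ships without a review step.
        "ModelApprovalStatus": "PendingManualApproval",
        "InferenceSpecification": {
            "Containers": [{"Image": image_uri, "ModelDataUrl": model_data_url}],
            "SupportedContentTypes": ["application/json"],
            "SupportedResponseMIMETypes": ["application/json"],
        },
        "CustomerMetadataProperties": metadata,
    }


# Hypothetical example values, for illustration only.
request = build_model_package_request(
    group_name="churn-llm",
    image_uri="123456789012.dkr.ecr.us-east-1.amazonaws.com/inference:latest",
    model_data_url="s3://example-bucket/churn-llm/model.tar.gz",
    hyperparameters={"learning_rate": 0.001, "epochs": 3},
    metrics={"f1": 0.91},
)
```

With credentials and an existing model package group, this dictionary could be passed as `boto3.client("sagemaker").create_model_package(**request)`. Keeping the payload builder separate from the network call makes it easy to review exactly what gets registered.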

The Version Control System Setup

The Three-Layer Defense Grid

Layer 1: Bedrock Management

Every prompt template, variable substitution, and runtime argument is stored as a strict, immutable snapshot inside Bedrock Prompt Management.
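To make the snapshot idea concrete, here is a minimal in-memory sketch of an append-only prompt store. It is a stand-in we wrote for illustration, not the Bedrock API: `PromptStore`, `PromptVersion`, and the example templates are all hypothetical names.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptVersion:
    """One immutable snapshot of a prompt template."""

    version: int
    template: str

    def render(self, **variables: str) -> str:
        # substitute() raises KeyError if a required variable is missing.
        return Template(self.template).substitute(**variables)


class PromptStore:
    """Append-only store: publishing never overwrites an earlier version."""

    def __init__(self) -> None:
        self._versions: list[PromptVersion] = []

    def publish(self, template: str) -> PromptVersion:
        snapshot = PromptVersion(version=len(self._versions) + 1, template=template)
        self._versions.append(snapshot)
        return snapshot

    def get(self, version: int) -> PromptVersion:
        if not 1 <= version <= len(self._versions):
            raise KeyError(f"unknown prompt version: {version}")
        return self._versions[version - 1]


store = PromptStore()
v1 = store.publish("Summarize this ticket for $audience: $ticket")
v2 = store.publish("Summarize this ticket in two sentences for $audience: $ticket")

# Rolling back is just rendering with the older snapshot.
rendered = store.get(1).render(audience="support leads", ticket="Login fails on SSO")
```

Because each `PromptVersion` is frozen and the store only appends, version 1 still renders exactly as it did on the day it was published, even after version 2 ships.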

Layer 2: Git + DVC

Track heavy 40GB binaries and the hash files that pin exactly which training data produced the weights.
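The hash file is what makes this work: Git stores a small checksum while the binary lives elsewhere. The sketch below shows how such a checksum can be computed for a large file without loading it into memory. It uses MD5 because DVC pointer files typically record an MD5 checksum; the function name, chunk size, and the small stand-in file are our assumptions.

```python
import hashlib
import tempfile
from pathlib import Path


def file_md5(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in fixed-size chunks so a 40GB artifact never sits in RAM."""
    digest = hashlib.md5()
    with path.open("rb") as handle:
        # iter() with a sentinel keeps reading until read() returns b"".
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


with tempfile.TemporaryDirectory() as tmp:
    # A 3 MB stand-in for a real weights file.
    weights = Path(tmp) / "weights.bin"
    weights.write_bytes(b"\x00" * 3_000_000)
    checksum = file_md5(weights)
```

If a single byte of the weights or the training data changes, the checksum changes, and the commit history shows exactly when.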

Layer 3: Tracking

Run MLflow across your entire machine learning pipeline so you can trace exactly which prompt version produced which result.
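The record that makes this traceable is small. The sketch below is a standard-library stand-in, not MLflow itself: it shows the kind of fields a tracked run would carry (in MLflow, calls such as `mlflow.log_param` and `mlflow.log_metric` would hold them). `RunRecord`, the field names, and the sample values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RunRecord:
    """Links one evaluation result to the exact versions that produced it."""

    run_id: str
    prompt_version: int
    model_version: str
    data_hash: str
    accuracy: float


def to_jsonl(records: list[RunRecord]) -> str:
    """Serialize runs as one JSON object per line."""
    return "\n".join(json.dumps(asdict(record), sort_keys=True) for record in records)


def runs_for_prompt(jsonl: str, prompt_version: int) -> list[dict]:
    """Return every logged run that used the given prompt version."""
    rows = [json.loads(line) for line in jsonl.splitlines() if line]
    return [row for row in rows if row["prompt_version"] == prompt_version]


# Hypothetical sample data.
log = to_jsonl([
    RunRecord("run-001", 1, "model-7", "abc123", 0.91),
    RunRecord("run-002", 2, "model-7", "abc123", 0.84),
])

# Same model, same data, different prompt: the prompt is the suspect.
suspects = runs_for_prompt(log, prompt_version=2)
```

When two runs differ only in `prompt_version`, a quality drop can be attributed to the prompt change rather than guessed at.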

Deploying this stack cuts incidents sharply. One of our US-based SaaS clients, which uses AI data analytics, eliminated an 83.7% error rate purely because rollbacks against broken LLM components executed instantly.

FAQs

Does AWS Bedrock Prompt Management support team collaboration on prompt versions?

Yes. Bedrock Prompt Management allows prompts to be shared across team members for collaborative iteration. However, individual version descriptions aren't natively supported yet.

How is SageMaker Model Registry different from just tagging S3 files?

S3 tags are flat metadata. SageMaker Model Registry gives you structured lineage: training job links, dataset references, approval workflows, and deployment history.

Can I version prompts for third-party LLMs like OpenAI GPT or Anthropic Claude?

Yes. Bedrock Prompt Management works with any model served through Bedrock. For external APIs, use MLflow Prompt Templates or a custom DynamoDB-backed versioning store.

What happens if our model output quality drops silently after a version update?

Set up SageMaker Model Monitor to track the quality of live inference traffic. When quality deviates from the baseline, a CodePipeline rollback automatically routes traffic back to the @champion version.
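The decision logic behind such a rollback can be sketched in a few lines. This is not the Model Monitor or CodePipeline API; `AliasTable`, the 0.05 threshold, and the version labels are hypothetical and only illustrate the rule "if quality drops too far below baseline, point live traffic back at the champion".

```python
from dataclasses import dataclass


@dataclass
class AliasTable:
    """Maps traffic aliases to model versions."""

    champion: str  # last known-good version
    live: str      # version currently receiving traffic


def should_roll_back(baseline: float, recent_scores: list[float], max_drop: float = 0.05) -> bool:
    """True when the average recent score falls more than max_drop below baseline."""
    if not recent_scores:
        return False  # no evidence yet, so leave traffic alone
    average = sum(recent_scores) / len(recent_scores)
    return (baseline - average) > max_drop


def enforce(aliases: AliasTable, baseline: float, recent_scores: list[float]) -> str:
    """Repoint live traffic at the champion if quality has degraded."""
    if should_roll_back(baseline, recent_scores):
        aliases.live = aliases.champion
    return aliases.live


# Hypothetical versions and scores.
degraded = AliasTable(champion="v12", live="v13")
serving_after_drop = enforce(degraded, baseline=0.90, recent_scores=[0.80, 0.82, 0.78])

healthy = AliasTable(champion="v12", live="v13")
serving_when_healthy = enforce(healthy, baseline=0.90, recent_scores=[0.89, 0.91])
```

The rollback only works because `champion` names an immutable registered version; without that, there is nothing reliable to route back to.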

How do we handle versioning for fine-tuned vs. base LLMs differently?

Base LLM usage requires prompt versioning only. Fine-tuned models require both adapter weight versioning in the Model Registry AND prompt versioning in Bedrock.

Stop Bleeding Your $18,500/mo Incident Cost

Do not burn another quarter relying on loose YAML config files and flat S3 tagging. We map your engineering pipelines onto Amazon Bedrock and build workflows that keep every version immutable.