We have seen US enterprises lose between $187,000 and $340,000 to wasted sprint cycles, idle SageMaker instances, and misaligned AI investments before a single model ever hit production.

This checklist exists because 89% of executive teams are directly involved in gen AI decisions, yet fewer than 4 in 10 know what to ask before approving the spend.

The Budget Trap Every CTO Walks Into

Here is the ugly truth about AI technology evaluations at the enterprise level: most CTOs start by asking, "Which AWS service is the best AI option?" That is the wrong question.

The right question is: "What specific business outcome am I automating, and what does failure cost per quarter?"

We worked with a $47M SaaS company out of Austin that approved a $312,000 AWS AI budget to "improve customer support." Eleven months later, they had a partially deployed Amazon Lex chatbot answering 23% of tickets, not the 70% they projected. The remaining 77% still routed to human agents. The AI business case evaporated because nobody defined "improve" with a dollar figure up front.

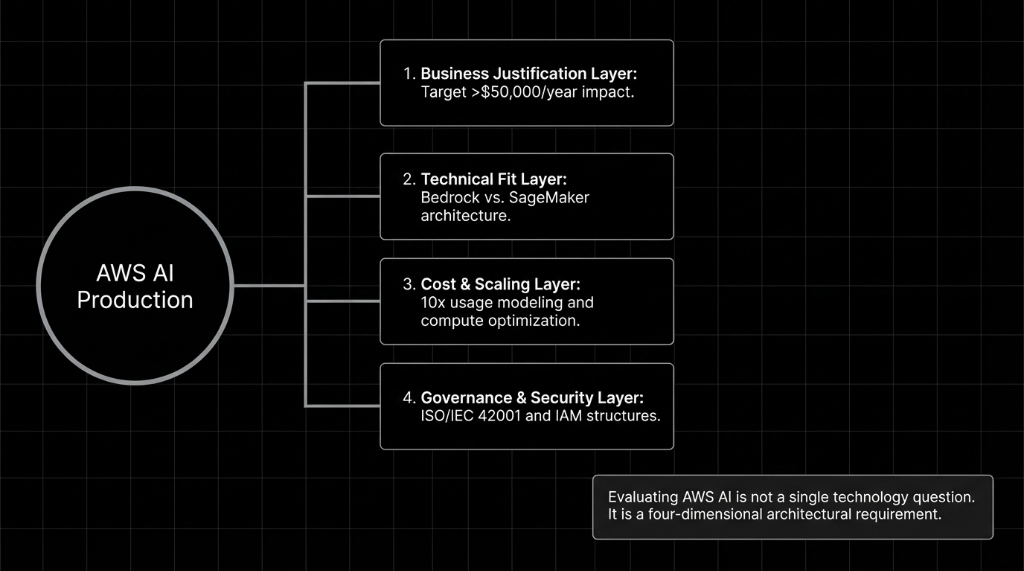

The Braincuber AWS AI Evaluation Checklist

Before you approve a single dollar toward AWS AI development, your team needs answers to these 11 questions. We use this exact framework when onboarding enterprise clients into AI programs.

Business Justification Layer

What is the fully-loaded cost of NOT automating this process? (Not a vague "efficiency" gain, but an actual $X/month figure.)

Which AI use cases map directly to revenue gain or cost reduction above $50,000/year? If you can't name one, you are not ready to build AI.

Does the CTO or VP of Engineering own this project end-to-end? Orphaned AI projects die in committee. We see it every quarter.
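
One way to put a dollar figure on the first two questions is sketched below. It is a minimal illustration, not our client tooling: the `ProcessBaseline` fields, the helper names, and every number in the example are hypothetical placeholders. Only the $50,000/year bar comes from the checklist itself.

```python
from dataclasses import dataclass

# All figures below are hypothetical placeholders, not client data.
MIN_ANNUAL_VALUE = 50_000  # the $50,000/year bar from the checklist


@dataclass
class ProcessBaseline:
    monthly_volume: int        # items handled by people each month
    minutes_per_item: float    # average handling time per item
    loaded_hourly_rate: float  # salary + benefits + overhead, in USD


def monthly_manual_cost(b: ProcessBaseline) -> float:
    """Fully-loaded monthly cost of NOT automating the process."""
    hours = b.monthly_volume * b.minutes_per_item / 60
    return hours * b.loaded_hourly_rate


def annual_savings(b: ProcessBaseline, automation_rate: float) -> float:
    """Yearly cost removed if `automation_rate` (0..1) of items are automated."""
    return monthly_manual_cost(b) * 12 * automation_rate


def clears_bar(b: ProcessBaseline, automation_rate: float) -> bool:
    """True when the projected savings meet the minimum annual value."""
    return annual_savings(b, automation_rate) >= MIN_ANNUAL_VALUE


support = ProcessBaseline(monthly_volume=6_000, minutes_per_item=10,
                          loaded_hourly_rate=40.0)
for rate in (0.70, 0.23):
    print(f"{rate:.0%} automated: ${annual_savings(support, rate):,.0f}/year, "
          f"clears bar: {clears_bar(support, rate)}")
```

Running the same baseline at a projected rate and at a pessimistic rate shows how much of the business case depends on the automation percentage actually being hit.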

Technical Fit Layer

Are you using Bedrock or SageMaker? Bedrock is best for pre-built models (Claude, Mistral) when you need to be in production in under 90 days. SageMaker is for teams that need MLOps control and custom AI models. Using SageMaker when you should use Bedrock adds 8–14 weeks of unnecessary development time and $60,000–$110,000 in extra costs.

Is your data foundation ready? 52% of organizations rate their data readiness as inadequate. Bad data corrupts every model built on it.

What is the MLOps plan? If you neglect AI testing after launch, your AI can give wrong answers for 3 months before anyone notices.
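
The post-launch testing question can be made concrete with a very small regression check. The sketch below assumes you grade a sample of production answers each week as correct or incorrect. The function names, the 5-point tolerance, and the sample data are all illustrative assumptions, not a prescribed MLOps stack.

```python
from statistics import mean


def accuracy(outcomes):
    """Share of graded answers marked correct (True)."""
    return mean(1.0 if ok else 0.0 for ok in outcomes)


def drift_alert(baseline_acc, recent_acc, max_drop=0.05):
    """True when accuracy has fallen more than `max_drop` below the launch baseline."""
    return (baseline_acc - recent_acc) > max_drop


# Hypothetical graded samples: True = a reviewer judged the answer correct.
launch_sample = [True] * 9 + [False] * 1   # graded at go-live
this_week = [True] * 8 + [False] * 2       # graded this week

baseline = accuracy(launch_sample)
current = accuracy(this_week)
if drift_alert(baseline, current):
    print(f"Accuracy fell from {baseline:.0%} to {current:.0%}; "
          "investigate before it compounds.")
```

A check this simple only helps if the baseline is recorded at launch and the weekly sample is actually graded, which is why the plan needs a named owner.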

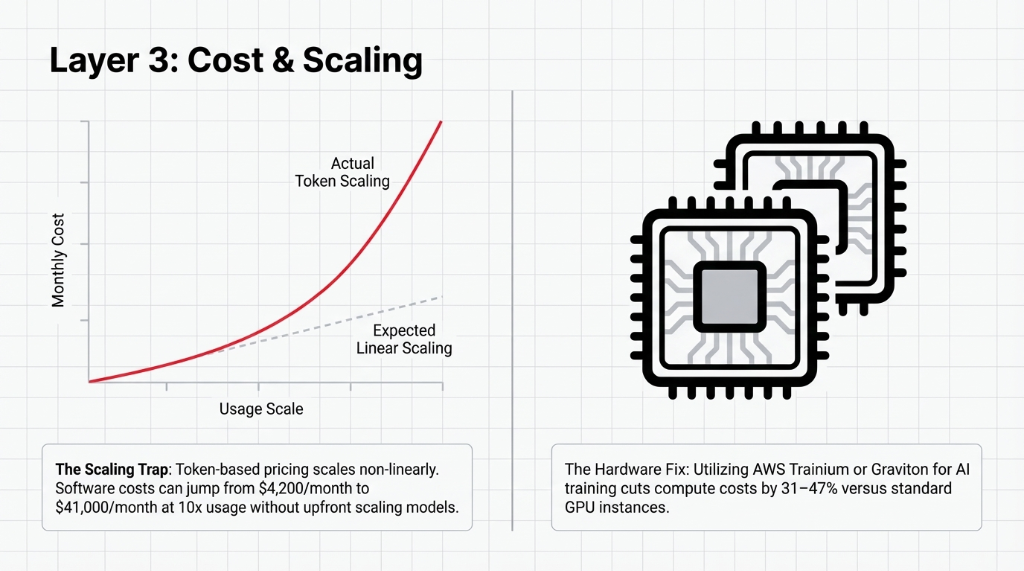

Cost & Scaling Layer

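What does your AWS AI bill look like at 10x usage? Per-token prices are flat, but total spend grows with every request, and longer prompts or retrieved context can push it up faster than request volume. We've seen costs jump from $4,200/mo to $41,000/mo because nobody modelled the growth in advance.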

Are you using AWS Graviton or Trainium chips for AI training? Trainium cuts compute costs by 31–47% versus standard GPU instances.
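
The 10x question is easy to model before the bill arrives. The sketch below assumes flat per-1,000-token pricing for input and output. The prices, request counts, and token counts are placeholders chosen for illustration, not AWS list prices, so substitute the current rates for your model.

```python
def monthly_token_cost(requests, input_tokens, output_tokens,
                       price_in_per_1k, price_out_per_1k):
    """Estimated monthly spend for a token-priced model.

    `input_tokens` and `output_tokens` are averages per request.
    """
    per_request = ((input_tokens / 1000) * price_in_per_1k
                   + (output_tokens / 1000) * price_out_per_1k)
    return requests * per_request


# Placeholder prices in USD per 1,000 tokens (NOT real AWS pricing).
PRICE_IN, PRICE_OUT = 0.003, 0.015

today = monthly_token_cost(100_000, 2_000, 500, PRICE_IN, PRICE_OUT)
ten_x_requests = monthly_token_cost(1_000_000, 2_000, 500, PRICE_IN, PRICE_OUT)
# Same 10x traffic, but prompts have tripled (e.g. more retrieved context).
ten_x_longer_prompts = monthly_token_cost(1_000_000, 6_000, 500, PRICE_IN, PRICE_OUT)

for label, cost in [("today", today),
                    ("10x requests", ten_x_requests),
                    ("10x requests, 3x prompt size", ten_x_longer_prompts)]:
    print(f"{label}: ${cost:,.0f}/mo ({cost / today:.1f}x)")
```

Modelling request volume and prompt size as separate inputs makes it visible when the bill grows faster than traffic does.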

Governance & Security Layer

Does your AI governance framework align with ISO/IEC 42001? If your internal policy is a 2-page Word doc, you have a risk problem.

Who is the named owner for AI security and compliance? Cross-functional collaboration must exist. "Legal will review it eventually" guarantees an incident.

Bedrock vs. SageMaker: Where US Teams Pick Wrong

We are going to be blunt: most mid-size US enterprises should start with Amazon Bedrock, full stop. The AI business case for custom model training only makes sense when you have proprietary data with 500,000+ unique training records and a dedicated MLOps engineer.

| Criteria | Amazon Bedrock | Amazon SageMaker |
|---|---|---|
| Time to first production deploy | 4–8 weeks | 14–24 weeks |
| Team requirement | 1–2 ML engineers | 3–5 MLOps + data engineers |
| Typical monthly cost (mid-scale) | $3,800–$18,000 | $12,000–$65,000 |
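
The rule of thumb in this section can be written down as a few lines of code so it gets applied the same way in every evaluation. This is only a sketch of the heuristic as stated here: the function name and the `needs_custom_model` flag are our illustrative assumptions, and a real decision will weigh more factors than these three.

```python
MIN_TRAINING_RECORDS = 500_000  # threshold quoted in this section


def recommend_service(unique_training_records: int,
                      has_dedicated_mlops_engineer: bool,
                      needs_custom_model: bool) -> str:
    """Default to Bedrock; suggest SageMaker only when every condition holds."""
    if (needs_custom_model
            and unique_training_records >= MIN_TRAINING_RECORDS
            and has_dedicated_mlops_engineer):
        return "Amazon SageMaker"
    return "Amazon Bedrock"


# Hypothetical teams, for illustration only.
print(recommend_service(40_000, False, False))
print(recommend_service(750_000, True, True))
print(recommend_service(750_000, False, True))
```

Defaulting to Bedrock and requiring every condition for SageMaker mirrors the "start with Bedrock" stance above.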

The Governance Layer Nobody Actually Tests

Here is something the AWS sales team will not lead with: your AI system is only as trustworthy as your IAM policies. We have audited enterprises where any developer with S3 read access could invoke production Bedrock models. You must configure Bedrock Guardrails, strictly define who holds which AI roles and permissions, and run quarterly AI testing reviews.
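
A first pass at this kind of audit can be scripted. The sketch below scans an IAM policy document (already loaded as a Python dict) for `Allow` statements that grant `bedrock:InvokeModel` on every resource. It is deliberately simplified: it ignores `NotAction`, `Condition` blocks, and explicit denies, and the helper names, example policies, and the `EXAMPLE_MODEL_ID` placeholder are our own illustrative assumptions.

```python
from fnmatch import fnmatchcase

# Bedrock invoke actions, lower-cased because IAM action names are case-insensitive.
INVOKE_ACTIONS = ("bedrock:invokemodel", "bedrock:invokemodelwithresponsestream")


def _as_list(value):
    """IAM allows a single string or a list for Action/Resource."""
    return [value] if isinstance(value, str) else list(value)


def overly_broad_invoke_statements(policy: dict) -> list:
    """Return Allow statements that permit model invocation on Resource '*'."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    findings = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        patterns = [a.lower() for a in _as_list(stmt.get("Action", []))]
        resources = _as_list(stmt.get("Resource", []))
        grants_invoke = any(fnmatchcase(action, pattern)
                            for pattern in patterns
                            for action in INVOKE_ACTIONS)
        if grants_invoke and "*" in resources:
            findings.append(stmt)
    return findings


# Hypothetical policies for illustration.
broad_policy = {"Version": "2012-10-17", "Statement": [
    {"Effect": "Allow", "Action": ["s3:GetObject", "bedrock:*"], "Resource": "*"},
]}
scoped_policy = {"Version": "2012-10-17", "Statement": [
    {"Effect": "Allow", "Action": "bedrock:InvokeModel",
     "Resource": "arn:aws:bedrock:us-east-1::foundation-model/EXAMPLE_MODEL_ID"},
]}

print(len(overly_broad_invoke_statements(broad_policy)), "broad statement(s) found")
print(len(overly_broad_invoke_statements(scoped_policy)), "broad statement(s) found")
```

A script like this is a starting point for the quarterly review, not a substitute for it. Use IAM Access Analyzer or a full policy simulation for anything a regulator will read.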

5 FAQs CTOs Ask Before Approving AWS AI Budgets

How long does AWS AI implementation actually take for a US enterprise?

For a Bedrock-based deployment with RAG architecture, expect 8–12 weeks from kickoff to production. SageMaker with custom fine-tuning adds 6–14 weeks.

What ROI should we realistically expect from AWS AI in the first 90 days?

Clients with well-scoped AI use cases see $1.40–$2.30 returned per dollar spent in the first 90 days. That number jumps to $3.80 by month 9. Poorly scoped projects return $0.12 on the dollar in year one. Scope is everything.

Is Amazon Bedrock secure enough for regulated industries in the US?

Yes, but only when you configure it correctly. Out of the box, Bedrock is not enterprise-hardened. You need an IAM policy architecture and a Guardrails configuration specific to your compliance requirements.

How is Braincuber different from hiring an in-house AWS AI team?

Building an in-house AI development team costs $480,000–$720,000 per year in fully-loaded US salaries. Braincuber delivers the same capability, plus pre-built tooling, at a fraction of that cost.

What are the biggest AI challenges enterprises face after go-live?

Model drift, rising token costs, and a lack of AI governance ownership. We address these by establishing an MLOps monitoring baseline on day one of production, not as an afterthought.

Stop Letting "Evaluation Mode" Drain Your Budget

AWS AI isn't confusing. Bad evaluation frameworks are. Book your free 15-minute AWS AI Architecture Audit. We will tell you exactly which AWS services fit your use case and where your current AI strategy has gaps.