Here is the number nobody in the business AI space wants to say out loud: 95% of generative AI pilots in US companies are failing to deliver measurable impact. That is not a software bug. That is an organizational epidemic.

We have worked across dozens of AI implementations — from agentic AI deployments for mid-market manufacturers to building custom AI chatbot systems for US-based D2C brands. The failures we see are never random. They follow the exact same 7 patterns, every single time.

The $30 Billion Wake-Up Call Nobody Heeded

US enterprises poured $30–40 billion into artificial intelligence pilots between 2023 and 2025. S&P Global found that 42% of companies scrapped most of their AI technology initiatives in 2025. The average organization killed 46% of its AI proof-of-concepts before a single one reached production.

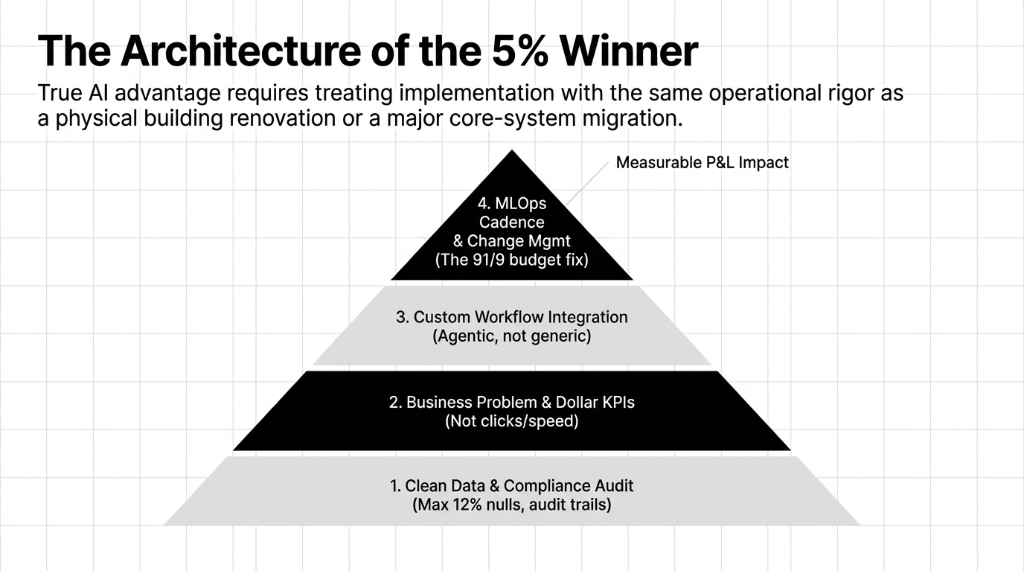

That is not a technology problem. That is a strategy problem. The companies winning with AI automation — Walmart saving $75 million, JPMorgan automating 360,000 staff hours — didn't use smarter models. They followed smarter implementation logic.

Lesson 1: Deploying an AI Platform Before Cleaning Your Data

Volkswagen's Cariad project burned $7.5 billion in operating losses over three years. The root cause wasn't bad artificial intelligence technology — it was inheriting 200+ suppliers' worth of legacy data with zero standardization.

The Data Execution Trap

A US retail company spends $180,000 deploying an AI agent for inventory forecasting, only to discover their product SKU database has 34% duplicate entries. The tool runs perfectly on garbage data.

The fix: Before you open a conversation with any artificial intelligence company, run a data audit. Check for duplicate records, fields where more than 12% of values are null, and date format inconsistencies. These three things kill 61% of early-stage AI analytics implementations.
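As a rough illustration, the three checks above could be sketched in a few lines of Python. This is a minimal sketch, not a production audit tool: the `audit` function, the field names (`sku`, `name`, `updated`), the sample records, and the list of date formats are all hypothetical, and only the 12% null threshold comes from the text.

```python
from collections import Counter
from datetime import datetime

NULL_THRESHOLD = 0.12  # the 12% null-field threshold mentioned above

# Hypothetical list of date formats to recognize; adjust for your own data.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y")


def detect_date_format(value):
    """Return the first format in DATE_FORMATS that parses value, else None."""
    for fmt in DATE_FORMATS:
        try:
            datetime.strptime(value, fmt)
            return fmt
        except ValueError:
            continue
    return None


def audit(rows, key_field, date_field):
    """Run the three checks on a list of dict records."""
    total = len(rows)
    if total == 0:
        return {"duplicate_rate": 0.0, "null_heavy_fields": {},
                "date_formats": {}, "dates_consistent": True}

    # 1. Duplicates: records whose key repeats an earlier record's key.
    keys = [row.get(key_field) for row in rows if row.get(key_field)]
    duplicates = len(keys) - len(set(keys))

    # 2. Null rate per field; keep only fields above the threshold.
    fields = sorted({name for row in rows for name in row})
    null_heavy = {}
    for name in fields:
        nulls = sum(1 for row in rows if row.get(name) in (None, ""))
        rate = nulls / total
        if rate > NULL_THRESHOLD:
            null_heavy[name] = rate

    # 3. Date formats: more than one format in use signals inconsistency.
    formats = Counter(
        detect_date_format(row[date_field]) or "unrecognized"
        for row in rows
        if row.get(date_field)
    )

    return {
        "duplicate_rate": duplicates / total,
        "null_heavy_fields": null_heavy,
        "date_formats": dict(formats),
        "dates_consistent": len(formats) <= 1,
    }


# Made-up sample records, for illustration only.
sample = [
    {"sku": "A1", "name": "Widget", "updated": "2024-01-05"},
    {"sku": "A1", "name": "Widget", "updated": "01/05/2024"},
    {"sku": "B2", "name": "", "updated": "2024-02-10"},
    {"sku": "C3", "name": "Gadget", "updated": "10.02.2024"},
]
report = audit(sample, key_field="sku", date_field="updated")
print(report)
```

On real data you would load the records from your database or a CSV export and treat the thresholds as starting points to tune, not fixed rules.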

Lesson 2: Buying a Generic AI Assistant Instead of Building for Your Workflow

A large California law firm bought a paralegal bot marketed as a full artificial intelligence assistant for $340,000 upfront. Result: a paperweight. The firm hadn't connected its internal research systems, so the AI application had nothing to work with.

Generic tools like ChatGPT fail at the enterprise level because they don't learn your workflows. A general-purpose online AI chatbot will always underperform a custom-built artificial intelligence chatbot trained on your operational data.

Lesson 3: Optimizing for the Wrong KPI

Taco Bell deployed voice AI across 500+ drive-throughs. Their KPI was "faster service." What actually happened? The AI bot couldn't handle accents and background noise. Staff intervention rates went up, not down.

We see AI fail this way in B2B settings too. A $2M ARR SaaS company deployed an agentic AI for lead scoring optimized purely for click-through rates. The model flagged tire-kickers as top prospects. Sales team productivity dropped 22% in 90 days. Before choosing AI tools, define the outcome in dollars.

Lesson 4: Skipping the Change Management Layer

70% of AI projects fail to move from pilot to production. The primary reason isn't model accuracy or AI data center costs — it is employee adoption. Most companies deploying AI technology spend 91% of their budget on the platform and 9% on training the humans.

The Adoption Fallback

A manufacturing firm deployed a clean generative AI document system. Six months later, the operations team was still typing invoices into Excel manually because nobody ran a training session. That cost $14,200 per month in unused licensing fees.

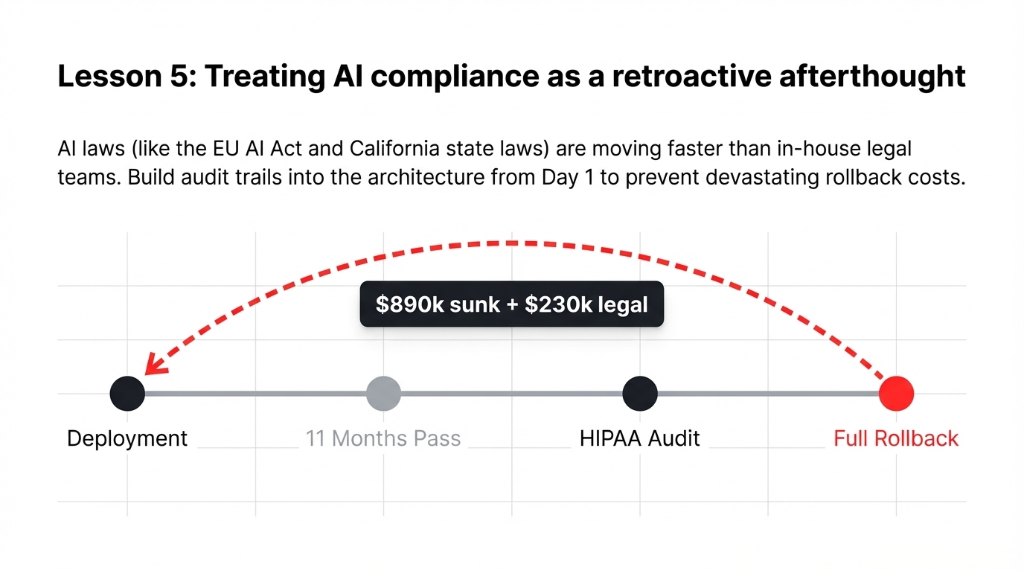

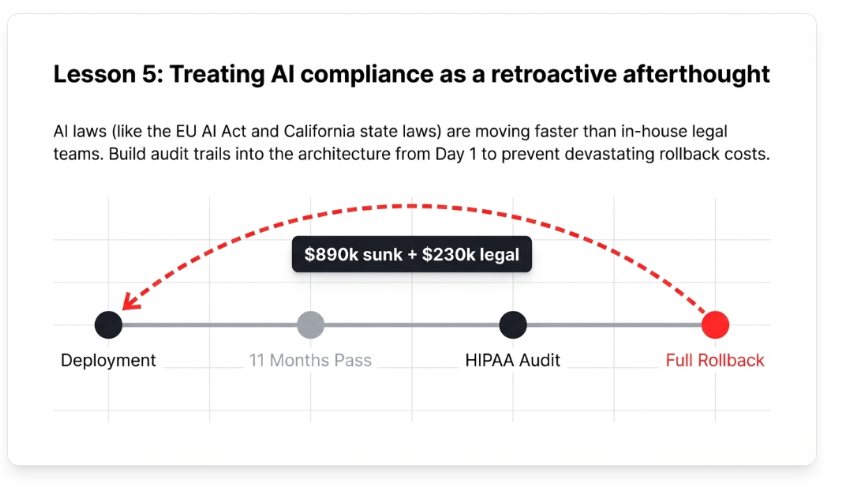

Lesson 5: Treating AI Laws and Compliance as an Afterthought

California AI regulations are accelerating fast, and EU AI laws are reshaping how artificial intelligence companies build products. US companies operating in finance and health care are being blindsided by compliance gaps mid-deployment.

One Ohio healthcare network deployed an AI application for triage. Eleven months in, the legal team discovered the model's outputs couldn't satisfy HIPAA audit trail requirements under new AI legal guidance. The fix required a full rollback that cost $890,000 in sunk development plus $230,000 in legal fees.

Lesson 6: Chasing AI Trends Instead of Business Problems

Half of all AI investment decisions in 2024 were driven by competitive fear, not identified business need. "Competitors are doing it" is not a use case. Companies spend $200,000 building an AI writing engine only to realize their core problem was a broken sales qualification process.

Lumen Technologies correctly identified that reps spent 4 hours per call researching customer backgrounds. They quantified it as a $50 million annual opportunity. Only then did they build the AI agent that compressed that research to 15 minutes.

Lesson 7: Confusing a Demo With a Deployment

This is the most expensive mistake in the business of AI. A beautiful generative AI demo runs on clean data. Your production environment has 11 legacy systems, 3 different date formats, and a CRM nobody has touched since 2019.

A model that performs at 94% accuracy in testing can drop to 71% accuracy within 90 days. For a voice AI system handling 3,000 calls per day, that 23-point accuracy gap translates to roughly 690 additional broken customer interactions every single day.
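The arithmetic behind that figure is simple enough to check yourself. Here is a small sketch; the function name `extra_failures_per_day` is ours, and it assumes every point of lost accuracy maps one-to-one onto a broken interaction, which is a simplification.

```python
def extra_failures_per_day(calls_per_day, test_accuracy_pct, production_accuracy_pct):
    """Additional failed interactions per day caused by an accuracy drop.

    Accuracies are whole percentages (94, not 0.94). Assumes each
    percentage point of lost accuracy fails that share of daily calls.
    """
    gap_points = test_accuracy_pct - production_accuracy_pct
    return round(calls_per_day * gap_points / 100)


print(extra_failures_per_day(3000, 94, 71))  # prints 690
```

Plug in your own call volume and accuracy numbers to see what a post-launch drop would cost you per day.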

The Pattern Is Clear — So Is the Fix

Every one of these failures boils down to the same root problem: companies rush to apply AI before defining what success looks like in dollars. Bad implementation costs real money: $7.5 billion for Volkswagen, $890,000 for an Ohio healthcare network, $14,200/month for a manufacturer.

Frequently Asked Questions

What is the most common reason AI implementation fails in US companies?

The #1 cause is not a bad model — it's poor workflow integration. Generic AI tools fail at the enterprise level because they don't adapt to specific business processes.

How much does a failed AI implementation actually cost?

It varies widely, but enterprise-scale failures run from $200,000 for a scrapped pilot to $7.5 billion (Volkswagen's Cariad project). The average US company loses between $180,000 and $420,000 in sunk development.

Is generative AI reliable enough for business use right now?

Yes — but only in specific, well-defined workflows with clean data. Generative AI deployed in back-office automation delivers the highest ROI.

What should I check before starting an AI project?

Run three checks first: a data quality audit (flag duplicates and nulls), a compliance review against current laws, and an ROI target defined as an exact dollar figure.

How is an AI agent different from a regular AI chatbot?

An AI chatbot answers questions. An agentic AI takes actions — pulling data, updating ERPs autonomously. Agentic AI is what drives cost reduction, not simple interfaces.

You are treating AI adoption like a software update.

Stop pouring six figures into AI pilots that will never reach production. If you want to know exactly where your implementation plan has gaps before spending another dollar, book a review with us.