The Multi-Vendor Tax Nobody Accounts For

Here is what a standard e-commerce setup on AWS looks like: EC2 instances, an RDS database, maybe a CloudFront CDN layer slapped on top, and a load balancer that someone set up in 2022 and never touched again. That works fine when you have one seller and one product catalog.

The moment you add a second vendor (and a third, and a fifty-third), your architecture starts carrying what we call the multi-vendor tax: the compounding cost of isolated vendor data, separate commission logic, concurrent payout processing, and real-time inventory sync across sellers who share the same infrastructure but must never share data.

Real Audit: UK Marketplace, 700 Vendors

Problem: Single-tenant RDS instance. Read queries hitting the DB 14,000 times per minute during peak hours. DB bill: $6,800/month.

Fix: Multi-tenant PostgreSQL on Amazon Aurora with read replicas.

Result: DB cost dropped to $2,100/month in 6 weeks. Savings: $4,700/month ($56,400/year).
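Aurora gives a cluster a writer (cluster) endpoint and a reader endpoint that spreads connections across the read replicas, so offloading read-heavy traffic like the 14,000 queries per minute above mostly comes down to sending each statement to the right endpoint. Here is a minimal application-side sketch. It assumes hypothetical endpoint hostnames and a deliberately simple rule: only plain SELECTs go to replicas.

```python
import re

# Hypothetical Aurora endpoints; substitute your cluster's real writer and reader endpoints.
WRITER_ENDPOINT = "marketplace.cluster-EXAMPLE.eu-west-2.rds.amazonaws.com"
READER_ENDPOINT = "marketplace.cluster-ro-EXAMPLE.eu-west-2.rds.amazonaws.com"

# Plain SELECTs are safe to send to replicas; everything else goes to the writer.
_SELECT = re.compile(r"^\s*SELECT\b", re.IGNORECASE)
# SELECT ... FOR UPDATE / FOR SHARE takes row locks, which replicas cannot serve.
_LOCKING = re.compile(r"\bFOR\s+(UPDATE|SHARE)\b", re.IGNORECASE)


def choose_endpoint(sql: str, needs_fresh_read: bool = False) -> str:
    """Pick the Aurora endpoint for one statement.

    needs_fresh_read=True forces the writer for read-your-own-writes cases
    (e.g. showing a vendor the listing they just edited), because replicas
    can lag slightly behind the writer.
    """
    if needs_fresh_read:
        return WRITER_ENDPOINT
    if _SELECT.match(sql) and not _LOCKING.search(sql):
        return READER_ENDPOINT
    return WRITER_ENDPOINT


if __name__ == "__main__":
    print(choose_endpoint("SELECT * FROM products WHERE vendor_id = %s"))
    print(choose_endpoint("UPDATE inventory SET stock = stock - 1 WHERE sku = %s"))
```

The `needs_fresh_read` flag is the design choice that matters most: without it, a vendor who saves a change and immediately reloads can see stale data served from a replica.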

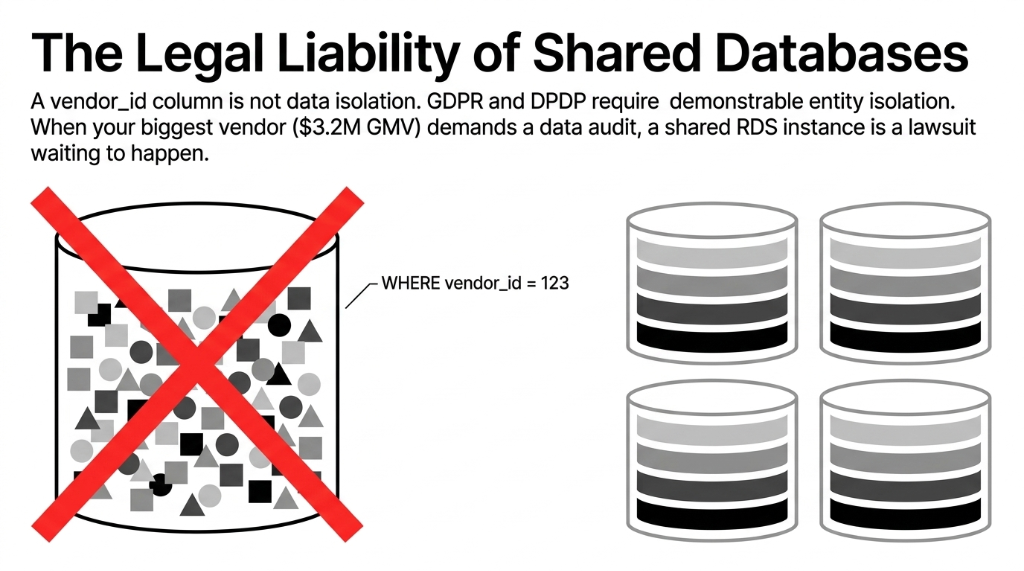

Why Your "Shared Database" Architecture Is a Legal Liability

The biggest mistake we see — and we see it constantly — is marketplace founders running all vendor data in a single shared RDS or DynamoDB table with a vendor_id column as the only isolation layer. That is not architecture. That is a lawsuit waiting to happen.

GDPR, India's DPDP Act, and emerging data residency laws in the UAE and Singapore all expect you to be able to demonstrate how each vendor entity's data is isolated and protected. A WHERE vendor_id = 123 clause in a query does not satisfy a data protection regulator. It does not satisfy a vendor's enterprise legal team either. When your biggest vendor (the one doing $3.2M in GMV through your platform) asks for a data audit, you will not be able to hand them one.

Cell-Based Isolation: The Correct Approach

Each vendor group gets a dedicated VPC subnet with a /28 CIDR allocation, tracked atomically in DynamoDB. You scale across cells, not within a single account. Each cell has its own blast radius — when one vendor's spike hits 5,000 queries per second, it does not drag down every other seller on your platform.

Result: Southeast Asian fashion marketplace, 1,400 vendors. P99 API response dropped from 1,840ms to 312ms in 8 weeks.
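The subnet-per-cell allocation described above can be sketched with Python's standard `ipaddress` module. A /28 is the smallest IPv4 subnet AWS allows in a VPC, and AWS reserves five addresses in every subnet, so each cell gets 11 usable IPs. The class below is a hypothetical in-memory stand-in for the DynamoDB allocation table. The VPC CIDR and cell names are assumptions.

```python
import ipaddress
import threading

AWS_RESERVED_PER_SUBNET = 5  # AWS reserves the first four and the last address of every subnet


class CellSubnetAllocator:
    """Hands out one /28 per cell from a VPC CIDR (hypothetical in-memory sketch).

    In DynamoDB the equivalent write would be a conditional PutItem keyed on the
    CIDR block, with ConditionExpression="attribute_not_exists(cidr)", so two
    provisioning workers can never claim the same /28.
    """

    def __init__(self, vpc_cidr: str, prefix: int = 28):
        # subnets() is a generator, so blocks are produced lazily in address order.
        self._candidates = ipaddress.ip_network(vpc_cidr).subnets(new_prefix=prefix)
        self._assigned: dict[str, ipaddress.IPv4Network] = {}
        self._lock = threading.Lock()

    def allocate(self, cell_id: str) -> ipaddress.IPv4Network:
        with self._lock:
            # Idempotent: re-running provisioning for a cell returns its existing subnet.
            if cell_id in self._assigned:
                return self._assigned[cell_id]
            try:
                subnet = next(self._candidates)
            except StopIteration:
                raise RuntimeError(f"VPC CIDR exhausted; cannot place {cell_id}") from None
            self._assigned[cell_id] = subnet
            return subnet


def usable_hosts(subnet: ipaddress.IPv4Network) -> int:
    return subnet.num_addresses - AWS_RESERVED_PER_SUBNET


if __name__ == "__main__":
    alloc = CellSubnetAllocator("10.0.0.0/16")
    for cell in ("cell-a", "cell-b"):
        net = alloc.allocate(cell)
        print(cell, net, usable_hosts(net), "usable IPs")
```

Making `allocate` idempotent means a retried provisioning job gets the same block back instead of leaking a second /28.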

The AWS Stack That Actually Handles Multi-Vendor at Scale

Stop using generic "serverless-first" advice from AWS blog posts written for single-tenant SaaS products. A multi-vendor marketplace has specific throughput patterns that look nothing like a standard SaaS app. Here is the architecture we deploy at Braincuber for marketplace platforms doing $500K+ GMV/month:

Compute Layer

Production Marketplace Compute Architecture

Amazon ECS with Fargate

Vendor-specific microservices (catalog, inventory, payouts). Not Lambda for everything: Lambda cold starts kill the vendor onboarding API at scale. Reserve Lambda for event-driven workflows like fraud detection and payout webhooks.

Auto Scaling Groups

Predictive scaling policies tuned to your platform's historical traffic curves — not just reactive CPU-based scaling.
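The idea behind predictive scaling is to provision for the load you expect from historical curves rather than waiting for CPU to climb. AWS's predictive scaling uses its own forecasting model. The sketch below swaps in a much simpler seasonal-naive forecast (the average load seen at the same hour on previous days) purely to illustrate the sizing logic. The function names, the 20% headroom, and the task limits are assumptions.

```python
import math
from typing import Optional


def forecast_rps(history: dict[int, list[float]], hour: int) -> Optional[float]:
    """Seasonal-naive forecast: average requests/sec seen at this hour on previous days."""
    samples = history.get(hour)
    if not samples:
        return None
    return sum(samples) / len(samples)


def desired_tasks(
    history: dict[int, list[float]],
    hour: int,
    observed_rps: float,
    rps_per_task: int,
    headroom_pct: int = 20,
    min_tasks: int = 2,
    max_tasks: int = 50,
) -> int:
    """Size the service for whichever is higher: the forecast or the current load."""
    forecast = forecast_rps(history, hour)
    # Fall back to purely reactive sizing when there is no history for this hour.
    load = observed_rps if forecast is None else max(forecast, observed_rps)
    # Integer percentages keep the arithmetic exact for whole-number loads.
    needed = math.ceil(load * (100 + headroom_pct) / (100 * rps_per_task))
    return max(min_tasks, min(max_tasks, needed))


if __name__ == "__main__":
    history = {20: [900, 1100, 1000]}  # hypothetical 8 pm traffic on three previous days
    print(desired_tasks(history, hour=20, observed_rps=400, rps_per_task=100))
```

Taking the maximum of forecast and observed load keeps the reactive path as a safety net when the forecast underestimates a spike.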

Data Layer

Amazon Aurora Serverless v2

Tenant-scoped connection pools via RDS Proxy. Eliminates the connection exhaustion problem that destroys most marketplace DBs past 300 concurrent vendor sessions.
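RDS Proxy pools and reuses database connections, but a noisy vendor can still claim a disproportionate share of them. One way to approximate tenant-scoped pools, assumed here rather than taken from the setup above, is an application-side limiter with a per-vendor cap under a global cap. `TenantConnectionLimiter` is a hypothetical class, not an RDS Proxy feature.

```python
import threading
from collections import defaultdict


class TenantConnectionLimiter:
    """Caps DB connections per vendor and in total (hypothetical application-side sketch).

    Sits in front of the shared RDS Proxy so one vendor's burst cannot take every slot.
    """

    def __init__(self, global_max: int, per_tenant_max: int):
        self._global = threading.BoundedSemaphore(global_max)
        self._per_tenant = defaultdict(lambda: threading.BoundedSemaphore(per_tenant_max))
        self._lock = threading.Lock()

    def _tenant_sem(self, tenant_id: str) -> threading.BoundedSemaphore:
        # Guard lazy creation so two threads never build two semaphores for one vendor.
        with self._lock:
            return self._per_tenant[tenant_id]

    def try_acquire(self, tenant_id: str) -> bool:
        tenant_sem = self._tenant_sem(tenant_id)
        if not tenant_sem.acquire(blocking=False):
            return False  # this vendor is at its own cap
        if not self._global.acquire(blocking=False):
            tenant_sem.release()  # platform is full: hand the vendor slot back
            return False
        return True

    def release(self, tenant_id: str) -> None:
        self._global.release()
        self._tenant_sem(tenant_id).release()


if __name__ == "__main__":
    limiter = TenantConnectionLimiter(global_max=300, per_tenant_max=20)
    if limiter.try_acquire("vendor-123"):
        try:
            pass  # open a connection through the proxy and run the vendor's query here
        finally:
            limiter.release("vendor-123")
```

Acquiring the vendor slot before the global slot means a vendor that is already at its cap never touches the shared budget at all.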

DynamoDB for Real-Time Operations

Cart and inventory state. One rebuild: MySQL cart at 31% abandonment, 420ms latency. After DynamoDB: 18.4% abandonment, 11ms latency.
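Inventory in DynamoDB is usually decremented with a conditional update, so the database itself rejects an oversell instead of leaving application code to race against itself. Here is a sketch that assumes a hypothetical table keyed on `sku` with a numeric `stock` attribute. `build_reserve_request` produces keyword arguments for boto3's `Table.update_item`. `InMemoryInventory` mimics the same check-and-decrement locally so the logic can be tested without AWS.

```python
import threading


def build_reserve_request(sku: str, qty: int) -> dict:
    """Keyword arguments for a boto3 DynamoDB Table.update_item call.

    The ConditionExpression makes the decrement atomic: DynamoDB rejects the write
    with ConditionalCheckFailedException instead of letting stock go negative.
    """
    return {
        "Key": {"sku": sku},
        "UpdateExpression": "SET #stock = #stock - :qty",
        "ConditionExpression": "#stock >= :qty",
        "ExpressionAttributeNames": {"#stock": "stock"},
        "ExpressionAttributeValues": {":qty": qty},
        "ReturnValues": "UPDATED_NEW",
    }


class InMemoryInventory:
    """Local stand-in with the same check-and-decrement semantics, for tests."""

    def __init__(self, stock: dict[str, int]):
        self._stock = dict(stock)
        self._lock = threading.Lock()

    def reserve(self, sku: str, qty: int) -> bool:
        with self._lock:
            current = self._stock.get(sku, 0)  # a missing item fails the condition, as in DynamoDB
            if current < qty:
                return False  # the DynamoDB call would raise ConditionalCheckFailedException here
            self._stock[sku] = current - qty
            return True

    def available(self, sku: str) -> int:
        return self._stock.get(sku, 0)
```

Against AWS you would call `boto3.resource("dynamodb").Table("inventory").update_item(**build_reserve_request("SKU-123", 2))` (the table name is hypothetical) and treat a `ConditionalCheckFailedException` as "out of stock".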

API, Routing, and Protection

The Piece 90% of Marketplace Builders Skip

Amazon API Gateway with per-vendor usage plans and throttling. Without per-vendor API quotas, a single vendor running a bulk import job can consume 100% of your gateway capacity and take every other vendor's storefront offline.

AWS WAF at the CloudFront layer, with bot detection rules. One client was losing $4,700/month to scrapers harvesting vendor pricing data; WAF rules eliminated 94% of that traffic in 2 weeks.
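AWS documents API Gateway throttling as a token bucket: a steady-state rate plus a burst capacity, which usage plans apply per API key. Issuing each vendor its own key and plan means a bulk import drains only that vendor's bucket. The simulation below illustrates the mechanism locally. `VendorThrottle`, the vendor IDs, and the default plan are hypothetical.

```python
class TokenBucket:
    """Refills `rate` tokens per second, up to `burst` tokens; each request spends one."""

    def __init__(self, rate: float, burst: int):
        self.rate = rate
        self.burst = burst
        self.tokens = float(burst)  # start full so a new vendor can burst immediately
        self.last = None

    def allow(self, now: float) -> bool:
        if self.last is not None:
            elapsed = max(0.0, now - self.last)
            self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # API Gateway would answer 429 Too Many Requests here


class VendorThrottle:
    """One bucket per vendor (hypothetical), mirroring one usage plan per vendor API key."""

    def __init__(self, plans: dict[str, tuple[float, int]], default_plan=(10.0, 20)):
        self._plans = plans
        self._default = default_plan
        self._buckets: dict[str, TokenBucket] = {}

    def allow(self, vendor_id: str, now: float) -> bool:
        bucket = self._buckets.get(vendor_id)
        if bucket is None:
            rate, burst = self._plans.get(vendor_id, self._default)
            bucket = self._buckets[vendor_id] = TokenBucket(rate, burst)
        return bucket.allow(now)


if __name__ == "__main__":
    throttle = VendorThrottle({"bulk-importer": (5.0, 10), "boutique": (5.0, 10)})
    allowed = sum(throttle.allow("bulk-importer", now=0.0) for _ in range(15))
    print("bulk import allowed:", allowed, "| boutique still served:", throttle.allow("boutique", now=0.0))
```

The point of the per-vendor split is visible in the usage example: the importer is cut off after its burst, while the boutique's first request still succeeds.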

Payout and Commission Engine

Never Calculate Commissions Synchronously

Amazon SQS + Lambda for asynchronous commission calculation. We have seen checkout pipelines with embedded commission logic add 800ms to every single transaction, which is a direct conversion killer.

Amazon EventBridge for multi-vendor event orchestration: when vendor A's inventory hits zero, EventBridge triggers a cascade (notify buyer, update search index, fire re-stock webhook, log to vendor analytics), all without coupling your services.
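In the asynchronous commission calculation described above, checkout only enqueues a message and a worker computes commissions later. The local sketch below uses Python's `queue.Queue` in place of SQS, with hypothetical rates and order IDs. SQS standard queues deliver messages at least once, so the worker skips order IDs it has already processed.

```python
import queue
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def checkout(q: queue.Queue, order_id: str, vendor_id: str, amount: Decimal) -> str:
    """Checkout only enqueues; no commission math on the buyer's request path."""
    q.put({"order_id": order_id, "vendor_id": vendor_id, "amount": amount})
    return "confirmed"


def process_commissions(
    q: queue.Queue, rates: dict[str, Decimal], default_rate: Decimal, seen: set[str]
) -> list[dict]:
    """Drain the queue the way an SQS-triggered worker processes a batch."""
    results = []
    while True:
        try:
            msg = q.get_nowait()
        except queue.Empty:
            break
        if msg["order_id"] in seen:
            continue  # duplicate delivery: never charge commission twice
        seen.add(msg["order_id"])
        rate = rates.get(msg["vendor_id"], default_rate)
        commission = (msg["amount"] * rate).quantize(CENT, rounding=ROUND_HALF_UP)
        results.append({
            "order_id": msg["order_id"],
            "vendor_id": msg["vendor_id"],
            "commission": commission,
            "vendor_net": msg["amount"] - commission,
        })
    return results
```

In production the `seen` set would live in durable storage (a DynamoDB conditional write is a common choice), because a Lambda worker's memory cannot be relied on to persist between invocations.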

Search and Discovery

Amazon OpenSearch Service with per-vendor index aliases gives you vendor-level search customization (boosted products, sponsored listings, custom ranking) without building a search engine from scratch.

At 1.8 PB of product assets (high-res images, video demos, vendor brand kits), Amazon S3 Intelligent-Tiering cuts your storage costs by 23–41% compared to S3 Standard pricing.
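One common way to implement the per-vendor index aliases mentioned above is OpenSearch filtered aliases. Every vendor alias points at one shared index but adds a filter, so a query through `products-vendor-v123` only sees that vendor's documents. The sketch below builds the `_aliases` request body. It assumes a shared `products` index with a keyword-mapped `vendor_id` field; both names are assumptions.

```python
import json


def vendor_alias_actions(index: str, vendor_ids: list[str]) -> dict:
    """Body for OpenSearch's POST /_aliases: one filtered alias per vendor.

    Adding "routing" to each alias would also narrow the shards searched, but only
    if documents were indexed with the vendor ID as their routing value, so it is
    deliberately left out of this sketch.
    """
    return {
        "actions": [
            {
                "add": {
                    "index": index,
                    "alias": f"{index}-vendor-{vendor_id}",
                    # term query assumes vendor_id is mapped as a keyword field
                    "filter": {"term": {"vendor_id": vendor_id}},
                }
            }
            for vendor_id in vendor_ids
        ]
    }


if __name__ == "__main__":
    print(json.dumps(vendor_alias_actions("products", ["v123"]), indent=2))
```

The body is sent with `POST /_aliases`, for example through opensearch-py's `client.indices.update_aliases(body=...)`. Vendor-specific ranking and sponsored boosts can then live in the queries that target each vendor's alias.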

What AWS re:Invent 2025 Changed for Marketplace Operators

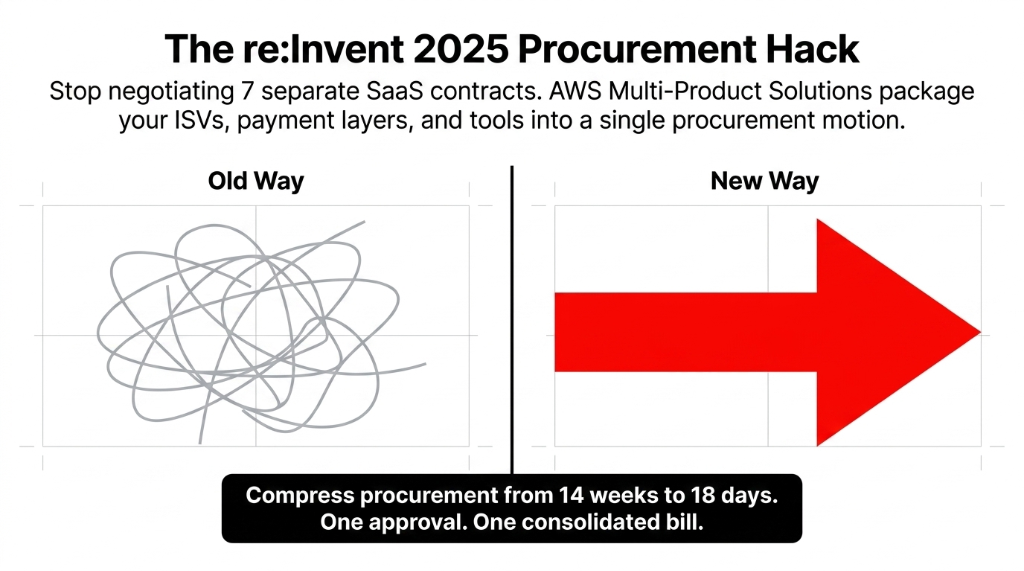

If you run a B2B marketplace or an industry-vertical platform, the November 2025 announcement from AWS changes your procurement strategy entirely. AWS Marketplace now supports multi-product solutions — pre-packaged combinations of ISV software, professional services, and AWS-native tools sold as a single offer through one lead partner.

You no longer need to negotiate 7 separate contracts to assemble your marketplace tech stack. A single procurement motion through AWS Marketplace can cover your vendor management platform, your payment orchestration layer, your fraud detection engine, and your analytics stack — with one approval, one billing consolidation, and individual renewal flexibility per component.

(We watched a Dubai-based B2B marketplace spend 11 weeks getting legal sign-off on three separate SaaS contracts. Multi-product solutions would have compressed that to 18 days.)

The Payout Problem That Quietly Destroys Vendor Trust

Multi-vendor payout reconciliation is the #1 reason vendors abandon marketplace platforms in their first 90 days. When a vendor sees $14,230 in expected earnings but receives $12,890 in their account with no explanation, they open a support ticket. Then they post about it on Twitter. Then 12 other vendors DM them asking if they had the same problem.

This is not a payment gateway problem. It is a data pipeline problem. Most marketplace platforms have payout logic spread across three systems: the order management database, the payment processor (Stripe, Razorpay, or Payoneer), and a finance spreadsheet that someone in accounts updates manually every Friday.

The Fix: AWS Step Functions Payout Workflow

One Step Functions state machine orchestrates the whole flow: order confirmed ▸ commission calculated ▸ refund deductions applied ▸ net amount dispatched ▸ reconciliation record written to Redshift. The entire chain runs automatically, generates an auditable trail per vendor per transaction, and publishes a real-time payout dashboard to each vendor's portal.
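The payout chain above maps naturally onto a state machine with one Task state per step. The sketch runs the same steps locally, writes an audit entry after each, and emits an Amazon States Language skeleton from the same step list. Step names, the Lambda ARN prefix, and the order fields are hypothetical. The payment-processor call and the Redshift write are placeholders.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def calculate_commission(ctx: dict) -> dict:
    ctx["commission"] = (ctx["gross"] * ctx["commission_rate"]).quantize(CENT, rounding=ROUND_HALF_UP)
    return ctx


def apply_refunds(ctx: dict) -> dict:
    ctx["refunds"] = sum(ctx["refund_items"], Decimal("0.00"))
    return ctx


def dispatch_net_amount(ctx: dict) -> dict:
    ctx["net"] = ctx["gross"] - ctx["commission"] - ctx["refunds"]
    ctx["dispatched"] = True  # placeholder: a real step would call Stripe, Razorpay, or Payoneer
    return ctx


def write_reconciliation(ctx: dict) -> dict:
    # Placeholder for the Redshift write: one record per vendor per order.
    keys = ("vendor_id", "order_id", "gross", "commission", "refunds", "net")
    ctx["reconciliation"] = {k: ctx[k] for k in keys}
    return ctx


PAYOUT_STEPS = [
    ("CalculateCommission", calculate_commission),
    ("ApplyRefunds", apply_refunds),
    ("DispatchNetAmount", dispatch_net_amount),
    ("WriteReconciliation", write_reconciliation),
]


def run_payout(order: dict, steps=PAYOUT_STEPS):
    """Run the steps for one confirmed order, recording an audit entry after each."""
    ctx, audit = dict(order), []
    for name, step in steps:
        ctx = step(ctx)
        audit.append({"step": name, "order_id": ctx["order_id"], "vendor_id": ctx["vendor_id"]})
    return ctx, audit


def to_state_machine(steps, lambda_arn_prefix: str) -> dict:
    """Amazon States Language skeleton: a linear chain of Task states."""
    names = [name for name, _ in steps]
    states = {}
    for i, name in enumerate(names):
        state = {"Type": "Task", "Resource": lambda_arn_prefix + name}
        if i + 1 < len(names):
            state["Next"] = names[i + 1]
        else:
            state["End"] = True
        states[name] = state
    return {"StartAt": names[0], "States": states}
```

Using `Decimal` with explicit rounding, rather than floats, keeps each deduction traceable to the cent, which is exactly what a vendor comparing expected and received earnings needs to see.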

Before: 23% of vendors submitting monthly payout disputes

After: Disputes dropped to 1.7% in month 2

The Timeline Truth (Stop Believing the 6-Week Promise)

Any AWS partner who tells you they can build a production-grade multi-vendor marketplace architecture in 6 weeks is either describing a prototype or lying to win the deal. Here is the actual timeline we follow at Braincuber:

| Phase | Timeline | Deliverables |
|---|---|---|
| Infrastructure Design | Weeks 1–2 | Audit, cell-based architecture design, VPC setup, IAM policy framework for vendor isolation |
| Core Microservices | Weeks 3–5 | Catalog, inventory, orders, payouts on ECS Fargate with CI/CD via AWS CodePipeline |
| Integration Layer | Weeks 6–8 | API Gateway vendor quotas, EventBridge orchestration, OpenSearch indexes, SQS commission engine |
| Hardening | Weeks 9–11 | Load testing at 10x projected peak, WAF tuning, Aurora connection pool optimization, cost modeling |
| Go-Live | Week 12 | Staged vendor onboarding with real traffic cutover |

Total: 12 weeks to a production-ready, scalable multi-vendor platform. Not 6. Not 4. 12. The difference between a 6-week prototype and a 12-week production system is $380,000 in potential GMV losses during your first high-traffic event.

The Cost Reality at Different Scale Points

| Vendor Count | Monthly AWS (Optimized) | Monthly AWS (Unoptimized) | GMV at Risk if Architecture Fails |
|---|---|---|---|
| 100–500 | ~$1,800–$3,200 | ~$4,500–$7,800 | $50K–$200K/month |
| 500–2,000 | ~$4,100–$8,700 | ~$12,000–$22,000 | $200K–$1M/month |
| 2,000–10,000 | ~$9,500–$19,000 | ~$34,000–$67,000 | $1M–$5M/month |

(Figures based on Braincuber's implementation data across marketplace clients in US, UK, UAE, and India, 2023–2025)

The delta between optimized and unoptimized is not just a cost issue; it is a survival issue. At 2,000 vendors generating $500K in combined GMV per month, a 9% margin yields $45,000/month, so a $45,000/month infrastructure bill consumes the entire margin before any other operating cost and you are running the platform at a loss. Braincuber's AWS cost optimization engagements have recovered between $4,100 and $31,000/month for marketplace clients through Reserved Instance planning, Compute Savings Plans, S3 Intelligent-Tiering, and RDS right-sizing alone.

Frequently Asked Questions

What core AWS services does a multi-vendor marketplace need?

At minimum: Amazon ECS (Fargate) for microservices, Amazon Aurora with RDS Proxy for tenant-scoped databases, SQS for async payout processing, EventBridge for event orchestration, API Gateway with per-vendor usage plans, S3 for assets, CloudFront for CDN, and WAF for bot protection. Lambda works for event-triggered workflows but not as the primary compute layer.

How much does AWS infrastructure cost for a 500-vendor marketplace?

An optimized AWS setup for 500 active vendors runs approximately $3,200–$4,800/month. Unoptimized setups for the same vendor count routinely cost $9,000–$14,000/month. The gap is driven by improper database sizing, missing Reserved Instance commitments, and over-provisioned EC2 instances.

How does AWS enforce data isolation between vendors?

AWS supports isolation at three levels: row-level (application-enforced), VPC subnet-level (network-enforced), and AWS account-level (infrastructure-enforced). For enterprise vendors or regulatory requirements, Braincuber deploys cell-based architecture where vendor groups share a cell but each cell maps to a separate AWS account with dedicated service limits and blast radius boundaries.
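A cell-based router needs two lookups: vendor to cell, and cell to AWS account. The sketch pins enterprise vendors to dedicated cells and places everyone else with a stable hash. All cell names, vendor IDs, and account IDs are placeholders. Hash-based placement is an assumption made for illustration, not necessarily how cells are assigned in the deployments described here.

```python
import hashlib

# Hypothetical cell -> AWS account mapping (placeholder account IDs).
CELL_ACCOUNTS = {
    "cell-1": "111111111111",
    "cell-2": "222222222222",
    "cell-3": "333333333333",
    "cell-enterprise-acme": "444444444444",
}
SHARED_CELLS = ["cell-1", "cell-2", "cell-3"]
# Enterprise or regulated vendors pinned to a dedicated cell (hypothetical).
PINNED = {"vendor-acme": "cell-enterprise-acme"}


def cell_for_vendor(vendor_id: str) -> str:
    if vendor_id in PINNED:
        return PINNED[vendor_id]
    # SHA-256 instead of Python's salted hash(), so placement survives process restarts.
    digest = hashlib.sha256(vendor_id.encode("utf-8")).digest()
    return SHARED_CELLS[int.from_bytes(digest[:8], "big") % len(SHARED_CELLS)]


def account_for_vendor(vendor_id: str) -> str:
    return CELL_ACCOUNTS[cell_for_vendor(vendor_id)]


if __name__ == "__main__":
    for vendor in ("vendor-acme", "vendor-123"):
        print(vendor, cell_for_vendor(vendor), account_for_vendor(vendor))
```

Modulo placement reshuffles vendors whenever the number of shared cells changes, so a production router would typically persist each vendor's assignment after first placement and look it up rather than recompute it.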

Can AWS handle a marketplace with 10,000+ concurrent vendors?

Yes — but not with a default single-account setup. At 10,000+ vendors, the architecture must use cell-based account isolation, DynamoDB for high-frequency operations, Aurora read replicas per vendor cluster, and OpenSearch with per-vendor index aliases. Braincuber has designed architectures handling 5,000 queries per second per segment during holiday peaks.

How long does it take to deploy a production AWS marketplace platform?

12 weeks for production-grade deployment covering architecture design, cell-based VPC setup, ECS microservices, CI/CD pipelines, load testing at 10x peak, WAF tuning, and staged vendor onboarding. Prototypes can deploy in 4–6 weeks, but we do not recommend going live on a prototype when vendors' revenue depends on uptime.

Your Marketplace Is Either Built to Survive Scale — Or It Is Not

If your vendor count is growing but your AWS bill is growing faster, or if your payout reconciliation still involves a Friday afternoon Excel file, you are sitting on a structural problem. Book a free 15-Minute AWS Architecture Audit — we will identify your biggest infrastructure leak in the first call.